Your marketing VP just asked: “What’s our ROI on AI search?” You stare at scattered spreadsheets tracking citations from three platforms, manual notes about positioning, and gut feelings about competitive performance. No framework. No system. No answer.

This measurement chaos is killing strategic decision-making across organizations. AI search measurement frameworks aren’t nice-to-haves anymore—they’re the infrastructure separating companies that adapt successfully from those guessing their way through the AI revolution.

What Are AI Search Measurement Frameworks

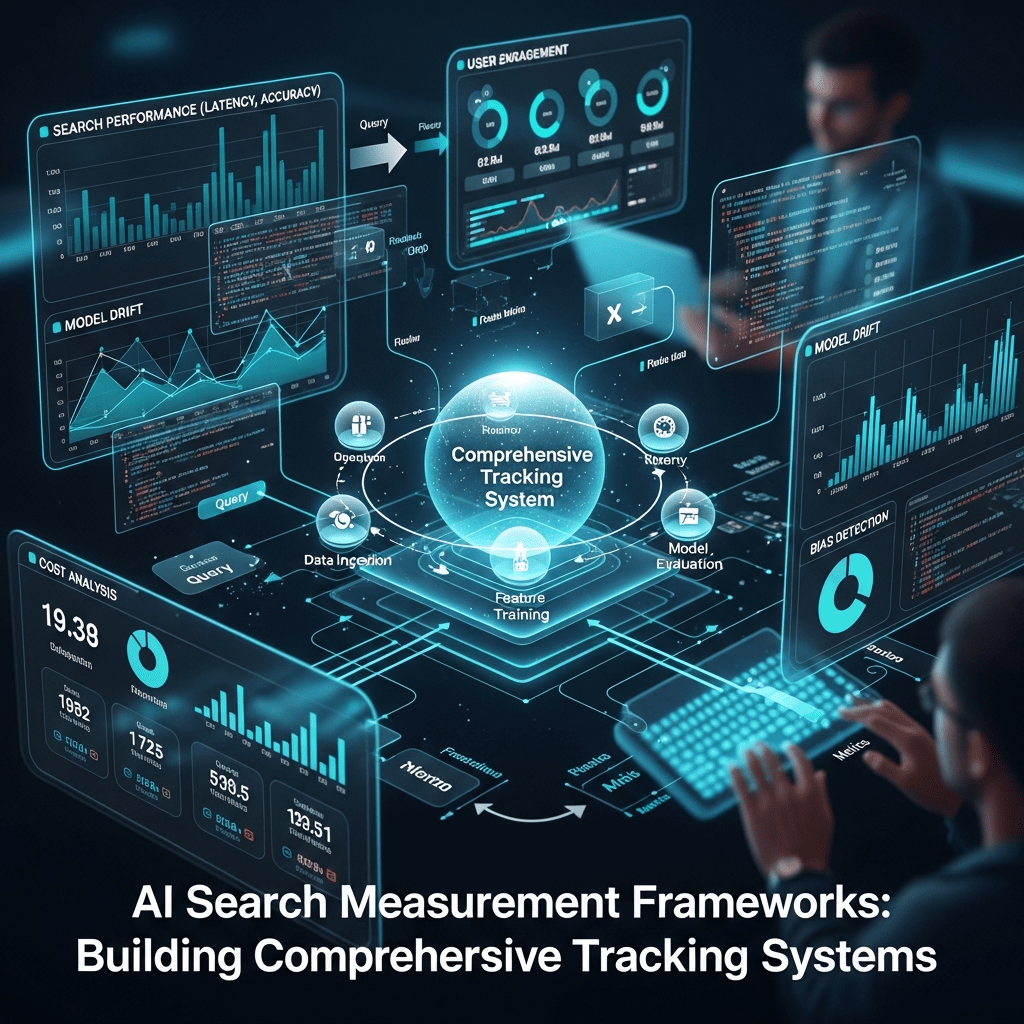

AI search measurement frameworks are structured approaches to collecting, organizing, analyzing, and acting on AI search performance data across generative platforms. They integrate multiple metrics into one cohesive measurement system that drives strategic decisions.

Think of frameworks as the architecture underlying your measurement efforts. Individual metrics are data points. Frameworks are the blueprints that organize those points into actionable intelligence.

Effective frameworks include:

- Metric hierarchies defining primary, secondary, and tertiary KPIs

- Data collection protocols ensuring consistent, reliable measurement

- Analysis methodologies transforming data into insights

- Decision rules connecting insights to actions

- Integration points linking AI metrics with business outcomes

According to Gartner’s 2024 research, 70% of enterprises will use generative AI by 2025, yet only 23% have established measurement frameworks. That gap represents both opportunity and vulnerability.

Why Ad Hoc Measurement Fails

Most organizations start AI tracking reactively: “Let’s check if ChatGPT mentions us.” Then Perplexity. Then Google AI Overviews. Each platform gets tracked differently, at different frequencies, with incompatible metrics.

This measurement patchwork creates critical problems:

Strategic Blindspots emerge when inconsistent tracking misses important patterns. You notice citation declines on ChatGPT but miss simultaneous gains on Perplexity, misinterpreting overall performance.

Resource Waste compounds as teams duplicate efforts, track vanity metrics, and analyze data that doesn’t influence decisions. Without frameworks, 60-70% of measurement effort produces zero strategic value.

Decision Paralysis sets in when executives face contradictory metrics without clear hierarchy. Citations up but positioning down. One platform strong, another weak. Which matters more?

BrightEdge research found companies with formalized measurement frameworks make strategic pivots 4.1x faster than those with ad hoc tracking. Structure accelerates decision velocity.

Core Framework Components

Metric Hierarchy and Prioritization

The foundation of any GEO (generative engine optimization) measurement framework is clear metric prioritization that defines what matters most:

Primary Metrics (Track Weekly)

- Citation frequency rate across target queries

- Share of voice versus top 3 competitors

- Average citation position

- Citation quality score (context + positioning + accuracy)

Secondary Metrics (Track Monthly)

- Query coverage percentage

- Multi-platform presence rate

- Citation durability index

- Competitive win rate (positioning)

Tertiary Metrics (Track Quarterly)

- Citation context evolution

- Platform-specific penetration rates

- Content type performance distribution

- Time-to-citation velocity

Business Outcome Metrics (Track Monthly)

- Brand search volume changes

- AI-attributed pipeline contribution

- Customer acquisition cost shifts

- Market share movements

This hierarchy prevents tracking everything equally (which means nothing gets attention) while ensuring critical metrics receive consistent monitoring.
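As a rough illustration, three of the primary metrics can be computed from a simple log of observations. This is a minimal sketch, not a standard definition: the `Observation` record shape, the brand names, and the exact formulas (for example, share of voice counted only against the competitors you track) are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Observation:
    """One tracked query checked once on one platform (hypothetical record shape)."""
    query: str
    platform: str
    cited_brands: List[str]  # brands in the order the answer cited them


def citation_frequency_rate(observations: List[Observation], brand: str) -> float:
    """Share of observations in which the brand is cited at least once."""
    if not observations:
        return 0.0
    hits = sum(1 for o in observations if brand in o.cited_brands)
    return hits / len(observations)


def share_of_voice(observations: List[Observation], brand: str,
                   competitors: List[str]) -> float:
    """Brand citations divided by citations of the brand plus tracked competitors."""
    tracked = {brand, *competitors}
    total = sum(1 for o in observations for b in o.cited_brands if b in tracked)
    ours = sum(1 for o in observations for b in o.cited_brands if b == brand)
    return ours / total if total else 0.0


def average_citation_position(observations: List[Observation],
                              brand: str) -> Optional[float]:
    """Mean 1-based position of the brand, over observations where it appears."""
    positions = [o.cited_brands.index(brand) + 1
                 for o in observations if brand in o.cited_brands]
    return sum(positions) / len(positions) if positions else None


# Illustrative data only; "Acme" and "Rival" are made-up brands.
observations = [
    Observation("best crm", "perplexity", ["Acme", "Rival"]),
    Observation("best crm", "chatgpt", ["Rival", "Acme", "Other"]),
    Observation("crm pricing", "perplexity", ["Rival"]),
    Observation("crm setup", "perplexity", []),
]

print(citation_frequency_rate(observations, "Acme"))    # 0.5
print(share_of_voice(observations, "Acme", ["Rival"]))  # 0.4
print(average_citation_position(observations, "Acme"))  # 1.5
```

Whatever definitions you choose, write them down and keep them fixed, since changing a formula partway through breaks trend comparability.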

Data Collection Architecture

Systematic AI tracking requires standardized collection protocols:

Query Universe Definition

- 50-100 core queries tracked weekly (high-value, high-frequency)

- 100-200 secondary queries tracked monthly (medium-value, category coverage)

- 200-500 long-tail queries tracked quarterly (opportunity identification)

Platform Coverage

- ChatGPT (web browsing enabled when testing)

- Perplexity AI (primary focus due to transparent citations)

- Google AI Overviews (requires authenticated Google Search)

- Claude (secondary, harder to track systematically)

Collection Frequency

- High-priority queries: Weekly automated collection

- Core query set: Monthly comprehensive analysis

- Competitive benchmarks: Monthly parallel tracking

- Deep-dive audits: Quarterly manual validation

Data Validation

- Monthly spot-checks comparing automated vs manual results

- Quarterly inter-rater reliability testing for qualitative metrics

- Continuous monitoring for collection errors or platform changes

Consistent collection protocols ensure trend data reliability. Changing methodology mid-stream destroys comparability.
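One way to keep collection cadence consistent is to encode it as data rather than rely on memory. The sketch below is an assumption-laden example: the tier names (`core`, `secondary`, `long_tail`) and the day counts approximating weekly, monthly, and quarterly are illustrative choices, not part of any tool.

```python
from datetime import date
from typing import Dict, List

# Approximate cadences in days for each query tier (assumed values:
# weekly = 7, monthly = 30, quarterly = 90).
CADENCE_DAYS: Dict[str, int] = {"core": 7, "secondary": 30, "long_tail": 90}


def due_tiers(last_run: Dict[str, date], today: date) -> List[str]:
    """Return the tiers whose last collection is at least one cadence old."""
    return [
        tier
        for tier, days in CADENCE_DAYS.items()
        if (today - last_run[tier]).days >= days
    ]


# Example: every tier was last collected on 1 January.
last_run = {tier: date(2025, 1, 1) for tier in CADENCE_DAYS}
print(due_tiers(last_run, date(2025, 2, 3)))  # ['core', 'secondary']
```

Running a check like this on a schedule makes a skipped collection visible instead of silently leaving a gap in the trend data.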

Analysis Methodologies

Raw data means little without defined analysis methods:

Trend Analysis

- Month-over-month changes in primary metrics

- Quarter-over-quarter strategic shifts

- Year-over-year competitive positioning evolution

- Velocity calculations (rate of change in key metrics)

Comparative Analysis

- Your performance vs. top 3 competitors

- Platform-specific performance variations

- Query category performance distribution

- Content type effectiveness comparison

Correlation Analysis

- AI metrics vs. traditional SEO performance

- Citation metrics vs. business outcomes (leads, revenue, market share)

- Investment levels vs. improvement rates

- Competitive activity vs. your performance shifts

Predictive Modeling

- Forecasting citation rates based on content velocity

- Predicting share of voice shifts from competitive activity

- Modeling business impact from positioning improvements

- Identifying early warning signals for competitive displacement

Analysis transforms measurement from historical reporting into strategic intelligence.
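The trend calculations above reduce to simple arithmetic on a time series. Here is a minimal sketch, assuming one metric value per period; treating "velocity" as the average absolute change per period is one reasonable reading, not a fixed definition.

```python
from typing import List, Optional


def period_over_period(series: List[float]) -> List[Optional[float]]:
    """Relative change between consecutive periods (None if the prior value is 0)."""
    return [
        (cur - prev) / prev if prev else None
        for prev, cur in zip(series, series[1:])
    ]


def velocity(series: List[float]) -> float:
    """Average absolute change per period across the whole series."""
    if len(series) < 2:
        return 0.0
    return (series[-1] - series[0]) / (len(series) - 1)


# Hypothetical monthly share-of-voice values (as fractions).
monthly_sov = [0.20, 0.22, 0.25, 0.24]
print(period_over_period(monthly_sov))
print(velocity(monthly_sov))
```

The same two functions work for quarter-over-quarter or year-over-year views if you pass in a series aggregated at that grain.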

Decision Rules and Action Triggers

Frameworks must connect measurement to action through explicit decision rules:

Defensive Triggers (Prevent Deterioration)

- If share of voice declines >5% monthly → Competitive analysis sprint

- If average position drops >0.5 → Content authority audit

- If citation frequency falls >15% → Platform-specific investigation

- If negative citations appear 3+ times → Reputation response protocol

Offensive Triggers (Capitalize on Opportunities)

- If competitor share of voice (SOV) drops >10% → Aggressive content targeting their weaknesses

- If new query categories emerge → Early-mover content creation

- If positioning improves >1.0 in category → Double down with additional content

- If multi-platform presence exceeds 50% → Feature in sales/marketing materials

Investment Triggers (Resource Allocation)

- If primary metrics stagnate 3+ months → Increase content budget 25%

- If competitive gaps >20 points SOV → Hire specialized AI SEO resources

- If measurement scalability issues → Invest in enterprise tracking platforms

- If business correlation unclear → Implement attribution modeling

Decision rules prevent analysis paralysis and ensure measurement drives action consistently.
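Decision rules are easiest to apply consistently when they are written down as data. The following sketch encodes the four defensive triggers; the metric key names are hypothetical, and two interpretations are assumptions: the share of voice and citation frequency thresholds are read as relative percentage changes, and a position "drop" is read as the average position number rising by more than 0.5 (for example, from 2.0 to 2.6).

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Rule:
    name: str
    condition: Callable[[Dict[str, float]], bool]
    action: str


# Thresholds come from the defensive triggers; the metric keys are assumed names.
DEFENSIVE_RULES: List[Rule] = [
    Rule("sov_decline", lambda m: m["sov_change_pct"] < -5,
         "Competitive analysis sprint"),
    Rule("position_drop", lambda m: m["avg_position_change"] > 0.5,
         "Content authority audit"),
    Rule("citation_fall", lambda m: m["citation_freq_change_pct"] < -15,
         "Platform-specific investigation"),
    Rule("negative_citations", lambda m: m["negative_citations"] >= 3,
         "Reputation response protocol"),
]


def triggered_actions(metrics: Dict[str, float],
                      rules: List[Rule] = DEFENSIVE_RULES) -> List[str]:
    """Return the action for every rule whose condition holds."""
    return [rule.action for rule in rules if rule.condition(metrics)]


# Hypothetical month: SOV down 6%, position nearly flat, citations down 20%.
this_month = {
    "sov_change_pct": -6.0,
    "avg_position_change": 0.2,
    "citation_freq_change_pct": -20.0,
    "negative_citations": 1,
}
print(triggered_actions(this_month))
# ['Competitive analysis sprint', 'Platform-specific investigation']
```

Offensive and investment triggers can be added as further rule lists in the same shape, which keeps thresholds reviewable in one place.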

Building Framework Maturity Models

Stage 1: Foundation (Months 1-3)

Measurement Capabilities

- Manual tracking of 20-30 highest-priority queries

- Basic citation frequency and positioning documentation

- Simple competitive comparison (you vs. top competitor)

- Spreadsheet-based data management

Analysis Capabilities

- Month-over-month trending for core metrics

- Basic share of voice calculation

- Identification of obvious competitive gaps

Decision Capabilities

- Qualitative assessment of what’s working/not working

- Content prioritization based on clear opportunities

- Quarterly strategic reviews

Resource Requirements

- 4-6 hours weekly for data collection and analysis

- 1 person with AI search knowledge

- Free tools (spreadsheets, browser testing)

This foundation stage establishes baseline data and validates which metrics matter before investing in sophisticated infrastructure.

Stage 2: Systematization (Months 4-9)

Measurement Capabilities

- Semi-automated tracking of 50-100 queries

- Structured quality scoring (context, positioning, accuracy)

- Top 3 competitor benchmarking

- Database for historical trend analysis

Analysis Capabilities

- Velocity calculations and trend forecasting

- Platform-specific performance breakdowns

- Query category comparative analysis

- Correlation analysis with traditional SEO metrics

Decision Capabilities

- Documented decision rules for common scenarios

- Monthly strategic reviews with clear action items

- Content optimization based on performance patterns

Resource Requirements

- 8-12 hours weekly for collection, analysis, reporting

- 1-2 people with technical and strategic skills

- Semi-automated tools ($200-500/month)

- Developer time for custom automation (20-40 hours)

Systematization creates repeatable processes and reduces manual effort through selective automation.

Stage 3: Sophistication (Months 10-18)

Measurement Capabilities

- Automated tracking of 200+ queries across all platforms

- Advanced quality metrics (framing, durability, context evolution)

- Comprehensive competitive intelligence (5-8 competitors)

- Integration with business intelligence systems

Analysis Capabilities

- Predictive modeling of performance trajectories

- Attribution modeling connecting AI metrics to revenue

- Competitive displacement early warning systems

- ROI analysis by content type and topic

Decision Capabilities

- Automated alerting for significant metric shifts

- Real-time dashboards for executive visibility

- AI-informed content calendars and prioritization

- Quarterly board-level strategic reporting

Resource Requirements

- 15-20 hours weekly for analysis and strategy (automation handles collection)

- 2-3 person dedicated team

- Enterprise platforms ($5,000-15,000+ annually)

- Ongoing development for custom integrations

Sophisticated frameworks treat AI search as a core channel with dedicated resources and executive visibility.

Stage 4: Optimization (Months 18+)

Measurement Capabilities

- Real-time tracking across emerging platforms

- Advanced attribution modeling with multi-touch analysis

- Predictive competitive intelligence

- AI-powered anomaly detection and insights

Analysis Capabilities

- Machine learning models predicting optimal content strategies

- Scenario modeling for strategic planning

- Cross-channel integration (AI search + traditional + social + paid)

- Cohort analysis of AI-influenced customer segments

Decision Capabilities

- AI-driven content recommendations

- Dynamic resource allocation based on performance

- Proactive competitive countermeasures

- Integration with product and sales strategies

Resource Requirements

- Full-time team (3-5 people)

- Custom enterprise solutions or agency partnerships

- Significant technology investment ($25,000-100,000+ annually)

- Executive sponsorship and organizational integration

Optimization-stage frameworks treat AI search measurement as strategic infrastructure comparable to traditional analytics investments.

Integration with Existing Marketing Analytics

Connecting AI and Traditional SEO Metrics

Your framework must bridge AI and traditional search measurement:

Correlation Analysis

- Do improving AI citations predict rising organic rankings?

- Does citation positioning correlate with featured snippet capture?

- How do AI platforms treat content that ranks well traditionally?

Unified Dashboards

- Side-by-side comparison of AI citations and organic traffic

- Share of voice in AI vs. impression share in traditional search

- Combined visibility scores incorporating both channels

Resource Allocation Models

- Investment tradeoffs between traditional SEO and AI optimization

- Content ROI comparison across both channels

- Audience-based channel prioritization

One enterprise found their B2B audience had shifted 60% toward AI platforms for research while their consumer audience remained 70% traditional search. This insight drove differentiated strategies by segment rather than blanket approaches.
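The correlation questions and the combined visibility score in this section can be prototyped in a few lines. This is a sketch under assumptions: Pearson correlation is one common choice, not something the framework prescribes, the monthly figures are invented, and the `ai_weight` blend is an arbitrary parameter you would set from your own audience mix.

```python
from math import sqrt
from typing import List


def pearson(xs: List[float], ys: List[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


def combined_visibility(ai_sov: float, organic_share: float,
                        ai_weight: float = 0.5) -> float:
    """Weighted blend of AI share of voice and organic impression share."""
    return ai_weight * ai_sov + (1 - ai_weight) * organic_share


# Invented monthly values: AI citation rate and organic clicks.
citation_rate = [0.18, 0.21, 0.25, 0.24, 0.30]
organic_clicks = [4100, 4300, 4800, 4700, 5200]
print(pearson(citation_rate, organic_clicks))
print(combined_visibility(ai_sov=0.30, organic_share=0.50, ai_weight=0.4))
```

A high coefficient on a handful of monthly points is only suggestive. Treat it as a prompt for further testing, not as proof that one channel drives the other.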

Attribution Modeling for AI Search

Connect AI metrics to business outcomes through systematic attribution:

First-Touch Attribution

- Track users whose first interaction is AI-driven brand search

- Measure pipeline from “discovered via AI” cohorts

- Calculate CAC for AI-attributed customers

Multi-Touch Attribution

- Identify AI’s role in customer journeys (awareness vs. consideration vs. decision)

- Weight AI touchpoints appropriately in attribution models

- Measure assist rates where AI contributes but doesn’t drive direct conversion

Incrementality Testing

- Compare conversion rates of AI-aware vs. unaware cohorts

- A/B test marketing to segments with/without AI visibility

- Measure sales cycle differences for AI-influenced prospects

According to SEMrush research, companies connecting AI citations to revenue data make 2.7x more confident investment decisions than those tracking metrics in isolation.
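To make multi-touch attribution and assist rates concrete, here is a minimal sketch. It assumes each closed deal is recorded as an ordered list of channel touchpoints plus a deal value, uses linear (equal-credit) attribution as one simple weighting among many, and uses `ai_search` as a made-up channel label.

```python
from typing import Dict, List, Tuple

Journey = Tuple[List[str], float]  # (ordered touchpoints, deal value)


def linear_attribution(journeys: List[Journey]) -> Dict[str, float]:
    """Split each deal's value equally across its touchpoints."""
    credit: Dict[str, float] = {}
    for touchpoints, value in journeys:
        if not touchpoints:
            continue
        share = value / len(touchpoints)
        for channel in touchpoints:
            credit[channel] = credit.get(channel, 0.0) + share
    return credit


def assist_rate(journeys: List[Journey], channel: str = "ai_search") -> float:
    """Share of converting journeys where the channel appears but is not last touch."""
    converting = [t for t, _ in journeys if t]
    if not converting:
        return 0.0
    assists = sum(
        1 for t in converting if channel in t[:-1] and t[-1] != channel
    )
    return assists / len(converting)


# Invented closed-won deals.
journeys = [
    (["ai_search", "organic", "email"], 9000.0),
    (["paid", "ai_search"], 4000.0),
    (["organic"], 1000.0),
]
print(linear_attribution(journeys))
# {'ai_search': 5000.0, 'organic': 4000.0, 'email': 3000.0, 'paid': 2000.0}
print(assist_rate(journeys))
```

Position-based or time-decay weighting would change only how `share` is computed, so the same structure supports comparing models side by side.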

Customer Journey Integration

Map AI touchpoints throughout buyer journeys:

Awareness Stage

- AI citations generating initial brand awareness

- Share of voice in problem/solution exploration queries

- Positioning in educational content responses

Consideration Stage

- Citations in comparison and evaluation queries

- Positioning when users research alternatives

- Presence in detailed feature/capability questions

Decision Stage

- Citations in pricing and implementation queries

- Positioning in “best [solution] for [use case]” queries

- Presence when users seek final validation

Track conversion rates and velocity by journey stage where AI played a role. This reveals where AI drives most impact, informing content strategy and resource allocation.

Platform-Specific Framework Adaptations

ChatGPT Measurement Protocols

ChatGPT’s variability requires an adapted tracking approach:

Testing Standardization

- Always use web browsing mode when available

- Document whether browsing was enabled in data

- Test from consistent accounts (premium vs. free affects results)

- Record conversation history effects on citations

Citation Detection

- Explicit footnoted references (when browsing enabled)

- Implicit influence through unique phrasing/frameworks

- Recommendation language analysis (“I recommend” vs. “you might consider”)

Quality Assessment

- Accuracy validation (ChatGPT synthesis sometimes mangles nuance)

- Framing analysis (positive authority vs. neutral mention)

- Competitive positioning when multiple sources cited

ChatGPT’s training data cutoff and inconsistent citation behavior make it the hardest platform to track reliably. Focus on directional trends over absolute precision.

Perplexity Measurement Protocols

Perplexity’s transparent citation system enables the most rigorous tracking:

Citation Capture

- Numbered source positions [1], [2], [3] easily documented

- In-text citation frequency (how many times each source cited)

- Follow-up question persistence tracking

Quality Metrics

- Click-through estimation based on position research

- Source diversity (sole source vs. one of many)

- Recency advantage tracking (fresh content performance)

Competitive Analysis

- Head-to-head positioning vs. specific competitors

- Share of voice across query sets

- Category-specific dominance patterns

Use Perplexity as your primary platform for establishing baseline measurement protocols before expanding to others, then fold those protocols into your broader AI search visibility tracking strategy.
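Because the citations are numbered, source position and in-text citation frequency can be extracted with a regular expression. The sketch below assumes you have already saved an answer as plain text containing bracketed markers such as `[1]`, along with the ordered list of source URLs; how you export that text is up to you, and the URLs shown are placeholders.

```python
import re
from collections import Counter
from typing import Dict, List
from urllib.parse import urlparse


def citation_counts(answer_text: str, sources: List[str]) -> List[Dict[str, object]]:
    """Count in-text [n] markers and pair them with each source's list position."""
    counts = Counter(int(n) for n in re.findall(r"\[(\d+)\]", answer_text))
    return [
        {
            "position": position,
            "domain": urlparse(url).netloc,
            "in_text_citations": counts.get(position, 0),
        }
        for position, url in enumerate(sources, start=1)
    ]


# Placeholder answer text and source list.
answer = "Tool A leads the category [1][2]. Pricing varies [2]. See also [3]."
sources = [
    "https://example.com/a",
    "https://rival.example/b",
    "https://other.example/c",
]
for row in citation_counts(answer, sources):
    print(row)
```

Storing one such row per source per check gives you the raw material for head-to-head positioning and share of voice across a query set.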

Google AI Overviews Measurement Protocols

Google’s hybrid AI + traditional search creates unique measurement challenges:

AI Overview Trigger Tracking

- Which queries generate AI Overviews (constantly evolving)

- Your presence in triggered overviews vs. absence

- Position within overview source lists

Traditional SERP Integration

- Dual presence (in overview + organic results)

- Traffic attribution (clicks from overview vs. organic)

- Impression share including AI and traditional components

Evolution Monitoring

- Google rapidly iterates AI Overview formats and triggers

- Weekly monitoring for significant changes

- Platform communication monitoring for announced updates

Google’s AI features change fastest among major platforms. Budget additional resources for adaptation and validation.

Real-World Framework Implementation

Case Study: $500M SaaS Company

A marketing automation platform built comprehensive AI search measurement frameworks over 18 months:

Phase 1 (Months 1-3): Foundation

- Manual tracking: 30 queries, 2 platforms (ChatGPT, Perplexity)

- Basic metrics: citation frequency, rough positioning

- Resource: 1 person, 5 hours/week, spreadsheets

- Key learning: Perplexity citations correlated strongly with demo requests

Phase 2 (Months 4-9): Systematization

- Semi-automated: 75 queries, 3 platforms (added Google AI)

- Structured metrics: share of voice (SOV), average citation position (ACP), quality scores, competitive benchmarking

- Resource: 2 people, 12 hours/week, custom scripts + databases

- Key learning: Competitor A dominated “integration” queries despite inferior product

Phase 3 (Months 10-15): Sophistication

- Fully automated: 200 queries, 4 platforms (added Claude tracking)

- Advanced metrics: attribution modeling, predictive forecasting, ROI analysis

- Resource: 3-person team, enterprise platform ($12,000/year), BI integration

- Key learning: AI-influenced deals closed 26 days faster with 34% higher ACV

Phase 4 (Months 16-18): Optimization

- Real-time: 350+ queries, emerging platform monitoring

- AI-driven insights: ML recommendations, automated content prioritization

- Resource: 4-person team, custom solutions, executive dashboard

- Key learning: AI framework insights drove 32% of content strategy decisions

Business Impact: Attribution modeling showed AI search contributed to 41% of enterprise pipeline. Share of voice increased from 18% to 39%. AI-influenced deals showed 23% higher win rates and 18% higher customer LTV.

Framework ROI: $2.8M in measurable pipeline impact versus $380K in framework investment (people + technology), roughly a 7.4x return.
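The return figure is straightforward arithmetic on the two numbers reported above. The short check below also shows the net ROI variant. It assumes pipeline impact is treated as the return, which is more generous than counting only closed revenue.

```python
pipeline_impact = 2_800_000  # reported measurable pipeline impact, in dollars
framework_cost = 380_000     # reported people + technology investment, in dollars

# Gross multiple: how many dollars of pipeline per dollar invested.
return_multiple = pipeline_impact / framework_cost

# Net ROI: gain after subtracting the investment, relative to the investment.
net_roi = (pipeline_impact - framework_cost) / framework_cost

print(f"{return_multiple:.1f}x")  # 7.4x
print(f"{net_roi:.0%}")           # 637%
```

State which of the two you are reporting, since a "7.4x return" and a "637% ROI" describe the same result.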

Case Study: Healthcare Content Publisher

A medical information site serving 40M annual visitors built frameworks focused on citation quality:

Initial State: Ad hoc tracking, 15 queries manually checked monthly, no competitive intelligence, no business integration.

6-Month Framework Build:

- Systematized tracking: 120 health-related queries across 3 platforms

- Quality emphasis: accuracy scoring, medical expertise signals, HIPAA compliance mentions

- Physician partnerships: tracking how MD credentials affected positioning

- Patient journey mapping: connecting citations to different health research stages

12-Month Results:

- Citation accuracy improved from 67% to 94% through quality focus

- Average position improved from 3.8 to 2.2 as medical credentials strengthened authority

- Share of voice increased from 12% to 38% in core health topics

Business Impact: Direct traffic increased 89% (attributed to brand awareness from AI citations). Advertising revenue jumped 156% as health brands perceived them as more authoritative. Framework enabled $4.2M revenue increase traceable to improved AI positioning.

Common Framework Building Mistakes

Over-Engineering Before Validation

The biggest mistake: building sophisticated frameworks before validating what matters.

Companies invest 6 months developing comprehensive tracking systems for 500 queries across 6 platforms before confirming which metrics actually correlate with business outcomes.

Start simple. Track 20-30 queries manually for 90 days. Validate metrics-to-business correlation. Then systematize what proves valuable.

Premature sophistication wastes resources and delays learning.

Tracking Without Acting

Measurement frameworks that don’t drive decisions are expensive dashboards nobody uses.

Build decision rules INTO your framework from day one. Define explicit thresholds triggering specific actions. Connect measurement to resource allocation, content prioritization, and strategic planning.

If six months of tracking hasn’t changed a single strategic decision, your framework has failed regardless of sophistication.

Ignoring Data Quality

Sophisticated frameworks built on unreliable data produce confidently wrong insights.

Invest heavily in data quality from the start:

- Validation protocols comparing automated vs. manual collection

- Inter-rater reliability testing for qualitative metrics

- Regular audits catching collection errors early

- Documentation of platform changes affecting measurement

Bad data kills frameworks faster than missing features.
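Inter-rater reliability for qualitative metrics such as citation framing can be quantified with Cohen's kappa, which corrects raw agreement for agreement expected by chance. The source does not name a specific statistic, so this is one common option; the labels and ratings below are invented.

```python
from collections import Counter
from typing import List


def cohens_kappa(rater_a: List[str], rater_b: List[str]) -> float:
    """Cohen's kappa for two raters labeling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty item set")
    n = len(rater_a)
    # Observed agreement: fraction of items given the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's label frequencies, summed.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)


# Two reviewers scoring the framing of the same six citations.
reviewer_1 = ["pos", "pos", "neu", "neg", "pos", "neu"]
reviewer_2 = ["pos", "neu", "neu", "neg", "pos", "neu"]
print(round(cohens_kappa(reviewer_1, reviewer_2), 3))  # 0.739
```

A low kappa usually points to an ambiguous scoring rubric, not careless raters, so tighten the definitions before retraining people.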

Building in Isolation

Frameworks developed without stakeholder input produce metrics nobody cares about.

Interview executives, sales leaders, product managers, and customer success before designing frameworks. What decisions do they need to make? What questions keep them awake? What would make them confident investing more in AI search?

Build frameworks that answer your organization’s specific strategic questions, not generic “best practices” from the internet.

Tools and Technologies for Framework Implementation

Spreadsheet-Based Frameworks (Months 1-6)

- Tools: Google Sheets, Excel, Airtable

- Capabilities: Manual data entry, basic calculations, simple visualizations

- Cost: Free to $20/month

- Best For: Foundation-stage frameworks validating metrics

Start here. Prove value before investing in sophisticated infrastructure.

Semi-Automated Frameworks (Months 6-15)

- Tools: Python/JavaScript scripts, Selenium/Puppeteer, PostgreSQL/MySQL databases

- Capabilities: Automated collection, structured storage, programmatic analysis

- Cost: $200-1,000/month (tools + developer time)

- Best For: Systematization-stage frameworks scaling beyond manual limits

Requires technical resources but provides significant efficiency gains.
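For a sense of what structured storage looks like at this stage, here is a small sketch using Python's built-in `sqlite3` module as a stand-in for PostgreSQL or MySQL. The table and column names are hypothetical, and the sample rows are invented.

```python
import sqlite3
from typing import List, Tuple

# Hypothetical schema: one row per brand per query check.
SCHEMA = """
CREATE TABLE IF NOT EXISTS observations (
    id INTEGER PRIMARY KEY,
    collected_on TEXT NOT NULL,      -- ISO date, e.g. 2025-01-06
    platform TEXT NOT NULL,
    query TEXT NOT NULL,
    brand TEXT NOT NULL,
    position INTEGER,                -- NULL when the brand was not cited
    browsing_enabled INTEGER         -- 1 or 0; relevant for ChatGPT checks
)
"""


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the observation store."""
    conn = sqlite3.connect(path)
    conn.execute(SCHEMA)
    return conn


def monthly_citation_rate(conn: sqlite3.Connection,
                          brand: str) -> List[Tuple[str, float]]:
    """Fraction of checks per month in which the brand was cited."""
    return conn.execute(
        """
        SELECT substr(collected_on, 1, 7) AS month,
               AVG(position IS NOT NULL) AS rate
        FROM observations
        WHERE brand = ?
        GROUP BY month
        ORDER BY month
        """,
        (brand,),
    ).fetchall()


conn = open_store()
conn.executemany(
    "INSERT INTO observations "
    "(collected_on, platform, query, brand, position, browsing_enabled) "
    "VALUES (?, ?, ?, ?, ?, ?)",
    [
        ("2025-01-06", "perplexity", "best crm", "Acme", 2, None),
        ("2025-01-13", "perplexity", "best crm", "Acme", None, None),
        ("2025-02-03", "chatgpt", "best crm", "Acme", 1, 1),
    ],
)
print(monthly_citation_rate(conn, "Acme"))  # [('2025-01', 0.5), ('2025-02', 1.0)]
```

Keeping raw observations and deriving metrics with queries means you can change a metric definition later without recollecting data.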

Enterprise Framework Platforms (Months 15+)

- Tools: BrightEdge Generative Parser, Authoritas, custom BI integrations

- Capabilities: Real-time tracking, competitive intelligence, executive dashboards, attribution modeling

- Cost: $10,000-50,000+ annually

- Best For: Sophistication and optimization stages with executive commitment

Only invest after proving framework ROI and securing organizational buy-in.

Pro Tips for Framework Success

Start Simple Philosophy: “Every successful enterprise framework I’ve seen started with a 20-query spreadsheet tracked manually for 90 days. Complexity comes after validation, not before. Most framework failures come from over-engineering before proving value.” – Rand Fishkin, SparkToro Founder

Business Integration Imperative: “Your framework succeeds or fails based on one question: Does it change strategic decisions? If executives review your metrics and then make the same choices they would have made without them, you’ve built an expensive dashboard, not a strategic framework.” – Avinash Kaushik, Google Analytics Evangelist

Quality Over Quantity: “Companies tracking 500 queries badly get less strategic value than those tracking 50 queries excellently. Framework quality—data reliability, business integration, decision utility—matters infinitely more than scope. Perfect 50 queries, then scale.” – Lily Ray, SEO Director at Amsive Digital

FAQ

How long does it take to build an effective AI search measurement framework?

Plan 12-18 months for comprehensive frameworks. Foundation stage (months 1-3) establishes basics. Systematization (months 4-9) creates repeatable processes. Sophistication (months 10-18) adds advanced capabilities. Don’t rush—frameworks built too quickly lack validation and organizational buy-in. Better to have a simple framework that drives decisions than a sophisticated one nobody uses.

What’s the minimum viable framework for getting started?

Track 20-30 high-value queries manually across 2 platforms (Perplexity + ChatGPT or Google AI) monthly. Measure citation frequency, average position, and basic quality scores. Track your top competitor for context. Document in spreadsheets. Resource: 4-6 hours monthly. This provides enough data for initial strategic insights while validating which metrics matter before expanding.

Should I build frameworks in-house or use enterprise platforms?

Start in-house with manual/semi-automated approaches for 6-12 months. Validate metrics, prove ROI, and build organizational understanding. Then evaluate enterprise platforms based on your specific needs and scale. Platforms work best when you know exactly what you need—buying tools before that understanding wastes money and creates shelfware.

How do I get executive buy-in for framework investment?

Start with a minimal-investment foundation framework that generates preliminary insights. Present business impact evidence (correlation between AI metrics and pipeline/revenue). Show competitive intelligence revealing strategic threats. Quantify the opportunity cost of not measuring. Frame the investment as both risk management (competitors ARE tracking) and competitive advantage. Most executives fund proven value faster than theoretical benefits.

What metrics should every framework include?

Primary: citation frequency rate, share of voice, average citation position, citation quality score. Secondary: query coverage, competitive win rate, multi-platform presence. Business: brand search volume changes, AI-attributed pipeline contribution. These core metrics work across industries and business models. Add industry-specific metrics only after mastering the fundamentals.

How do I maintain framework quality as I scale?

Implement systematic validation: monthly spot-checks (automated vs. manual), quarterly inter-rater reliability tests, continuous monitoring for platform changes. Document everything—collection protocols, quality standards, decision rules. Train team members on frameworks. Review and refine quarterly. Quality maintenance requires dedicated effort; budget 15-20% of framework time for validation and refinement.

Final Thoughts

AI search measurement frameworks aren’t just measurement infrastructure—they’re strategic capability that separates reactive organizations from those shaping AI search outcomes proactively.

The companies dominating AI search visibility three years from now will be those that built comprehensive frameworks today. They’ll have years of baseline data, proven optimization playbooks, and deeply embedded measurement driving strategy.

The companies struggling will be those that waited until measurement became “easier” or “standardized”—when everyone’s competing for the same advantages and best practices are commoditized.

Your framework doesn’t need perfection on day one. It needs to exist on day one, then systematically improve through use and validation.

Start building now. Start simple. Validate relentlessly. Scale deliberately. Your framework maturity correlates directly with your AI search competitive advantage.

The measurement infrastructure you build today becomes your strategic moat tomorrow.

Citations and Sources

- Gartner – Generative AI Adoption and Measurement Gap Research

- BrightEdge – Framework Impact on Strategic Decision Velocity

- SEMrush – Attribution Modeling and Investment Confidence

- Authoritas – AI Search Measurement and Performance Tracking

- SparkToro – Search Behavior and Measurement Best Practices

- Kaushik.net – Analytics Framework Design and Implementation
