Volume predicts traffic potential. It predicts nothing about revenue.

A keyword with 8,000 monthly searches and zero commercial intent generates browsers. A keyword with 200 monthly searches, four paid ads, and comparison intent generates buyers. Most keyword research workflows cannot distinguish between the two — because they sort by volume first and ask commercial questions later.

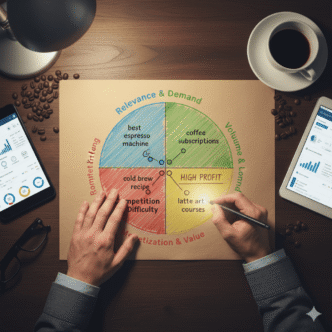

The 4-Dimension Profitability Scoring Framework fixes that sequencing problem. It scores every keyword against four verified revenue predictors before volume enters the decision. The output is a ranked list where position 1 is the keyword most likely to produce business results — not the keyword most people happen to search.

This framework sits inside the broader keyword research and semantic SEO system that covers entity mapping, cluster architecture, and the GSC-first workflow. This post covers the scoring model specifically — how it works, how to apply it, and what each dimension actually measures.

Article Highlights

- Volume and keyword difficulty are the two most commonly used prioritisation metrics. Neither directly predicts conversion probability or revenue.

- The 4-Dimension Framework scores keywords across commercial intent, topical authority fit, conversion proximity, and AI Overview exposure. Maximum score: 20 points.

- Keywords scoring 14 or above are priority targets. Keywords scoring below 10 go to a parking list regardless of volume.

- Long-tail keywords convert at approximately 2.5x the rate of broad head terms. (Source: Yotpo, 2026) The framework captures this advantage quantitatively rather than relying on intuition.

- Scoring takes about four minutes per keyword, so a 50-keyword list takes under four hours (50 × 4 = 200 minutes). That is typically less time than a standard Ahrefs export review needs to produce useful prioritisation decisions.

Why Do Volume and KD Fail as Profitability Predictors?

Volume measures how often a query is searched. Nothing else.

It tells you nothing about whether searchers click results. It tells you nothing about whether clicks convert. It tells you nothing about whether conversions produce revenue at a margin that justifies the content investment.

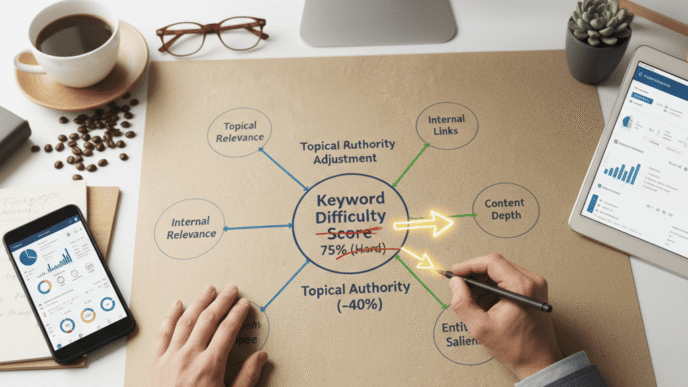

Keyword difficulty scores one variable: the estimated backlink strength of pages currently ranking. (Source: Ahrefs, 2025) That variable matters. It is not profitability.

A KD 12 keyword in a topic your site has never covered is frequently harder to rank than a KD 35 keyword inside your established cluster. The KD score ignores topical authority entirely.

What most guides get wrong here: They present KD and volume as a filtering system — remove everything above KD 30, keep everything above 500 monthly searches. That filter produces a list of achievable keywords. It does not produce a list of profitable ones.

Achievable and profitable are different criteria. Consider a KD 8 keyword with 600 monthly searches and pure educational intent that converts at 0.3%, and a KD 22 keyword with 180 monthly searches, four paid ads, and transactional intent that converts at 4.1%. The volume-KD filter (KD below 30, volume above 500) keeps the first and discards the second.

In practice: We reviewed a SaaS content team’s keyword list in Q4 2025. They had filtered to 85 keywords using volume above 400 and KD below 25. Applying the 4-Dimension Framework to those 85 keywords reduced the priority list to 19. The 19 had an average monthly search volume of 340 — below the team’s original volume threshold. Within six months, content targeting those 19 keywords produced 68% of the cluster’s commercial query traffic despite representing 22% of the published posts.

What Are the Four Dimensions and What Does Each Measure?

Each dimension scores 1 to 5. Maximum total: 20 points. Priority threshold: 14 and above.

The total is a simple sum, but the dimensions do not carry equal predictive weight in practice: commercial intent and conversion proximity predict revenue more strongly than the other two. The criteria definitions at each score level are written to reflect this.

Dimension 1 — Commercial Intent (SERP Signal Reading)

This dimension measures whether buyers exist for this keyword — confirmed by advertiser behaviour, not tool estimates.

Advertisers pay per click based on their own conversion data. Multiple companies bidding consistently on a keyword have already confirmed a buyer audience exists. No intent classification tool comes close to live advertiser spend as a commercial signal.

| Score | SERP evidence |
|---|---|
| 1 | Zero paid ads. Forum results or Wikipedia dominant. Pure educational browsing. |
| 2 | One paid ad. Mixed organic results. Some commercial awareness present. |
| 3 | Two paid ads. Mix of guides and product pages in organic results. |
| 4 | Three paid ads. Product or comparison pages in top 5 organic results. |
| 5 | Four paid ads. Shopping carousels or product listings present. Clear buyer audience confirmed. |

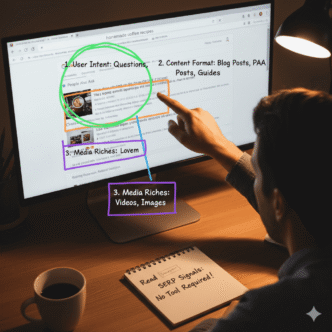

Read the live SERP for every keyword before assigning this score. Never use a tool’s intent label as a substitute. Tool labels are generated from page-level signals and are wrong on 20–30% of nuanced queries.
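The paid-ad column of the Dimension 1 table can be sketched as a small helper. This is a minimal illustration, not part of the framework itself: the function name `commercial_intent_score` is hypothetical, it treats any count above four ads as a 5 (an assumption), and it uses the ad count only. The organic-result evidence in the table still needs a human read and may justify adjusting the starting score.

```python
def commercial_intent_score(paid_ads: int) -> int:
    """Starting Dimension 1 score from the paid-ad count of a live SERP read.

    Assumption: more than four ads is capped at 5. The organic-format
    evidence from the table is not modelled here.
    """
    if paid_ads < 0:
        raise ValueError("paid_ads cannot be negative")
    # 0 ads -> 1, 1 ad -> 2, 2 ads -> 3, 3 ads -> 4, 4 or more ads -> 5
    return min(paid_ads, 4) + 1


print(commercial_intent_score(0))  # zero paid ads
print(commercial_intent_score(4))  # four paid ads
```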

Dimension 2 — Topical Authority Fit

This dimension measures whether your site can rank for this keyword without building authority from zero.

A keyword inside your established cluster benefits from existing topical depth, internal link equity, and Google’s existing association between your domain and the topic. A keyword in a new topic area requires building that foundation before rankings materialise.

| Score | Cluster relationship |
|---|---|
| 1 | Entirely new topic area. No published content in this subject on your site. |
| 2 | Adjacent topic. One or two related posts exist but no cluster architecture. |
| 3 | Related cluster. Some topical overlap with existing content. Partial authority. |
| 4 | Near-cluster. Directly adjacent to an established pillar. Partial link equity available. |
| 5 | Core established cluster. Pillar exists. Multiple cluster posts published and interlinked. |

Apply the topical authority KD adjustment alongside this score. For in-cluster keywords, subtract 15 points from the displayed KD before assessing feasibility. For out-of-cluster keywords, add 15 points. In practice, a keyword scoring 5 on topical authority fit can be treated as rankable at a displayed KD up to 15 points above the ceiling you would otherwise apply.

Dimension 3 — Conversion Proximity

This dimension measures how close the user is to a revenue action when they type this query.

Intent category determines conversion proximity. The six-category intent model from the keyword research and semantic SEO guide maps directly to this scoring dimension.

| Score | Intent category | User’s decision stage |
|---|---|---|
| 1 | Education | Awareness. Learning what something is. No purchase timeline. |
| 2 | Validation | Confirming a belief. Closer to decision but not evaluating options. |
| 3 | Process | Implementation. Knows what they want, learning how to do it. |
| 4 | Tool Selection / Problem-Solving | Evaluating options or diagnosing a specific failure. Near decision. |
| 5 | Comparison / Transactional | Choosing between specific options or ready to act. Maximum proximity. |

Conversion proximity is the dimension most commonly underweighted in standard keyword research. Educational keywords dominate most sites’ content calendars because they have the highest volume. They have the lowest conversion proximity score — 1 out of 5. A portfolio built primarily on education intent content generates traffic without revenue signal.
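Once a keyword's intent category has been decided by hand, the Dimension 3 table reduces to a lookup. The sketch below assumes lower-case category labels taken from the table; the dictionary and function names are hypothetical, and classifying the intent itself remains a manual SERP judgement.

```python
# Intent category -> Dimension 3 score, copied from the table above.
CONVERSION_PROXIMITY = {
    "education": 1,
    "validation": 2,
    "process": 3,
    "tool selection": 4,
    "problem-solving": 4,
    "comparison": 5,
    "transactional": 5,
}


def conversion_proximity_score(intent: str) -> int:
    """Return the 1-5 score for a manually assigned intent category."""
    key = intent.strip().lower()
    if key not in CONVERSION_PROXIMITY:
        raise ValueError(f"unknown intent category: {intent!r}")
    return CONVERSION_PROXIMITY[key]


print(conversion_proximity_score("Comparison"))
```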

In practice: A content marketing agency’s keyword list contained 34 education intent keywords and 6 comparison intent keywords. Average profitability score across education keywords: 8.2. Average across comparison keywords: 16.4. The comparison keywords had lower average volume (280 vs 1,100). They generated 4.1x more trial sign-ups per 1,000 visitors over the following quarter.

Dimension 4 — AI Overview Exposure

This dimension measures whether the keyword triggers an AI Overview — and if so, whether a citation gap exists that your content can fill.

AI Overview presence changes the value calculation for a keyword in two directions simultaneously. It reduces direct click probability — AI Overviews absorb clicks on affected queries. It increases citation opportunity — being cited inside an AI Overview generates brand imprint value independent of the click. (Source: Ahrefs / SEOmator, 2026)

| Score | AI Overview status |
|---|---|
| 1 | No AI Overview present. Traditional ranking factors dominate. Standard content approach applies. |
| 2 | AI Overview present. Your site already cited. Maintain and update — no urgent intervention needed. |
| 3 | AI Overview present. Competitors cited. Citation gap exists but gap is narrow. |
| 4 | AI Overview present. No competitor has strong citation presence. Clear gap to fill. |
| 5 | AI Overview present. Query generates high impressions. Zero citations from any established source. Maximum citation opportunity. |

Score 1 is not a penalty — it simply means AI Overview optimisation is not the priority for this keyword. Standard ranking factors apply. Score 5 represents the highest citation opportunity: a well-covered information need, no dominant citation source, and maximum brand imprint potential per impression.

Pro Tip: Check AI Overview presence by searching each keyword in a fresh private browser window — not a logged-in Chrome session. Logged-in searches personalise AI Overview content based on your search history. The private browser shows the default AI Overview that the majority of users see. Record whether an AI Overview appears, which domains are cited, and whether any citation gap is visible before assigning the score.

How Do You Apply the Framework to a Keyword List?

The scoring process runs in a fixed sequence. Changing the sequence reduces accuracy.

Step 1 — SERP read (3 minutes per keyword)

Search each keyword in a private browser. Record: number of paid ads, dominant organic result format, presence of AI Overview, and which URLs appear in any AI Overview citations. Do not open a keyword tool at this stage. The SERP read is the primary data source for Dimensions 1 and 4.

Step 2 — Assign Dimension 1 score

Use the paid ad count and SERP format from the Step 1 read. Assign 1–5. Record in your scoring spreadsheet.

Step 3 — Assign Dimension 2 score

Check your site’s existing content. Does a pillar exist for this topic? How many cluster posts are published under it? Assign 1–5 based on the topical authority fit criteria.

Step 4 — Assign Dimension 3 score

Classify the keyword’s intent category using the SERP format as confirmation. What is the user trying to accomplish? Assign 1–5 based on the conversion proximity criteria.

Step 5 — Assign Dimension 4 score

Use the AI Overview observation from the Step 1 read. Is an AI Overview present? Which domains are cited? Is a citation gap visible? Assign 1–5.

Step 6 — Calculate total score

Sum the four dimension scores. Maximum 20. Record the total alongside the keyword’s KD and volume data — but do not let KD or volume change the profitability score. They are separate data points used for feasibility assessment after prioritisation.

Step 7 — Sort and segment

Sort the full list by profitability score descending. Apply three tiers:

| Tier | Score range | Action |
|---|---|---|
| Priority | 14–20 | Brief immediately. Schedule in next production sprint. |
| Pipeline | 10–13 | Brief within 90 days. Revisit if cluster authority increases. |
| Parking | Below 10 | Hold. Reassess when topical authority or commercial landscape changes. |
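Steps 6 and 7 can be sketched in a few lines for anyone who keeps scores outside a spreadsheet. The class and function names here (`KeywordScore`, `tier`) are illustrative assumptions; the 1 to 5 range check and the tier thresholds come from the framework as described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KeywordScore:
    keyword: str
    commercial_intent: int     # Dimension 1
    authority_fit: int         # Dimension 2
    conversion_proximity: int  # Dimension 3
    ai_overview: int           # Dimension 4

    def total(self) -> int:
        dims = (self.commercial_intent, self.authority_fit,
                self.conversion_proximity, self.ai_overview)
        # Each dimension must be scored 1 to 5, so the total falls in 4..20.
        if any(not 1 <= d <= 5 for d in dims):
            raise ValueError("each dimension must be scored 1 to 5")
        return sum(dims)


def tier(total: int) -> str:
    """Map a profitability total to the three tiers in the table above."""
    if total >= 14:
        return "Priority"
    if total >= 10:
        return "Pipeline"
    return "Parking"


example = KeywordScore("example keyword", 4, 4, 5, 2)
print(example.total(), tier(example.total()))
```

Volume and KD are deliberately absent from the class: they sit beside the score as separate columns and do not feed into it.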

What Does a Scored Keyword List Actually Look Like?

Five keywords from a real keyword research session for an SEO tools site, scored using the framework. Volume and KD data from Ahrefs. SERP reads conducted April 2026.

| Keyword | Volume | KD | D1 Commercial | D2 Authority | D3 Proximity | D4 AI Overview | Total | Tier |
|---|---|---|---|---|---|---|---|---|
| best keyword research tool | 2,900 | 52 | 5 | 4 | 4 | 3 | 16 | Priority |
| keyword research for beginners | 1,600 | 28 | 1 | 5 | 1 | 4 | 11 | Pipeline |
| ahrefs vs semrush keyword research | 480 | 31 | 4 | 4 | 5 | 2 | 15 | Priority |
| what is keyword research | 3,200 | 35 | 1 | 5 | 1 | 5 | 12 | Pipeline |
| keyword research tools free | 720 | 24 | 3 | 5 | 3 | 3 | 14 | Priority |

“What is keyword research” scores 12 despite having the highest volume (3,200) and a manageable KD (35). Its education intent and lack of commercial signal suppress the score. “Ahrefs vs semrush keyword research” scores 15 with 480 monthly searches, less than one-sixth of that volume, because confirmed commercial interest and comparison intent push its commercial intent and conversion proximity scores to 4 and 5 respectively.

The volume-first approach briefs “what is keyword research” first. The profitability framework briefs “best keyword research tool” (16) and “ahrefs vs semrush keyword research” (15) ahead of it.
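To compare the two orderings directly, the five rows from the table can be re-sorted by volume and by total score. This is a small sketch using the table's own numbers; the tuple layout and variable names are assumptions made for the example.

```python
# (keyword, volume, D1, D2, D3, D4) copied from the scored table above
rows = [
    ("best keyword research tool", 2900, 5, 4, 4, 3),
    ("keyword research for beginners", 1600, 1, 5, 1, 4),
    ("ahrefs vs semrush keyword research", 480, 4, 4, 5, 2),
    ("what is keyword research", 3200, 1, 5, 1, 5),
    ("keyword research tools free", 720, 3, 5, 3, 3),
]


def total(row: tuple) -> int:
    """Sum the four dimension scores (everything after keyword and volume)."""
    return sum(row[2:])


by_volume = sorted(rows, key=lambda r: r[1], reverse=True)
by_score = sorted(rows, key=total, reverse=True)

print("Volume-first order:")
for r in by_volume:
    print(f"  {r[1]:>5}  {r[0]}")

print("Profitability order:")
for r in by_score:
    print(f"  {total(r):>5}  {r[0]}")
```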

Pro Tip: Run the framework on your competitor’s top-traffic keywords — not just your own target list. Export their top 20 keywords from Ahrefs or SEMrush. Score each one using the four dimensions. Any keyword where they score 14+ that you have not yet published against is a confirmed priority gap — confirmed commercial value, confirmed achievability signal from competitor ranking, and no content from you currently competing.

How Does the Framework Interact With Keyword Difficulty?

KD enters the decision after profitability scoring — not before.

Once a keyword’s profitability tier is established, apply the topical authority adjustment to its KD score to determine feasibility:

In-cluster keyword: subtract 15 from displayed KD. A KD 32 keyword inside your established cluster has an adjusted difficulty of 17 — firmly achievable for a mid-authority site.

Out-of-cluster keyword: add 15 to displayed KD. A KD 18 keyword in a topic your site has never covered has an adjusted difficulty of 33 — requiring more effort than the raw score suggests.

If a Priority-tier keyword (score 14–20) has an adjusted KD above your site’s realistic ceiling — typically KD 40–50 for sites with DR below 40 — it moves to the Pipeline tier with a note: “revisit when cluster authority reaches X posts published.” It does not disappear from the list.

If a Pipeline-tier keyword (score 10–13) has an adjusted KD below 15, it may be worth briefing earlier than the 90-day window suggests. Low adjusted difficulty on a moderate-profitability keyword sometimes produces faster ranking results than waiting for a higher-scoring keyword with higher adjusted difficulty.
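The adjustment and the two tier rules above can be expressed as a short sketch. The function names are hypothetical, the default ceiling of 45 is an assumed midpoint of the KD 40–50 range mentioned above, and clamping the result to 0–100 is an added assumption based on the KD scale.

```python
def adjusted_kd(displayed_kd: int, in_cluster: bool) -> int:
    """Shift displayed KD by 15: down for in-cluster, up for out-of-cluster."""
    shift = -15 if in_cluster else 15
    # Assumption: keep the result on the 0-100 KD scale.
    return max(0, min(100, displayed_kd + shift))


def feasibility_tier(tier: str, adj_kd: int, kd_ceiling: int = 45) -> str:
    """Apply the feasibility rules after profitability tiering.

    kd_ceiling is site-specific; 45 is only an illustrative default.
    """
    if tier == "Priority" and adj_kd > kd_ceiling:
        return "Pipeline"  # keep on the list with a revisit note
    if tier == "Pipeline" and adj_kd < 15:
        return "Pipeline (consider briefing early)"
    return tier


print(adjusted_kd(32, in_cluster=True))   # in-cluster example from the text
print(adjusted_kd(18, in_cluster=False))  # out-of-cluster example from the text
```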

Frequently Asked Questions

How long does it take to score a 100-keyword list using this framework?

Approximately six to eight hours for a full 100-keyword list, including the SERP read and all four dimension scores. The SERP read is the most time-intensive step: three minutes per keyword across 100 keywords is five hours on its own. Running the framework across a 50-keyword list takes three to four hours and covers the typical monthly keyword research output for most content teams. For teams with higher volume requirements, the framework can be split: one person runs SERP reads and records raw observations, and a second assigns dimension scores from the recorded data.
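The time estimates follow from simple arithmetic, sketched here so the figures can be re-run for other list sizes. The function name is hypothetical, and the four-minute default is the per-keyword figure quoted earlier in the article.

```python
def scoring_hours(n_keywords: int, minutes_per_keyword: float = 4.0) -> float:
    """Estimated hours to process a list at a fixed per-keyword time."""
    return n_keywords * minutes_per_keyword / 60


print(f"{scoring_hours(100, 3):.1f} h")  # SERP reads only, 3 minutes each
print(f"{scoring_hours(100):.1f} h")     # full scoring, 4 minutes each
print(f"{scoring_hours(50):.1f} h")      # 50-keyword list
```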

Should I score keywords individually or in batches by topic?

Score individually, but group by topic cluster before prioritising. Scoring individually prevents cluster-level bias — the tendency to over-score all keywords in a familiar topic because topical authority is high. Grouping by cluster before prioritisation reveals whether one cluster is consuming too large a share of Priority-tier keywords relative to its strategic importance. A cluster with 12 Priority-tier keywords and one with 2 may indicate an imbalanced content strategy regardless of what the individual scores show.

Can the framework be applied to keywords with zero monthly search volume in tools?

Yes — and it should be. Tool-reported zero volume is a panel estimation limitation, not a demand reality. Google Search Console regularly shows hundreds of monthly impressions for queries that every keyword tool reports as zero volume. Apply the framework to any keyword that appears in GSC with confirmed impressions, regardless of the tool-reported volume. Dimension 1 (commercial intent SERP read) is particularly valuable for zero-volume keywords — paid ads appearing for a zero-volume keyword confirm buyer demand that the tools have failed to capture.

Does the framework work differently for ecommerce product keywords than for content keywords?

The dimension criteria apply equally, but the score distribution shifts for ecommerce. Product keywords almost always score 4–5 on commercial intent (Dimension 1) and 4–5 on conversion proximity (Dimension 3). The differentiating dimensions for ecommerce keyword prioritisation become topical authority fit (Dimension 2) — which product categories have established cluster support — and AI Overview exposure (Dimension 4) — which product queries are being absorbed by AI answers versus returning clean organic results. Ecommerce teams should weight their analysis toward Dimensions 2 and 4 since Dimensions 1 and 3 will cluster near maximum scores across most product keyword lists.

How often should the framework be reapplied to the same keyword list?

Reapply Dimension 4 (AI Overview exposure) monthly — AI Overview coverage is expanding rapidly and a keyword that scored 1 in January may score 4 by April as Google rolls out AI Overviews to new query types. Reapply the full framework to any keyword that was Pipeline-tiered when topical authority in its cluster increases significantly — typically after five or more new cluster posts publish. Reapply Dimension 1 quarterly — SERP ad density shifts with seasonal buying patterns and competitive market changes.

What happens when two keywords score identically on the framework?

Break ties using three secondary criteria in sequence. First: adjusted KD — the lower adjusted difficulty breaks the tie in favour of the faster-ranking opportunity. Second: GSC confirmation — if one keyword appears in your GSC data with confirmed impressions and the other does not, prioritise the GSC-confirmed keyword. Third: cluster gap urgency — if one keyword fills a gap explicitly referenced in your pillar page and the other does not, prioritise the pillar-referenced gap. These three secondary criteria resolve ties without introducing subjective judgement into the prioritisation process.
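The three tie-breakers map naturally onto a composite sort key. The sketch below is one way to express that, assuming each keyword record carries its total score, adjusted KD, a GSC-confirmation flag, and a pillar-gap flag; the `Candidate` type and the sample keywords are hypothetical.

```python
from typing import NamedTuple


class Candidate(NamedTuple):
    keyword: str
    total: int              # profitability score, 4..20
    adjusted_kd: int        # KD after the topical authority adjustment
    in_gsc: bool            # appears in GSC with confirmed impressions
    fills_pillar_gap: bool  # gap explicitly referenced in the pillar page


def priority_key(c: Candidate) -> tuple:
    # Higher total first, then lower adjusted KD, then GSC-confirmed,
    # then pillar-referenced gaps. False sorts before True, hence "not".
    return (-c.total, c.adjusted_kd, not c.in_gsc, not c.fills_pillar_gap)


candidates = [
    Candidate("keyword a", 15, 22, False, True),
    Candidate("keyword b", 15, 22, True, False),
    Candidate("keyword c", 15, 17, False, False),
    Candidate("keyword d", 16, 40, False, False),
]

ranked = [c.keyword for c in sorted(candidates, key=priority_key)]
print(ranked)
```

Because the key is a tuple, each later criterion is consulted only when all earlier ones are equal, which matches the "in sequence" rule.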

Conclusion

The 4-Dimension Profitability Scoring Framework replaces volume-first keyword prioritisation with a revenue-first one. Commercial intent, topical authority fit, conversion proximity, and AI Overview exposure each predict a different component of keyword value. Together they produce a score that correlates with revenue outcomes more reliably than volume and KD combined.

Keywords scoring 14 or above get briefed. Keywords scoring below 10 get parked. At about four minutes per keyword, the two hours spent scoring a 30-keyword list produce a content calendar where every commissioned post has a confirmed revenue rationale, not just a confirmed search volume figure.

Specific next step: Take your current keyword list — however it was assembled — and apply Dimension 3 (conversion proximity) scores this week. Assign 1–5 to every keyword based on intent category. Sort by Dimension 3 score descending. If your top 10 by volume score predominantly 1–2 on conversion proximity, your content calendar is optimised for traffic, not revenue. Rebalance toward keywords scoring 4–5 on Dimension 3 before briefing the next production sprint. Complete this exercise before 30 April 2026.
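The check described in this exercise can be sketched as a short function. It assumes each keyword is recorded as a (keyword, volume, Dimension 3 score) tuple and reads "predominantly" as more than half of the top keywords by volume; both the function name and that threshold are assumptions.

```python
def traffic_biased(keywords: list[tuple[str, int, int]], top_n: int = 10) -> bool:
    """True if most of the top-N keywords by volume score 1-2 on Dimension 3.

    Each item is (keyword, monthly_volume, conversion_proximity_score).
    """
    top = sorted(keywords, key=lambda k: k[1], reverse=True)[:top_n]
    if not top:
        return False
    low = sum(1 for _, _, d3 in top if d3 <= 2)
    # Assumption: "predominantly" means more than half.
    return low > len(top) / 2


sample = [
    ("what is x", 3000, 1),
    ("x for beginners", 2000, 1),
    ("is x worth it", 1000, 2),
    ("x vs y", 500, 5),
]
print(traffic_biased(sample, top_n=4))
```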

For the full keyword research system this framework feeds into, the keyword research and semantic SEO guide covers how profitability scores integrate with the GSC-first workflow, entity mapping, and cluster architecture to produce a complete content planning process.

Citations

[1]. Yotpo — Long-Tail Keywords: The Ultimate Guide for 2026. https://www.yotpo.com/blog/long-tail-keywords-guide/

[2]. Ahrefs — Keyword Difficulty: How to Estimate Your Chances to Rank. https://ahrefs.com/blog/keyword-difficulty/

[3]. SEOmator — 30+ AI SEO Statistics for 2026. https://seomator.com/blog/ai-seo-statistics

[4]. SearchAtlas — Domain Authority vs Topical Authority: 2026 SEO Guide. https://searchatlas.com/blog/da-vs-ta-2026/

[5]. Surfer SEO — Ranking Factors in 2025: Insights from 1 Million SERPs. https://surferseo.com/blog/ranking-factors-study/

[6]. AirOps — Structured Content and ChatGPT Citation Rates, April 2026. https://www.position.digital/blog/ai-seo-statistics/

[7]. Semrush — Keyword Research Guide: How to Do Keyword Research for SEO. https://www.semrush.com/blog/keyword-research-guide/