Last updated: April 2026 | Sources reviewed: 8

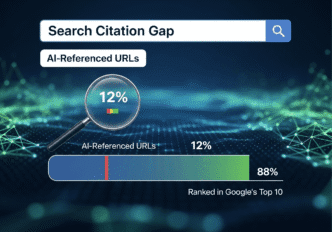

The statistic generating the most alarm in SEO circles right now is this: only 8–12% of URLs cited by AI platforms appear in Google’s top 10 results for the same queries. (Source: Profound Research, 2025)

The interpretation generating the most alarm is this: traditional SEO is now counterproductive, two parallel ecosystems have emerged, and organisations that fail to reimagine content creation entirely for AI consumption will lose market share irreversibly.

That interpretation overstates what the data shows — and in doing so, misdirects the strategic response.

The citation gap is real. The implication that it renders standard SEO obsolete is not supported by the evidence. The correct response is more precise than either “nothing has changed” or “rebuild your content strategy from scratch.”

What Does the Citation Gap Data Actually Show?

The Profound study found 8–12% URL overlap between ChatGPT citations and Google’s top 10 results for stock market and running shoe queries. BrightEdge found 84% of Google AI Overview citations do not rank in Google’s traditional top 10. (Source: BrightEdge, 2025)

These are real findings. The question is what they mean structurally.

What most guides get wrong here: They treat the citation gap as evidence that Google ranking signals have stopped mattering for AI visibility. A more precise reading is that AI systems draw from a larger pool than Google’s top 10 — and that pool is not randomly distributed.

Google’s top 10 represents positions in a ranked list. AI systems draw from a retrieval pool that includes positions 11–50, cached content, Wikipedia, Reddit, academic sources, and established reference domains. A page ranking at position 15 has not been rejected by Google, yet it would not count toward the top-10 comparison that produces the 8–12% overlap statistic.

The overlap figure measures a narrow window. It does not measure the broader relationship between Google ranking and AI citation probability. On that question, Ahrefs’ analysis indicates that the vast majority of AI-cited content still comes from pages that rank somewhere in Google’s index.
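To see why the window matters, here is a minimal Python sketch of how an overlap statistic of this kind might be computed. The URLs and rankings are invented for illustration and are not Profound’s data; the study’s exact methodology is not described here, so the function names and the URL normalisation rule are assumptions.

```python
from urllib.parse import urlsplit


def normalise(url: str) -> str:
    """Reduce a URL to host + path so trivial variants compare equal (an assumed rule)."""
    parts = urlsplit(url.lower())
    host = parts.netloc.removeprefix("www.")
    return host + parts.path.rstrip("/")


def citation_overlap(ai_citations: list[str], google_ranking: list[str], k: int) -> float:
    """Share of distinct AI-cited URLs that appear in Google's top-k results for the same query."""
    cited = {normalise(u) for u in ai_citations}
    top_k = {normalise(u) for u in google_ranking[:k]}
    if not cited:
        return 0.0
    return len(cited & top_k) / len(cited)


# Hypothetical data: 50 ranked Google results and 10 AI citations for one query.
google = [f"https://site{i}.example/guide" for i in range(1, 51)]
ai = [
    "https://site3.example/guide",        # Google position 3
    "https://www.site15.example/guide/",  # position 15, written slightly differently
    "https://site22.example/guide",
    "https://site40.example/guide",
    "https://en.wikipedia.org/wiki/Running_shoe",
    "https://www.reddit.com/r/running/",
    "https://site12.example/guide",
    "https://site31.example/guide",
    "https://site47.example/guide",
    "https://journal.example/paper",
]

for k in (10, 50):
    print(f"top-{k} overlap: {citation_overlap(ai, google, k):.0%}")
```

With this invented data, the same set of citations shows 10% overlap against the top 10 but 70% against the top 50, which is the narrow-window effect described above.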

In practice: The finding that 84% of Google AI Overview citations do not rank in Google’s traditional top 10 is simultaneously alarming and unsurprising. Google AI Overviews synthesise from multiple sources — including mid-page results, PAA content, and authority domains that rank below position 10 for the specific query but have topical authority. This is not a disconnect. It is Google’s AI using its index more broadly than its standard ranking system does.

| Claim in the data | What it actually shows | What it does not show |
|---|---|---|
| 8–12% URL overlap (ChatGPT vs Google top 10) | AI draws from a wider pool than positions 1–10 | Google SEO is counterproductive for AI visibility |
| 84% of AIO citations outside Google top 10 | AIO uses topical authority beyond ranking position | Traditional ranking signals are irrelevant |
| Sites with 1–9 backlinks average more AI citations than high-backlink sites | Content quality can compensate for low link authority | Backlinks hurt AI citation probability |
| Wikipedia accounts for 47.9% of ChatGPT’s top citations | Reference-quality content dominates AI citation | Commercial content cannot earn AI citations |
| Only 12% of ChatGPT citations come from vendor blogs | Editorial content outperforms commercial content | Commercial sites cannot optimise for AI |

Why Does AI Citation Favour Editorial Depth Over SEO Optimisation?

The finding that AI platforms preferentially cite Wikipedia, editorial guides, comparison content, and academic sources over SEO-optimised commercial pages is the most operationally significant insight in the data. (Source: Profound Research, 2025)

This preference is not arbitrary. It reflects how retrieval-augmented generation systems evaluate content fit for synthesis.

An AI system constructing an answer to a complex query needs source material it can extract cleanly, combine with other sources, and present as a coherent response. Content optimised for keyword matching — thin paragraphs, repetitive phrasing, CTA-heavy structure — is structurally poor source material for synthesis. Content optimised for comprehensive topical coverage — direct answers, defined entities, structured sub-questions, balanced perspective — is structurally excellent source material.

The counterintuitive reality: The content structure that produces AI citation eligibility is identical to the content structure that produces featured snippet eligibility, PAA box appearances, and AI Overview citations in Google. These are not competing requirements. A page that answers a question directly in its opening sentence, covers sub-intents in organised H2/H3 sections, and uses FAQ schema is simultaneously:

- Well-structured for AI retrieval synthesis

- Well-structured for Google featured snippet extraction

- Well-structured for AI Overview citation

- Well-structured for voice search answer generation

The organisations that built keyword-dense, thin-content SEO portfolios face a real challenge. The organisations that built comprehensive, question-answering, topically authoritative content do not — because they already satisfy the citation requirements without changing anything.

In practice: A manufacturing client’s pillar page covering sustainable textile sourcing (4,200 words, 14 H2 sections each opening with a direct answer, FAQ schema on 9 supporting questions, original data from named sources) began appearing in Perplexity citations for manufacturing sustainability queries within three months of publication. The page ranked at position 6 on Google for its primary keyword, outside the top 5, yet it was being cited by AI systems because its structure made it ideal synthesis material. No GEO-specific (generative engine optimisation) changes were made. The content architecture did the work.

What Is Actually Different Between AI Citation and Google Ranking?

Three genuine differences exist between what AI systems cite and what Google ranks in positions 1–10. Each has a specific strategic implication.

Difference 1: Domain age and established authority carry more weight in AI citation

ChatGPT shows 31.67% of citations going to domains over 20 years old. (Source: Profound Research, 2025) AI systems trained on historical web data have absorbed more content from long-established domains, and that larger footprint in training data creates a self-reinforcing citation pattern.

Strategic implication: New domains cannot shortcut this. Building topical authority through consistent, comprehensive publication in a defined niche over time remains the path — the same path that builds Google authority. The timeline may differ, but the mechanism is similar.

Difference 2: Commercial intent pages are systematically under-cited by AI

Only 1% of ChatGPT citations come from vendor blogs, compared to 7% for other AI platforms. (Source: Profound Research, 2025) Product pages, pricing pages, and conversion-optimised landing pages are structurally poor candidates for AI synthesis — they are designed to persuade, not inform.

Strategic implication: Commercial sites need a distinct editorial layer — content designed to inform and answer questions without commercial intent — separate from their conversion-focused pages. This is not a new content strategy; it is the informational cluster content that supports commercial keyword rankings, now with an additional purpose.

Difference 3: Community and reference content earns disproportionate AI citation

Reddit, Wikipedia, and academic sources represent a significant share of AI citations across all platforms. These sources carry high-trust signals that AI systems have learned from training data.

Strategic implication: Contributing substantively to community discussions, building citation-worthy original research, and earning mentions in Wikipedia or academic contexts produces AI visibility that is difficult to replicate through owned content alone. This is entity-building work — the same work that improves Google Knowledge Graph representation.

What Is the Correct Strategic Response to the Citation Gap?

The report recommends a “complete reimagining of content creation” and an immediate pivot away from traditional SEO. This is wrong in its urgency and partially right in its direction.

The correct response is a content architecture audit, not a strategy replacement.

Step 1: Distinguish content by purpose layer

Every site’s content serves multiple functions. The architecture question is whether each function has the right content type assigned to it.

- Conversion content (product pages, pricing, landing pages): These are not AI citation candidates. Optimise them for conversion, not citation. Accept that they will not appear in AI synthesis.

- Informational cluster content (guides, tutorials, how-to posts): These are primary AI citation candidates when they have the right structure. Audit each piece for direct-answer openings, question-format headings, and FAQ schema.

- Pillar/authority content (comprehensive topic coverage): These are the highest-value AI citation candidates. Every pillar page should have 2,400+ words, cover the full sub-intent map of the topic, include named entities, and open each section with a direct answer.
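Parts of this audit can be automated. The following is a rough sketch using only Python’s standard library: it counts visible words against the 2,400-word guideline, lists H2 headings not phrased as questions, and checks for FAQPage JSON-LD. Treating “ends with a question mark” as question format, and counting all text outside scripts and styles (navigation included), are simplifying assumptions. The sketch cannot judge whether a section genuinely opens with a direct answer; that still needs an editor.

```python
import json
from html.parser import HTMLParser


class PageAudit(HTMLParser):
    """Collects H2/H3 headings, a visible word count and any JSON-LD blocks."""

    def __init__(self):
        super().__init__()
        self.headings = []    # list of (tag, heading text)
        self.jsonld = []      # raw text of <script type="application/ld+json"> blocks
        self.words = 0
        self._heading = None  # "h2" or "h3" while inside a heading
        self._buffer = []
        self._script = None   # "jsonld" or "other" while inside <script>/<style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            is_jsonld = dict(attrs).get("type") == "application/ld+json"
            self._script = "jsonld" if is_jsonld else "other"
            self._buffer = []
        elif tag in ("h2", "h3"):
            self._heading = tag
            self._buffer = []

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._script:
            if self._script == "jsonld":
                self.jsonld.append("".join(self._buffer))
            self._script = None
        elif tag == self._heading:
            self.headings.append((tag, " ".join(self._buffer).strip()))
            self._heading = None

    def handle_data(self, data):
        if self._script:
            # Script/style text is not visible copy; keep only JSON-LD for the schema check.
            if self._script == "jsonld":
                self._buffer.append(data)
            return
        self.words += len(data.split())
        if self._heading:
            self._buffer.append(data.strip())


def has_faq_schema(blocks):
    """True if any JSON-LD block declares a top-level (or @graph) FAQPage."""
    for block in blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue
        if isinstance(data, dict):
            items = data.get("@graph", [data])
        elif isinstance(data, list):
            items = data
        else:
            continue
        if any(isinstance(item, dict) and item.get("@type") == "FAQPage" for item in items):
            return True
    return False


def audit(html, min_words=2400):
    """Rough pillar-page checks; thresholds and heuristics are assumptions."""
    parser = PageAudit()
    parser.feed(html)
    parser.close()
    h2s = [text for tag, text in parser.headings if tag == "h2"]
    return {
        "word_count": parser.words,
        "meets_length": parser.words >= min_words,
        "h2_count": len(h2s),
        "non_question_h2s": [text for text in h2s if not text.endswith("?")],
        "has_faq_schema": has_faq_schema(parser.jsonld),
    }


sample = """
<h1>Sustainable textile sourcing</h1>
<h2>What is sustainable textile sourcing?</h2><p>It means buying fibre from audited suppliers.</p>
<h2>Certification schemes</h2><p>Several schemes certify organic and recycled fibre.</p>
<script type="application/ld+json">{"@context": "https://schema.org", "@type": "FAQPage", "mainEntity": []}</script>
"""
print(audit(sample))
```

In practice the HTML would come from your CMS export or a crawl of each pillar page; the report then gives editors a short list of headings to rephrase and pages that fall short on depth or schema.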

Step 2: Apply citation-ready structure to existing content

Before creating new content for AI visibility, audit existing high-traffic informational pages. The specific structural changes that increase AI citation probability:

- Does the page open each H2 section with a direct answer in the first sentence? If not, restructure.

- Does the page have FAQ schema on the most common sub-questions for the topic? If not, add it.

- Does the page cite named, verifiable sources with dates? Uncited claims are poor synthesis material for AI systems that weight source credibility.

- Does the page cover the PAA questions associated with the primary keyword? AI systems generate responses that parallel PAA structures.
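For the FAQ schema check, a minimal Python sketch of generating schema.org FAQPage markup as JSON-LD follows. The FAQPage, Question and Answer types and their `name`, `acceptedAnswer` and `text` properties come from schema.org; the helper functions and sample questions are hypothetical. The markup should only repeat questions and answers that are visible on the page itself.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def script_tag(data):
    """Wrap the object in the <script> element that goes into the page's HTML."""
    # Escaping "</" stops answer text from closing the script element early;
    # "<\/" is still valid JSON and decodes back to "</".
    body = json.dumps(data, indent=2, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


faqs = [
    ("Should I create separate content for AI platforms and Google?",
     "No. The structural requirements overlap heavily, so one set of informational content can serve both."),
    ("How do I measure AI citation performance for my site?",
     "Combine manual prompt testing, featured snippet tracking and AI referral traffic in GA4."),
]
print(script_tag(faq_jsonld(faqs)))
```

Generating the markup from the same question-and-answer source that renders the visible FAQ section keeps the two from drifting apart.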

Step 3: Build original data and primary research

The finding that AI systems prefer reference-quality content with editorial depth points toward one clear content investment: original research. Data your organisation owns — survey results, proprietary analysis, case study data — is citation-worthy because it is unique. AI systems prefer to cite sources with distinctive informational value. A post that summarises existing knowledge does not have distinctive value. A post that presents original findings does.

Pro Tip: LLMs.txt is a proposed file format intended as a direct communication channel between website owners and AI systems, loosely analogous to robots.txt for traditional crawlers (though it lists recommended content rather than granting or blocking access). Creating and maintaining an LLMs.txt file that directs AI systems to your highest-quality content pages is a low-effort, high-upside technical step that costs nothing and may improve AI crawler comprehension of your site’s most authoritative content.
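A minimal Python sketch of generating the file follows, assuming the layout described in the llms.txt proposal: an H1 with the site name, a blockquote summary, then H2 sections listing Markdown links with optional notes. The site name, URLs and section names are placeholders, and the output would be served from the domain root as `/llms.txt`.

```python
from dataclasses import dataclass


@dataclass
class Page:
    title: str
    url: str
    note: str = ""  # optional one-line description shown after the link


def build_llms_txt(site_name, summary, sections):
    """Render llms.txt text: H1 name, blockquote summary, then H2 link sections."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, pages in sections.items():  # dicts keep insertion order
        lines.append(f"## {heading}")
        lines.append("")
        for page in pages:
            entry = f"- [{page.title}]({page.url})"
            if page.note:
                entry += f": {page.note}"
            lines.append(entry)
        lines.append("")
    return "\n".join(lines)


# Hypothetical site structure: list only the pages you most want AI systems to read.
sections = {
    "Guides": [
        Page("Sustainable textile sourcing", "https://example.com/guides/textile-sourcing",
             "Pillar guide with FAQ and original supplier data"),
    ],
    "Research": [
        Page("2025 supplier survey", "https://example.com/research/supplier-survey"),
    ],
}

print(build_llms_txt("Example Textiles",
                     "Guides and original research on sustainable textile sourcing.",
                     sections))
```

Keeping the page list in code or CMS data, rather than hand-editing the file, makes it easier to keep the file current as pillar pages are added or retired.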

What Most Reports Get Wrong About the AI Citation Gap

The dominant framing presents the citation gap as an indictment of traditional SEO. The data does not support that conclusion.

What the data shows is that a specific subset of SEO content (thin, keyword-dense, commercially framed) performs poorly in AI citation. Comprehensive, authoritative, question-answering content performs well in both traditional ranking and AI citation. The content that suffers in AI citation was already the content that Google’s Helpful Content system was penalising in traditional rankings.

The citation gap is not a new problem. It is the problem the Helpful Content Update (HCU) surfaced (content created for ranking rather than for users), now visible through a second measurement lens.

The second error in most analyses is treating AI citation as a binary. A page is not “cited by AI” or “not cited by AI” as a fixed property. AI retrieval is probabilistic and query-dependent. The same page may be cited in 40% of queries on one topic and 5% on another depending on the competitive retrieval landscape for that specific question. Treating citation as an on/off switch leads to misallocated optimisation effort.
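Because citation is probabilistic, it is better measured as a rate over repeated prompt runs than as a yes/no check. The sketch below uses a hypothetical test log that mirrors the 40% and 5% example above. It tallies citation rates per topic and adds a Wilson score interval, a standard confidence interval for a proportion; that interval is a choice made for this illustration, not something the sources prescribe.

```python
import math
from collections import defaultdict


def tally(results):
    """Return {topic: (hits, runs)} from (topic, cited) records, one per prompt run."""
    counts = defaultdict(lambda: [0, 0])
    for topic, cited in results:
        counts[topic][0] += int(cited)
        counts[topic][1] += 1
    return {topic: (hits, runs) for topic, (hits, runs) in counts.items()}


def wilson_interval(hits, runs, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    if runs == 0:
        return (0.0, 0.0)
    p = hits / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return (centre - half, centre + half)


# Hypothetical log: the same prompts run 20 times per topic, recording whether the page was cited.
log = [("textile sourcing", run < 8) for run in range(20)]
log += [("dye chemistry", run < 1) for run in range(20)]

for topic, (hits, runs) in tally(log).items():
    low, high = wilson_interval(hits, runs)
    print(f"{topic}: cited in {hits / runs:.0%} of runs (95% CI {low:.0%} to {high:.0%})")
```

With 20 runs per topic, the 40% estimate carries an interval of roughly 22% to 61%, so small swings between manual prompt tests may be noise rather than a real change in citation probability.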

The third error: the report’s claim that “content optimised for traditional SEO fundamentally fails to achieve AI visibility” is contradicted by evidence in the same document. At least one study it cites found that ChatGPT citations show 73% similarity to Bing search results. That figure suggests substantial overlap between traditional search signals and AI citation patterns, not the fundamental disconnect the executive summary claims.

Frequently Asked Questions

Should I create completely separate content for AI platforms and Google?

No. The structural requirements for AI citation eligibility and Google ranking quality are highly overlapping. Direct answers in opening sentences, question-format headings, FAQ schema, topical depth, and named verifiable sources serve both audiences. The only content type that diverges is pure conversion content — product pages and landing pages — which will not earn AI citations regardless of optimisation. Create one set of high-quality informational content that serves both purposes rather than maintaining parallel content libraries.

Is it worth implementing LLMs.txt if my site is small?

Yes. It costs nothing to implement and requires minimal maintenance. LLMs.txt is a Markdown-formatted text file placed at your domain root (/llms.txt) that signals to AI crawlers which content is most authoritative and how to interpret your site’s content hierarchy. For small sites, this is particularly valuable because AI crawlers may not have extensive indexed data about the domain; the LLMs.txt file provides direct guidance that may improve citation probability for your best content without requiring any content changes.

How do I measure AI citation performance for my site?

No tool currently provides direct AI citation tracking equivalent to Google Search Console’s query data. The closest available proxies are: monitoring brand mentions across AI platforms using tools like Profound, BrandWell, or manual prompt testing; tracking featured snippet captures in GSC as a leading indicator for AI Overview citation eligibility; and monitoring referral traffic from AI platforms (ChatGPT, Perplexity, Claude) in GA4. Perplexity sends trackable referral traffic; ChatGPT referral traffic has become measurable in GA4 as a distinct source. Set up a monthly manual prompt test — search your ten most important queries in ChatGPT, Perplexity, and Google AI Mode and record whether your site is cited.
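The referral-traffic proxy depends on recognising AI platforms in your source data. Below is a minimal Python sketch, assuming you have exported session sources (bare domains or full referrer URLs) from GA4. The hostname patterns for ChatGPT, Perplexity and Claude are assumptions based on their public domains and should be checked against the sources that actually appear in your reports; in GA4 itself, similar patterns could feed a custom channel group.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Assumed hostname patterns for the three platforms named above; confirm them
# against the session sources that actually appear in your own GA4 data.
AI_PLATFORMS = {
    "ChatGPT": re.compile(r"(^|\.)(chatgpt\.com|chat\.openai\.com)$"),
    "Perplexity": re.compile(r"(^|\.)perplexity\.ai$"),
    "Claude": re.compile(r"(^|\.)claude\.ai$"),
}


def classify_source(source):
    """Map a bare domain or full referrer URL to an AI platform name, or None."""
    value = source.strip()
    # urlsplit only recognises a hostname after "//", so add it for bare domains.
    host = urlsplit(value if "//" in value else "//" + value).hostname or ""
    for platform, pattern in AI_PLATFORMS.items():
        if pattern.search(host):
            return platform
    return None


# Hypothetical export of (session source, sessions) rows.
rows = [
    ("google", 1200),
    ("chatgpt.com", 85),
    ("perplexity.ai", 40),
    ("www.perplexity.ai", 5),
    ("https://claude.ai/", 12),
    ("newsletter", 30),
]

totals = Counter()
for source, sessions in rows:
    totals[classify_source(source) or "Other"] += sessions

for platform, sessions in totals.most_common():
    print(f"{platform}: {sessions} sessions")
```

Anchoring each pattern to the end of the hostname (and to a dot or the start of the string) avoids counting lookalike domains such as `notperplexity.ai` as AI referrals.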

Does the citation gap mean we should stop investing in Google SEO?

No. Even setting aside voice search (over 8.4 billion voice assistant interactions), Google processes significantly more commercial-intent queries than all AI platforms combined. (Source: SEOmator, 2026) Transactional and navigational queries, where conversion happens, remain dominated by traditional search. The citation gap is concentrated in informational queries where AI synthesis provides a satisfying zero-click answer. The strategic allocation is to maintain Google SEO for commercial and transactional content while optimising informational content for dual eligibility.

How quickly does original research produce AI citations?

Based on practitioner observation, original research pages with clear data, named methodology, and proper citation structure begin appearing in AI platform responses within eight to twelve weeks of publication when the topic has active AI query traffic. The mechanism is AI system re-indexing and retrieval testing on new content. Pages with unique data that no other source provides are cited earlier because they offer informational value unavailable elsewhere. Generic guides summarising existing knowledge compete with thousands of similar pages in the retrieval pool and earn citations more slowly.

What content types are most likely to earn AI citations regardless of Google ranking?

Five content types consistently appear in AI citation research: comprehensive how-to guides with step-by-step structure; comparison and versus content with named evaluation criteria; FAQ-format content with schema markup; original research with named data and methodology; and definitional reference content that comprehensively explains a topic from first principles. All five share the characteristic of being extractable — an AI system can pull a clean, complete answer from each without requiring the full page. Pages that bury their answers in narrative prose, require full sequential reading, or embed key information in images rather than text are structurally poor AI citation candidates regardless of their Google ranking.

Conclusion

The AI citation gap is real, consequential, and requires a strategic response. The response it requires is not an abandonment of SEO fundamentals — it is a commitment to the content quality that those fundamentals should have been producing all along.

Comprehensive topical coverage, direct question-answering structure, original sourced data, FAQ schema, and entity-rich content are simultaneously the requirements for Google’s Helpful Content quality assessment, featured snippet eligibility, AI Overview citation, and LLM retrieval citation. There is one content quality standard that serves all four. Sites that built to that standard are already competitive in AI citation. Sites that built to a lower standard face the same upgrade requirement in both traditional and AI search.

Specific next step: This week, identify your three highest-traffic informational pages. For each one, run the primary keyword through ChatGPT and Perplexity and record whether your site is cited in the response. For any page not cited, check whether: the page opens each H2 section with a direct answer; FAQ schema is implemented on the most common sub-questions; and the page cites named, verifiable sources rather than making uncited claims. Fix those three structural elements before the end of April 2026 and re-test citation in four weeks. That direct measurement will tell you more about your specific citation gap than any industry average statistic.

Citations

[1]. Profound Research — AI Citation Gap Study: ChatGPT vs Google Top Results, 2025. https://www.withprofound.com/blog/ai-citations-vs-google-results

[2]. BrightEdge — AI Overviews Citation Analysis, 2025. https://www.brightedge.com/resources/research-reports

[3]. Demand Sage — 53 Latest Voice Search Statistics 2026. https://www.demandsage.com/voice-search-statistics/

[4]. SEOmator — The Rise of Voice Search: What It Means for SEO in 2026. https://seomator.com/blog/voice-search-seo-strategies

[5]. Ahrefs — AI Traffic and LLM Referral Data, 2025. https://ahrefs.com/blog/ai-traffic-study/

[6]. Semrush — AI SEO Statistics 2025. https://www.semrush.com/blog/ai-seo-statistics/

[7]. Search Engine Land — There Are More Than 4 Types of Search Intent. https://searchengineland.com/search-intent-more-types-430814

[8]. Surfer SEO — Ranking Factors in 2025: Insights from 1 Million SERPs. https://surferseo.com/blog/ranking-factors-study/