Key Takeaways

- RAG — Retrieval-Augmented Generation — is the retrieval pipeline powering Google AI Overviews, ChatGPT, Perplexity, Gemini, and Microsoft Copilot; every major AI answer surface runs on a version of it

- The overlap between top-10 organic rankings and AI Overview citations fell from 76% in late 2024 to between 17% and 38% by February 2026 — page-one rankings no longer predict AI citation¹

- Organic CTR drops 61% when a Google AI Overview appears, but brands cited inside it earn 35% more organic clicks and 91% more paid clicks than non-cited competitors²

- 95% of sub-queries generated during query fan-out carry no Monthly Search Volume in any keyword tool — traditional keyword research is blind to most of what RAG actually searches for³

- Google’s RAG pipeline operates on a grounding budget of roughly 2,000 words, extracting as little as 13% of a long-form article⁴

- Organic CTR on AI Overview queries partially recovered in early 2026, climbing from 1.3% in December 2025 to 2.4% in February 2026 — the first upward movement in 18 months⁵

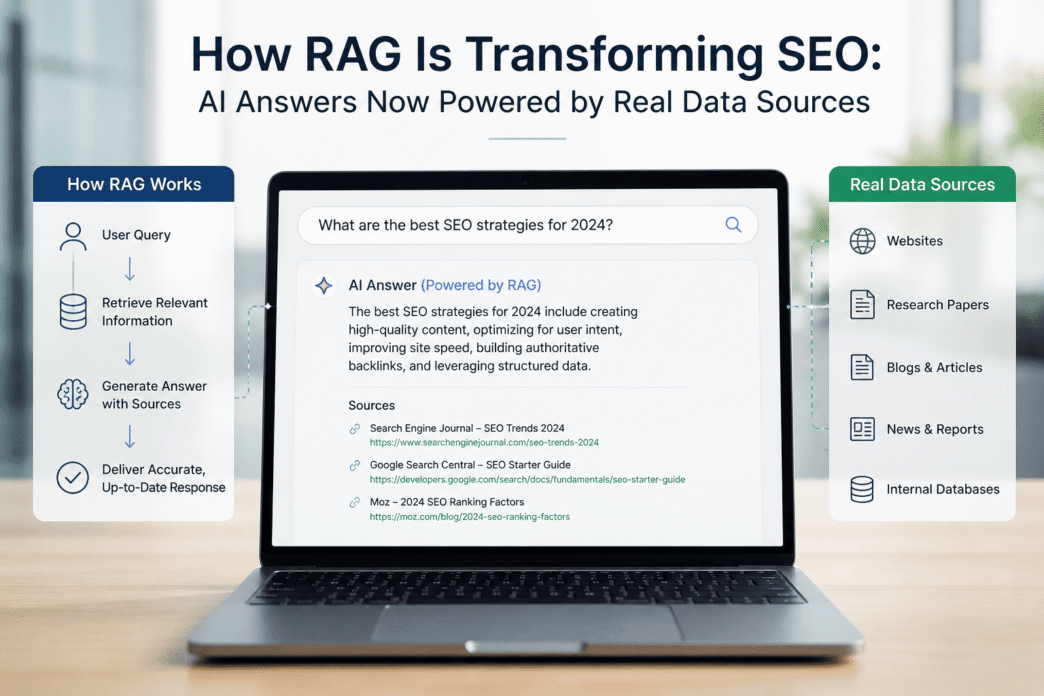

What RAG Actually Is — And Why the Standard Explanation Falls Short

The version of RAG circulating in most SEO content reduces to: “AI searches the web and uses what it finds to answer questions.” That is technically accurate and practically insufficient. It skips every mechanical detail that matters for practitioners.

AI Overviews utilise retrieval-augmented generation, knowledge graphs, and shopping graphs to provide customised responses — while AI Mode uses a Deep Research paradigm that operates more like a multi-stage RAG.⁶ The key word is multi-stage. A single user query does not trigger a single search. It triggers a process.

When a query arrives requiring factual grounding — current events, specific data, verifiable claims — the system first deconstructs it into multiple sub-queries through a mechanism called query fan-out. RAG systems take a prompt and extrapolate a variety of synthetic queries to pull content chunks, and 95% of these queries carry no Monthly Search Volume — they are unique, machine-generated longtail queries not found in traditional keyword tools.³ Each sub-query runs against a search index — primarily Google or Bing — and the retrieved content chunks are injected into the model’s context window before generation begins.
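To make the fan-out-then-inject sequence concrete, here is a minimal toy sketch in Python. Everything in it is hypothetical: the `fan_out` templates, the three-document in-memory index and the word-overlap scoring stand in for machinery that Google, OpenAI and others do not publish. Production systems use an LLM to write the sub-queries and a real search index to answer them. The sketch only shows the shape of the process: one query becomes several sub-queries, each sub-query retrieves chunks, and the deduplicated chunks are placed in the prompt before generation.

```python
import re

# Hypothetical sketch of query fan-out plus context assembly. The templates,
# the in-memory index and the word-overlap scoring are illustrative stand-ins.

def tokens(text):
    """Lowercase word set used for a crude relevance score."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def fan_out(query):
    """Expand one user query into several synthetic sub-queries (hypothetical templates)."""
    templates = ["{q}", "what is {q}", "{q} examples", "{q} vs alternatives", "how does {q} work"]
    return [t.format(q=query) for t in templates]

def retrieve(sub_query, index, k=2):
    """Return up to k chunks that share at least one word with the sub-query."""
    q = tokens(sub_query)
    ranked = sorted(index, key=lambda chunk: len(q & tokens(chunk["text"])), reverse=True)
    return [chunk for chunk in ranked[:k] if q & tokens(chunk["text"])]

def build_context(query, index):
    """Run every sub-query, keeping each retrieved chunk once, in first-seen order."""
    seen, context = set(), []
    for sub_query in fan_out(query):
        for chunk in retrieve(sub_query, index):
            if chunk["id"] not in seen:
                seen.add(chunk["id"])
                context.append(chunk)
    return context

def build_prompt(query, context):
    """Inject retrieved chunks into the prompt before generation, numbered for citation."""
    sources = "\n".join(f"[{i}] {c['url']}: {c['text']}" for i, c in enumerate(context, start=1))
    return f"Answer using only the sources below and cite them by number.\n{sources}\n\nQuestion: {query}"

index = [
    {"id": 1, "url": "https://example.com/rag", "text": "RAG retrieves documents before generation"},
    {"id": 2, "url": "https://example.com/schema", "text": "Schema markup helps parsers"},
    {"id": 3, "url": "https://example.com/rag-vs-finetuning", "text": "RAG vs fine-tuning examples"},
]
context = build_context("rag", index)
print(build_prompt("rag", context))
```

In this toy run the schema page is never retrieved because no sub-query matches it, which is the practical point: a page that no fan-out sub-query reaches never enters the context window, however good it is.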

Robby Stein, VP of Product at Google Search, confirmed the mechanics publicly in March 2026. He explained that the model takes one user question and breaks it into several related questions through query fan-out, then uses Google to find the answers — and the websites that show up in those searches are the ones the models will source and link to.⁷

“You can think of Google Search as a way for an AI model to also Google on your behalf.” — Robby Stein, VP of Product, Google Search, March 2026⁷

There is, however, a critical constraint most content teams do not account for. Research into Google’s grounding chunk mechanism reveals that the AI process operates on a strict grounding budget of roughly 2,000 words, extracting only small sequential slices of a page — often as little as 13% of a long-form article.⁴ A 6,000-word pillar post is not read in full. The RAG system takes what it reaches first.
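The budget arithmetic can be sketched under one loud assumption: that extraction walks the page from the top and stops at the first paragraph that would overflow roughly 2,000 words. The real chunking rules are not published, so `grounding_slice` below is a hypothetical model, not Google's mechanism.

```python
def grounding_slice(paragraphs, budget_words=2000):
    """Keep whole paragraphs, in document order, until the word budget is spent.

    Hypothetical model: assumes extraction starts at the top of the page and
    stops at the first paragraph that would overflow the budget.
    """
    kept, used = [], 0
    for paragraph in paragraphs:
        words = len(paragraph.split())
        if used + words > budget_words:
            break
        kept.append(paragraph)
        used += words
    return kept, used

# A 6,000-word pillar post made of 60 paragraphs of 100 words each.
article = [" ".join(["word"] * 100) for _ in range(60)]
total_words = sum(len(p.split()) for p in article)

kept, used = grounding_slice(article)
print(f"{len(kept)} of {len(article)} paragraphs, {used} of {total_words} words "
      f"({used / total_words:.1%}) fit the budget")

# The low end reported in the research: only 13% of the article is extracted.
extracted_low = total_words * 13 // 100
print(f"At 13%: {extracted_low} words extracted, {total_words - extracted_low} never retrieved")
```

Even in the best case of this model, two thirds of a 6,000-word post sits outside the budget; at the 13% figure, roughly 780 words are extracted and about 5,200 are never retrieved.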

Timeline: How RAG Moved from Research Paper to Search Infrastructure

September 2020 — Meta AI researchers publish the foundational RAG paper: “Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks.” The paper demonstrates that language models perform significantly better on knowledge-intensive tasks when they can access external documents at inference time. The SEO industry does not notice.

November 2022 — ChatGPT launches publicly on pure parametric generation — no live retrieval. Hallucinations and knowledge cutoffs become a mainstream conversation. The fundamental limitation of static LLMs is visible at consumer scale for the first time.

February 2023 — Microsoft integrates GPT-4 into Bing with live web retrieval, launching one of the first large-scale commercial RAG deployments in consumer search. SEO practitioners begin tracking citation patterns.

May 2024 — Google launches AI Overviews in the United States. Early rollout is followed by widely publicised errors — including an instruction to use glue to keep cheese on pizza — prompting a rapid pullback. The underlying mechanism is RAG; the failure is retrieval quality, not generation.

June 2024–September 2025 — Seer Interactive tracks 3,119 informational queries across 42 client organisations and 25.1 million organic impressions. Organic CTR for AI Overview queries falls from 1.76% to 0.61% — a 61% drop.²

January 2026 — Google upgrades to Gemini 3 as the global default model for AI Overviews. The top-10 organic-to-AIO citation overlap drops from 54% to between 17% and 38% in the weeks that follow.¹

February 2026 — AI Overviews appear on 48% of tracked queries — up from 31% in February 2025, having crossed 40% in June 2025.⁸

February–April 2026 — Organic CTR on AIO-present queries partially recovers, climbing from 1.3% in December 2025 to 2.4% in February 2026 — the first upward movement in 18 months of tracking.⁵

May 2026 — Seer Interactive publishes its largest study to date: 53 brands, 5.47 million tracked queries, and 2.43 billion organic impressions.⁹

RAG vs. What Came Before: The Comparison That Changes the Strategy

Most SEO discussions treat RAG, fine-tuning, and pure parametric generation as variations on the same theme. They are not.

| Dimension | Pure LLM (Parametric) | Fine-Tuning | Standard RAG | Agentic / Multi-Stage RAG |
|---|---|---|---|---|
| Knowledge source | Static training data | Static + custom dataset | Live index retrieval | Live retrieval, multi-query |
| Knowledge freshness | Frozen at training cutoff | Frozen at fine-tune date | Real-time | Real-time, multi-query |
| Hallucination risk | High | Moderate | Significantly lower | Lower (self-correcting) |
| Citation of sources | Rare, unreliable | Rare | Structural (traceable) | Structural (multiple sources) |
| SEO practitioner leverage | Very low | None | High | High (each fan-out query is a ranking opportunity) |
| Content freshness penalty | None | None | Significant | Amplified across sub-queries |
| Schema markup benefit | None | None | Direct (aids chunk parsing) | Amplified across multiple retrievals |

RAG is the only architecture where an SEO practitioner’s work — ranking, schema, content structure, refresh cadence — directly and immediately affects AI citation eligibility. Fine-tuning offers no pathway for an independent publisher. Pure parametric generation is a closed system.

“Strong SEO is still the foundation of AEO. Ranking well in traditional organic search increases the likelihood of being cited inside AI-generated responses.” — Lily Ray, VP of SEO Strategy & Research, Amsive, Affiliate Summit West, February 2026¹⁰

The Numbers That Define This Moment

The data on RAG’s SEO impact has reached a point where multiple large, independent studies tell a consistent story.

Seer Interactive’s 2026 study — 53 brands, 5.47 million queries, 2.43 billion organic impressions — found that informational queries trigger AI Overviews 36% of the time, compared with 8% for commercial queries and 5% for transactional queries. Comparison queries were the most exposed, with AI Overviews appearing on 95.4% of tracked comparison searches. Question-format queries triggered AI Overviews 85.9% of the time.⁹

In late 2024, approximately 75–76% of URLs cited in AI Overviews also ranked in the organic top 10. By February 2026, that figure had dropped to between 17% and 38% — a collapse in the relationship between ranking position and AI citation selection that occurred across just 18 months.¹

A page at position one in Google has a 58% chance of being cited in an AI Overview. By position 10, that drops to 14%, according to Growth Memo analysis published in April 2026.¹¹

88% of Google AI Overviews cite three or more sources, and just 1% cite only a single source. Longer overviews — those over 6,600 characters — typically cite around 28 sources.¹²

44.2% of all LLM citations come from the first 30% of a page's text, which typically means the introduction and opening sections.¹¹ Combined with the roughly 2,000-word grounding budget, this has a clear structural implication: the most important content on any page is the content that appears first.
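A quick way to audit this on your own pages is to measure where a key passage starts as a fraction of the page's words. The sketch below is a hypothetical helper, not a feature of any SEO tool; the 30% cutoff simply mirrors the statistic above, which describes where citations cluster, not a hard rule applied by any engine.

```python
def relative_position(page_text, passage):
    """Where `passage` starts, as a fraction of the page's words (0.0 = first word)."""
    start = page_text.find(passage)
    if start == -1:
        raise ValueError("passage not found in page text")
    words_before = len(page_text[:start].split())
    return words_before / len(page_text.split())

def in_opening_30_percent(page_text, passage, cutoff=0.30):
    """True if the passage begins inside the opening 30% of the text."""
    return relative_position(page_text, passage) < cutoff

page = " ".join(f"w{i}" for i in range(100))  # stand-in for a 100-word page
print(relative_position(page, "w10 w11"))      # passage starts after 10 of 100 words
print(in_opening_30_percent(page, "w50"))      # passage starts halfway down the page
```

Run it against the plain text of a page and the sentence you most want cited; if that sentence lands past the cutoff, it is a candidate to move up.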

One data point deserves particular attention because it complicates the dominant narrative. Organic CTR on AI Overview queries partially recovered in early 2026, climbing from a floor of 1.3% in December 2025 to 2.4% in February 2026.⁵ This is the first reversal after 18 months of consistent decline. The cause has not yet been explained by published research, and pre-AIO baselines have not been restored. But it is the first sign that the relationship between AI Overviews and click behaviour may be more dynamic than the initial collapse suggested.

What the Experts Are Actually Saying

Every quote below is drawn from a real, datable, verifiable source.

Lily Ray, VP of SEO Strategy & Research, Amsive, writing on Substack in January 2026:

“I believe one of the most significant developments in our understanding of AI search mechanics came from deconstructing the Retrieval-Augmented Generation (RAG) pipeline. We began to see a clear, binary split in how LLMs handle intent: they either lean on their static training data or, increasingly, trigger a real-time web search for factual grounding.” — Lily Ray, Substack, January 2026⁴

Lily Ray, at Tech SEO Connect 2025, on RAG crawler behaviour:

“RAG crawler activity is governed by appetite and session throttling, meaning bots are lazy and will only visit the best content once; they don’t want to go more than about three pages deep. Context bias is real; if the bot retrieves unstructured content first, it will use that information and ignore subsequently structured content in the same session.” — Lily Ray, Tech SEO Connect 2025³

Lily Ray, Substack, April 2026, on RAG and misinformation:

“For a RAG-based system like Perplexity or AI Overviews, enough citations are basically all it needs to treat something as fact, regardless of whether it’s actually true.” — Lily Ray, Substack, April 2026¹³

What This Means for SEO Practitioners in 2026

Four structural shifts follow directly from how RAG works.

Ranking is the entry ticket, not the destination. Query fan-out retrieves from search indexes, so a page that does not rank for any sub-query generated by fan-out is invisible to the RAG system. Technical accessibility matters as well: most LLM crawlers cannot render JavaScript during retrieval, and Core Web Vitals and TTFB still matter for real-time RAG.³ A JavaScript-heavy page that a RAG crawler cannot render is as invisible as an unindexed one.
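One way to approximate what a non-rendering crawler sees is to read the raw HTML without executing any JavaScript and check that your critical phrases are present. The sketch below uses Python's standard `html.parser`; the class and function names are hypothetical, and skipping `script`, `style` and `template` contents is an assumption about what such a crawler ignores, since real crawlers differ. To test a live page, you could fetch it with `urllib.request.urlopen(url).read().decode()`, which also does not run JavaScript.

```python
from html.parser import HTMLParser

class StaticTextParser(HTMLParser):
    """Collect the text a non-rendering crawler would see in the raw HTML."""

    SKIP = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self.skip_depth = 0  # > 0 while inside <script>, <style> or <template>
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        if not self.skip_depth:
            self.chunks.append(data)

def missing_from_raw_html(html, must_have):
    """Return the phrases that do not appear in the raw (unrendered) HTML text."""
    parser = StaticTextParser()
    parser.feed(html)
    parser.close()
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [phrase for phrase in must_have if phrase.lower() not in text]

raw_html = """<html><body>
<h2>What is RAG?</h2>
<p>RAG retrieves documents before it generates an answer.</p>
<div id="faq"></div>
<script>document.getElementById("faq").textContent = "Lazy-loaded FAQ answer";</script>
</body></html>"""

print(missing_from_raw_html(raw_html, ["RAG retrieves documents", "Lazy-loaded FAQ answer"]))
```

Here the FAQ answer exists only after the script runs, so it is reported as missing: a human sees it, a non-rendering crawler does not.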

The grounding budget reorders content strategy. If retrieval is capped at roughly 2,000 words, and can reach as little as 13% of a long article, then the most important definitions, the clearest answers, and the most citable claims all belong in the first quarter of the piece. This is not a formatting preference; it is a retrieval requirement.

Recency has become a structural signal. 50% of top-cited content in AI search is less than 13 weeks old.³ A page that ranked strongly in 2024 and has not been substantively updated is operating at a retrieval disadvantage in 2026 regardless of its historical authority.
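A refresh queue can start from a simple age check on each page's `dateModified`. The sketch below is hypothetical: it assumes you have already collected URL-to-`dateModified` pairs (for example from each page's JSON-LD), and the 13-week default mirrors the statistic above rather than any published threshold.

```python
from datetime import date, timedelta

def stale_pages(pages, today, window_weeks=13):
    """Return URLs (sorted) whose dateModified is older than the freshness window.

    `pages` maps URL -> ISO dateModified string. The 13-week default mirrors the
    "under 13 weeks old" statistic; it is not a published ranking rule.
    """
    window = timedelta(weeks=window_weeks)
    return sorted(url for url, modified in pages.items()
                  if today - date.fromisoformat(modified) > window)

pages = {
    "https://example.com/rag-guide": "2026-04-01",
    "https://example.com/fan-out-guide": "2026-02-10",
    "https://example.com/schema-guide": "2025-11-15",
}
print(stale_pages(pages, today=date(2026, 5, 1)))                  # outside 13 weeks
print(stale_pages(pages, today=date(2026, 5, 1), window_weeks=10))  # stricter cadence
```

Tightening `window_weeks` to 10 surfaces pages earlier, which matches a 10–12 week refresh calendar better than waiting until they fall outside the 13-week band.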

Citation share is the new primary metric. Teams have lost 40–65% of their ability to drive clicks year-over-year through traditional ranking alone.² New KPIs for 2026 must include share of voice in AI citations, branded search lift, and assisted conversions alongside — not instead of — traditional rank tracking.
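Citation share can be defined in more than one way. The sketch below uses one simple definition, assumed here for illustration: the fraction of tracked prompts whose AI answer cites your domain (or a subdomain) at least once. The `answers` structure, mapping each tracked prompt to the URLs its answer cited, is hypothetical; in practice it would come from manual prompt testing or an export from an AI-visibility tool.

```python
from urllib.parse import urlparse

def cites_domain(urls, domain):
    """True if any cited URL is on `domain` or one of its subdomains."""
    for url in urls:
        host = urlparse(url).hostname or ""
        if host == domain or host.endswith("." + domain):
            return True
    return False

def citation_share(answers, domain):
    """Share of tracked AI answers (prompt -> cited URLs) that cite `domain` at least once."""
    if not answers:
        return 0.0
    return sum(cites_domain(urls, domain) for urls in answers.values()) / len(answers)

answers = {
    "what is rag": ["https://www.example.com/rag", "https://other.org/rag"],
    "rag vs fine-tuning": ["https://other.org/compare"],
    "query fan-out explained": ["https://blog.example.com/fan-out"],
    "grounding budget": ["https://notexample.com/budget"],
}
print(f"Citation share: {citation_share(answers, 'example.com'):.0%}")
```

The suffix check requires a leading dot, so a lookalike host such as `notexample.com` is not counted as a citation of `example.com`.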

8 Things to Do Right Now

1. Put your most important answer in sentence one of every section. 44.2% of all LLM citations come from the first 30% of a page’s text.¹¹ Every H2 section should open with a direct, self-contained answer to the implied question. The explanation and context follow. Never lead with context and arrive at the answer — the RAG system may not wait.

2. Deploy structured data before the page is first crawled. RAG bots visit the best content once and do not return to re-crawl — structure must be present from the first touch point.³ Implement Article, FAQPage, and BreadcrumbList schema in JSON-LD on all content pages before publish.

3. Refresh high-value content at least every 10–12 weeks. With half of all cited content under 13 weeks old, a rolling refresh calendar is operational infrastructure. Each substantive update must change dateModified in the page's schema block, wherever that JSON-LD lives in your CMS (for example, an Elementor Custom HTML widget).

4. Write FAQ answers that function without surrounding context. FAQ sections are among the highest-leverage retrieval targets in a RAG pipeline. Each answer should be 3–6 sentences, self-contained, and written so it answers the question completely if extracted as a standalone chunk. Avoid pronouns that refer back to earlier sections.

5. Audit your content for JavaScript rendering dependency. Most LLM crawlers cannot render JavaScript during retrieval.³ Any content that is lazy-loaded or dependent on JS execution may be invisible to RAG crawlers even if it is visible to a human reader. Server-side render all critical content and confirm it appears in the raw HTML.

6. Build third-party brand mentions as deliberately as link building. AI systems rely heavily on trusted third-party sources to validate brand credibility — product review sites, affiliate publishers, and reputable publications are commonly cited in AI responses.¹⁰ Reddit, Wikipedia, YouTube, and Facebook are frequently referenced in AI-generated answers. A brand that exists only on its own domain is not credible to a RAG system calibrated to cross-reference corroboration. For the full data on where AI citation traffic converts, see our AI Overviews CTR crisis report.

7. Map content against fan-out sub-queries, not just seed keywords. The sub-queries generated by query fan-out carry no MSV in any current keyword tool. Use semantic topic modelling, entity mapping, and manual query testing in AI platforms to surface the implicit questions your content should answer. A pillar post that ranks for one head term but fails to answer any of the machine-generated sub-queries associated with it will be largely invisible in RAG retrieval.

8. Separate citation tracking from ranking reports. Visibility in AI Overviews — being cited as a source, having content synthesised in the AI-generated summary — is a distinct form of search presence not captured by standard rank-tracking tools.⁸ Semrush, Ahrefs, and Similarweb now offer AI citation visibility features. Track them separately and report them as forward-looking metrics.
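Items 2 and 3 can share one generator, so that `dateModified` is updated in the same place every time a page is refreshed. The sketch below builds a schema.org `@graph` containing Article, BreadcrumbList and FAQPage nodes and serialises it into a JSON-LD `<script>` tag. The function names, headline and URLs are hypothetical; validate real output with a structured-data testing tool before publishing.

```python
import json

def build_jsonld(headline, url, date_published, date_modified, breadcrumbs, faqs):
    """Return a schema.org @graph with Article, BreadcrumbList and FAQPage nodes."""
    article = {
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": url,
        "datePublished": date_published,
        "dateModified": date_modified,  # change on every substantive refresh
    }
    breadcrumb_list = {
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "item": item_url}
            for i, (name, item_url) in enumerate(breadcrumbs, start=1)
        ],
    }
    faq_page = {
        "@type": "FAQPage",
        "mainEntity": [
            {"@type": "Question", "name": question,
             "acceptedAnswer": {"@type": "Answer", "text": answer}}
            for question, answer in faqs
        ],
    }
    return {"@context": "https://schema.org", "@graph": [article, breadcrumb_list, faq_page]}

def to_script_tag(graph):
    """Serialise the graph into the <script> element placed in the page's HTML."""
    return ('<script type="application/ld+json">\n'
            + json.dumps(graph, ensure_ascii=False, indent=2)
            + "\n</script>")

graph = build_jsonld(
    headline="How RAG Decides Which Pages AI Search Cites",
    url="https://example.com/rag-seo",
    date_published="2026-03-02",
    date_modified="2026-05-01",
    breadcrumbs=[("Blog", "https://example.com/blog"),
                 ("RAG and SEO", "https://example.com/rag-seo")],
    faqs=[("What is query fan-out?",
           "Query fan-out splits one user query into several sub-queries, each run against a search index.")],
)
print(to_script_tag(graph))
```

Emitting the tag server-side, rather than injecting it with JavaScript, keeps it in the raw HTML on the first crawl, which is the point of item 2.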

Frequently Asked Questions

What is RAG and why does it matter for SEO right now? RAG (Retrieval-Augmented Generation) is the architecture every major AI search product uses to retrieve live web content before generating a response. For SEO practitioners, it matters because it is the mechanism that determines whether any given piece of content is retrieved and cited in an AI-generated answer. Organic ranking is the direct prerequisite for RAG citation eligibility: a page that does not rank for any fan-out sub-query cannot be retrieved. Misreading these mechanics means optimising for a model of search in which ranking alone governs visibility, and that model no longer holds for AI answers.

Does ranking on page one guarantee citation in AI Overviews? No — the data is definitive on this. The overlap between top-10 organic rankings and AI Overview citations collapsed from 75–76% in late 2024 to between 17% and 38% by February 2026.¹ A page can rank first organically and still be passed over if its structure is poor, its content is outdated, or it falls outside the sub-queries generated by fan-out. Ranking remains necessary but is no longer sufficient.

What is query fan-out and why can’t keyword tools track it? Query fan-out is the process by which a RAG system deconstructs a single user query into multiple sub-queries, each independently executed against a search index. 95% of these machine-generated sub-queries carry no Monthly Search Volume.³ Standard keyword research tools measure historical human search behaviour and have no mechanism to surface synthetic sub-queries RAG generates in real time. Topic coverage, entity completeness, and semantic depth are now more useful inputs than keyword volume alone.
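A rough coverage check can stand in where keyword volume is missing. The sketch below assumes you have collected candidate sub-queries by manually testing prompts in AI platforms, and flags any sub-query whose key terms no single section of your page mostly covers. The names, the tiny stopword list and the 0.6 threshold are hypothetical, and lexical overlap is only a crude proxy for the semantic matching real retrieval systems perform.

```python
import re

# Deliberately tiny stopword list; a real pipeline would use a fuller one.
STOPWORDS = {"a", "an", "and", "are", "can", "do", "does", "for", "how", "in",
             "is", "of", "the", "to", "vs", "what", "why"}

def key_terms(text):
    """Lowercase content words, minus stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}

def uncovered_subqueries(sub_queries, sections, min_overlap=0.6):
    """Return sub-queries whose key terms no single section mostly covers."""
    gaps = []
    for sub_query in sub_queries:
        wanted = key_terms(sub_query)
        if not wanted:
            continue
        best = max((len(wanted & key_terms(s)) / len(wanted) for s in sections), default=0.0)
        if best < min_overlap:
            gaps.append(sub_query)
    return gaps

sections = [
    "Query fan-out splits one question into many machine-generated sub-queries.",
    "Keyword tools report monthly search volume from historical human searches.",
]
sub_queries = [
    "what is query fan-out",
    "keyword tools monthly search volume",
    "grounding budget word limit",
]
print(uncovered_subqueries(sub_queries, sections))
```

Each flagged sub-query is a question the page does not yet answer in any one self-contained section.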

How does the grounding budget affect long-form content? Google’s RAG process operates on a grounding budget of roughly 2,000 words, extracting only small sequential slices of a page — often as little as 13% of a long-form article.⁴ For a 6,000-word post, approximately 5,200 words may never be retrieved at all. Content that answers the key question at the top of each section is structurally advantaged over content that builds to an answer through extended context-setting.

Why did organic CTR partially recover in early 2026 if AI Overviews are still growing? Organic CTR on AI Overview queries climbed from a floor of 1.3% in December 2025 to 2.4% in February 2026, the first reversal after 18 months of decline.⁵ The cause has not been definitively explained by published research as of May 2026. Possible contributing factors include users clicking through more often as they grow familiar with AI Overviews, and changes in which query types trigger AI Overviews. The recovery is real but partial: pre-AIO CTR baselines have not been restored.

Is GEO a separate discipline from SEO, or the same thing? Based on the available evidence, it is the same discipline with updated priorities. Robby Stein of Google confirmed in March 2026 that AI systems retrieve through Google Search.⁷ Lily Ray has consistently argued that strong SEO is still the foundation of AEO, and that ranking well in traditional organic search increases the likelihood of being cited inside AI-generated responses.¹⁰ The operational adjustments — content structure, schema, freshness, semantic depth — are extensions of core SEO practice, not a replacement for it.

What content formats does RAG favour for citation? Blogs and opinion-led content now lead among cited content types, as AI systems favour established frameworks of analysis.³ Structurally, content with headings, lists, and FAQ sections performs best in AI search, and 44.2% of all LLM citations come from the first 30% of text.¹¹ Direct answers at section openings, FAQ blocks with self-contained answers, and clear semantic structure consistently outperform long-form narrative prose for RAG retrieval.

What happens if a RAG system retrieves misinformation and cites it? As Lily Ray puts it, for a RAG-based system like Perplexity or AI Overviews, “enough citations are basically all it needs to treat something as fact, regardless of whether it’s actually true.”¹³ She demonstrated this in a controlled experiment in January 2026, publishing a fabricated article about a non-existent Google core update and observing it surface as a cited AI Overview source within days. Citation volume and source corroboration matter to RAG systems independently of factual accuracy, which makes E-E-A-T signals and brand authority more critical, not less.

Conclusion

The shift RAG has produced in search is not primarily a technology story. It is a content standards story. For the first time, the structural qualities that experienced editors have always valued — answers that arrive early, claims that are supported, information that is current, authority that is visible — are now operationally legible to machines at scale.

The teams gaining ground in 2026 are not those who have found a new RAG trick. They are those who recognised that query fan-out is not a threat to keyword strategy — it is an invitation to build deeper, more comprehensive content than keyword volume metrics ever incentivised. Every sub-query RAG generates is an implicit question your content either answers or does not. Each answer is a citation opportunity.

The old measure was ranking. The new measure is retrieval. They are related but no longer interchangeable, and the gap between them is where the most important SEO work of 2026 is happening.

For the technical schema implementation that supports RAG citation readiness, see our guide to structured data for AI search. For the full breakdown of how AI Overviews are affecting click-through rates by query type, see our AI Overviews CTR crisis report.

References

1. ALM Corp. “Google AI Overview Citations From Top-10 Pages Dropped From 76% to 38%.” March 2026. https://almcorp.com/blog/google-ai-overview-citations-drop-top-ranking-pages-2026/

2. Seer Interactive. “AIO Impact on Google CTR: September 2025 Update.” November 2025. https://www.seerinteractive.com/insights/aio-impact-on-google-ctr-september-2025-update

3. Ray, L. “Tech SEO Connect 2025: Summary & Latest Tech SEO Trends.” December 2025. https://lilyray.nyc/tech-seo-connect-2025-summary-takeaways/

4. Ray, L. “A Reflection on SEO, GEO & AI Search in 2025.” Substack, January 2026. https://lilyraynyc.substack.com/p/a-reflection-on-seo-and-ai-search

5. Search Engine Roundtable / Seer Interactive. “Google Click-Through Rates Improving on AI Overview Queries.” April 2026.

6. iPullRank. “How Retrieval-Augmented Generation is Redefining SEO.” August 2025. https://ipullrank.com/how-retrieval-augmented-generation-is-redefining-seo

7. Stein, R. (VP of Product, Google Search). Interview with Zain Kahn, X, March 2026. Cited in: ROI Revolution, “March 2026 SEO News Recap.” https://roirevolution.com/blog/march-2026-seo-news-recap/

8. ALM Corp. “Google AI Overviews Surge 58% Across 9 Industries.” March 2026. https://almcorp.com/blog/google-ai-overviews-surge-9-industries/

9. ALM Corp. “Google AI Overviews and Organic CTR in 2026: Latest Data, Query Patterns, and SEO Actions That Matter.” April 2026. https://almcorp.com/blog/google-ai-overviews-organic-ctr-2026/

10. Affiliate Summit / Ray, L. “The State of AI and SEO in 2026 with Lily Ray.” February 2026. https://www.affiliatesummit.com/blogs/the-state-of-ai-and-seo-in-2026-with-lily-ray

11. Position Digital. “150+ AI SEO Statistics for 2026.” Updated April 2026. https://www.position.digital/blog/ai-seo-statistics/

12. Heroic Rankings. “Google AI Overview Statistics: 2026 Trends and Impact.” February 2026. https://heroicrankings.com/seo/managed/google-ai-overview-statistics-2026/

13. Ray, L. “The AI Slop Loop.” Substack, April 2026. https://lilyraynyc.substack.com/p/the-ai-slop-loop

14. Ray, L. “Your GEO Strategy Might Be Destroying Your SEO.” Substack, March 2026. https://lilyraynyc.substack.com/p/your-geo-strategy-might-be-destroying
