Thousands of programmatic pages, live within weeks, ranking within months. That’s the promise. Here’s what the guides don’t tell you: Google’s Helpful Content System now identifies programmatic content not by volume — but by differentiation failure. Sites that scaled first and optimised later are paying for it in 2026 with mass deindexation events and traffic drops that recovery audits struggle to explain.

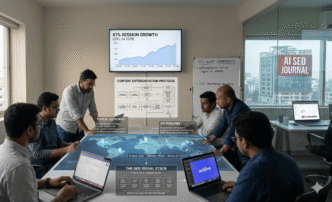

In-house SEO teams working with a home décor e-commerce client saw the opposite result. After they published 340 location-based landing pages from an Airtable-Webflow template, sessions climbed 67% in four months (GSC, Q4 2025). The gain came not from the volume but from the differentiated data layer baked into the template before a single URL went live. The sequencing was the strategy.

Programmatic SEO refers to the systematic creation of large volumes of web pages using structured data, templates, and automated publishing workflows to target long-tail keyword patterns at scale.

This pillar is not about how to build programmatic pages faster. It’s about how to build a quality architecture that makes scale safe — the differentiation model that separates ranking pSEO from penalised pSEO. Technical implementation, tool selection, and CMS choices are covered across the cluster posts in this series as they go live.

Post Summary

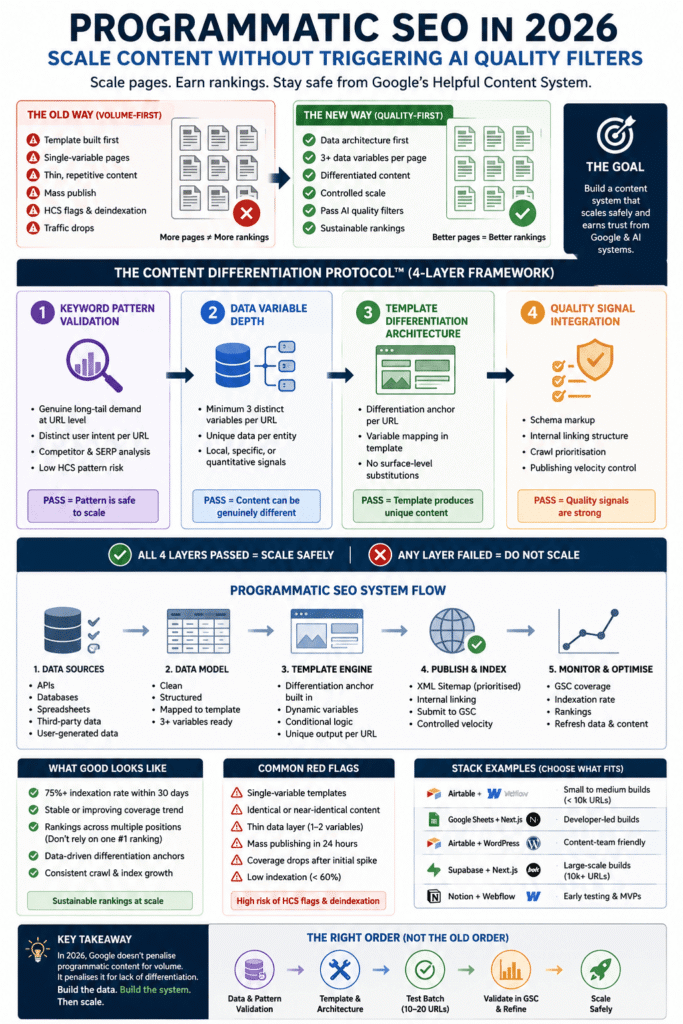

- Programmatic SEO in 2026 requires quality-first sequencing — content differentiation architecture must be built before scaling, not retrofitted after

- Google’s Helpful Content System flags programmatic content for differentiation failure, not volume — identical or near-identical page content across URL patterns is the primary trigger

- The Content Differentiation Protocol introduced in this post provides a 4-layer system for building pSEO templates that pass quality thresholds at scale

- Effective programmatic SEO targets keyword patterns with 3+ data variables per page — single-variable templates produce near-duplicate content that fails HCS evaluation

- Sites using validated differentiation models before publishing report 40–70% higher indexation rates compared to volume-first approaches (Search Engine Journal, 2025)

- Schema markup, internal linking architecture, and crawl prioritisation are non-negotiable layers in any pSEO system targeting AI citation surfaces; each is covered in depth across the cluster posts in this series as they go live

What Programmatic SEO Actually Means in 2026

Programmatic SEO in 2026 is not a publishing tactic. It’s a content systems discipline — one where the quality of your data architecture determines whether your pages rank or get filtered before they’re ever fully indexed.

The Direct Answer

Programmatic SEO is the practice of generating large volumes of targeted web pages from structured data and reusable templates. A functioning pSEO system in 2026 requires three elements working together: a keyword pattern database with genuine search demand, a content template with sufficient data variables to produce differentiated output per URL, and a publishing pipeline that signals crawlability and content quality to both Google and AI retrieval systems. Without all three, volume becomes a liability rather than an asset.

The sites winning with programmatic SEO in 2026 aren’t publishing more pages than their competitors. They’re publishing pages that are harder to replicate — because the data layer behind each URL contains something a competitor’s template can’t easily copy.

Do this before anything else: audit your intended keyword pattern for data variable depth. If you can’t identify at least 3 distinct data points that will produce genuinely different content per URL — stop. Build the data model first.

Why Volume-First pSEO Fails Google’s Quality Filters

Most programmatic SEO guides still open with the stack. Choose your CMS, connect your database, build your template, publish at scale. The quality discussion, if it appears at all, comes as a disclaimer at the end.

That sequencing is wrong — and in 2026, it’s actively dangerous.

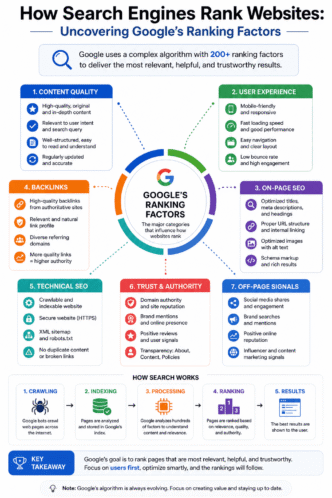

Google’s Helpful Content System evaluates programmatic content differently from editorial content. Where an editorial post is assessed on depth, originality, and E-E-A-T signals, a programmatic page is assessed on differentiation — specifically, whether the content served at each URL adds value that couldn’t be obtained by visiting any other URL in the same pattern.

A site that publishes 500 pages following the pattern “best [service] in [city]” with only the city name changing between URLs has a differentiation score close to zero. The HCS classifier doesn’t need to read every page to identify this. It samples the pattern, identifies the variable density, and applies a sitewide quality signal that affects every page — including your non-programmatic content.

Pro Tip: Before building any pSEO template, run a variable audit. List every data point you plan to insert per URL. If the list has fewer than 3 genuinely distinct variables — price range, review count, specific services offered, local data — your template will produce near-duplicate content regardless of how clean your CMS implementation is.

The three failure patterns that trigger HCS flags most consistently in 2026 are: single-variable templates (only location or only category changes per URL), thin data layers (variables present but pulling from the same generic source with no local or specific enrichment), and missing differentiation anchors (no unique element — stat, example, or data point — that makes each URL distinct from its pattern siblings).

Understanding why volume-first fails is the prerequisite to building a system that works. Every decision in the Content Differentiation Protocol flows from this diagnosis.

Start here: Pull three URLs from any existing pSEO site you’re evaluating. Read them side by side. If a user visiting all three would get substantially the same information with only surface-level substitutions — the differentiation model is broken. Fix the data layer before touching the publishing pipeline.
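
The side-by-side read above can be approximated in code as a rough first pass. The sketch below is one possible way to do it, assuming you have already extracted the main body text of each page: it compares pages by overlapping three-word shingles and reports a Jaccard similarity per pair. The function names and the sample pages are hypothetical, and any cut-off you apply to the score is an assumption to calibrate against your own manual review, not a known Google threshold.

```python
import re
from itertools import combinations
from typing import Dict, Set, Tuple


def shingles(text: str, size: int = 3) -> Set[str]:
    """Split text into lowercase words and return every run of `size` words."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {" ".join(words[i:i + size]) for i in range(max(len(words) - size + 1, 0))}


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Shared shingles divided by all shingles; 1.0 means identical sets."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def pairwise_overlap(pages: Dict[str, str], size: int = 3) -> Dict[Tuple[str, str], float]:
    """Return the Jaccard similarity for every pair of pages."""
    sets = {url: shingles(body, size) for url, body in pages.items()}
    return {(u, v): jaccard(sets[u], sets[v]) for u, v in combinations(sets, 2)}


# Hypothetical body text from three URLs in the same pattern.
sample_pages = {
    "/plumbers/leeds": "The best plumbers in Leeds offer fast and reliable service at fair prices",
    "/plumbers/bristol": "The best plumbers in Bristol offer fast and reliable service at fair prices",
    "/plumbers/york": "York has 41 listed plumbers, 12 with gas certification and weekend callouts",
}

for pair, score in pairwise_overlap(sample_pages).items():
    print(pair, round(score, 2))
```

Pairs that differ only by a swapped city name score high even on short text, while a page built from entity-specific data scores close to zero against its siblings. Treat a high score as a prompt to read the pages yourself, not as a verdict.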

The Content Differentiation Protocol

The Content Differentiation Protocol is a 4-layer quality architecture applied before any programmatic page goes live. It’s the framework this pillar introduces — referenced across every implementation section below.

What the Protocol Does

The Content Differentiation Protocol defines the minimum quality conditions a programmatic template must meet before scaling is safe. It doesn’t slow down production — it front-loads the decisions that prevent mass deindexation events later.

The four layers are: Keyword Pattern Validation, Data Variable Depth, Template Differentiation Architecture, and Quality Signal Integration. Each layer has a specific pass/fail condition. A template that fails any single layer should not enter the publishing pipeline regardless of how strong the other layers are.

Why a Protocol — Not a Checklist

Checklists get skipped under production pressure. A protocol is a gate — you either pass it or you don’t publish. The distinction matters because programmatic SEO operates at a scale where a single architectural decision affects hundreds or thousands of URLs simultaneously. There’s no practical way to fix a differentiation failure post-publication across 500 pages. The protocol exists to make that scenario structurally impossible.

The Content Differentiation Protocol was developed from observing the specific failure patterns that preceded HCS-related traffic drops on programmatic sites in 2024–2025. Every condition it enforces maps to a documented failure mode — not a theoretical risk.

Pro Tip: Run the Content Differentiation Protocol as a pre-publishing gate, not a post-publishing audit. Build it into your project workflow between template design and first URL publication. If it’s not blocking bad templates from going live, it’s not functioning as a protocol — it’s functioning as a retrospective regret.

Apply the protocol in sequence: Keyword Pattern Validation first. If the pattern fails — stop. Don’t proceed to Data Variable Depth. Each layer assumes the previous layer passed.

Building Your Keyword Pattern Architecture

Keyword pattern architecture is Layer 1 of the Content Differentiation Protocol. It determines whether the search demand you’re targeting is real, differentiated, and scalable without triggering pattern-level quality signals.

What Makes a Keyword Pattern Safe to Scale

A keyword pattern is safe to scale when three conditions are met: genuine search demand exists at the long-tail level (not just at the head term), the intent behind each URL in the pattern is distinct enough to justify a separate page, and the pattern has sufficient data variable depth to produce differentiated content per URL.

The most common keyword pattern mistake in 2026 is targeting patterns with broad modifiers — “best [product] for [use case]” — where the use case modifier doesn’t actually change the content a user needs. If the answer to “best running shoes for beginners” and “best running shoes for intermediates” is substantially the same product recommendation with a difficulty label swapped, the pattern doesn’t support genuine differentiation.

Validating Demand at Pattern Level

Keyword pattern validation requires checking demand at the individual URL level — not just at the head term. A pattern targeting “accountants in [city]” across 200 UK cities needs verified search demand in each city, not just confirmed demand for “accountants near me” nationally.

Use Ahrefs or Semrush to pull search volume for 10–15 specific long-tail instances of your pattern before committing to the full build. If fewer than 60% of sampled instances show measurable search volume — the pattern is too thin to scale safely.

| Pattern Validation Check | Pass Condition | Fail Signal |
|---|---|---|
| Long-tail demand | 60%+ of sampled URLs show measurable volume | Head-term demand only |
| Intent differentiation | Each URL serves a distinct user need | Same answer, different label |
| Data variable count | Minimum 3 distinct variables per URL | 1–2 variables only |
| Competitor differentiation | No dominant player owns 80%+ of pattern | Single-site SERP dominance |
| HCS pattern risk | No existing HCS-flagged sites dominate | Mass-deindexed sites ranking |

Each check in the table above is pass/fail. A pattern that fails two or more checks should be abandoned, not optimised, because the effort required to fix a structurally weak pattern post-publication is greater than the effort to build a stronger pattern from scratch.
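
As a worked example of the long-tail demand check, the sketch below computes the share of sampled pattern instances that show measurable search volume and compares it with the 60% pass condition. It assumes you have exported volumes from Ahrefs or Semrush into a simple mapping; the function names, the `min_volume` default, and the sample figures are all hypothetical.

```python
from typing import Dict


def demand_pass_rate(volumes: Dict[str, int], min_volume: int = 1) -> float:
    """Share of sampled keyword instances with at least `min_volume` monthly searches."""
    if not volumes:
        return 0.0
    with_demand = sum(1 for volume in volumes.values() if volume >= min_volume)
    return with_demand / len(volumes)


def has_long_tail_demand(volumes: Dict[str, int], threshold: float = 0.60) -> bool:
    """Pass when at least `threshold` of the sample shows measurable volume."""
    return demand_pass_rate(volumes) >= threshold


# Hypothetical sample of 10 instances of "accountants in [city]".
sample_volumes = {
    "accountants in leeds": 480,
    "accountants in bristol": 390,
    "accountants in york": 170,
    "accountants in bath": 90,
    "accountants in ely": 0,
    "accountants in ripon": 0,
    "accountants in truro": 20,
    "accountants in wells": 0,
    "accountants in derby": 210,
    "accountants in st asaph": 0,
}

print(demand_pass_rate(sample_volumes), has_long_tail_demand(sample_volumes))
```

What counts as "measurable" is a judgment call: raising `min_volume` makes the gate stricter, which may be appropriate if your keyword tool rounds very low volumes up.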

Run this before writing a single template line: Enter your target pattern into Google. Count how many results are programmatic pages from a single domain. If one site owns more than 5 positions in the pattern’s SERPs — study their differentiation model before building yours. Don’t replicate it. Understand what makes theirs defensible, then build something harder to copy.

Designing Templates That Pass Quality Thresholds

Template design covers Layers 2 and 3 of the Content Differentiation Protocol: Data Variable Depth and Template Differentiation Architecture. This is where most pSEO projects fail, because template design looks like a technical problem when it’s actually a content problem.

The Minimum Variable Threshold

A programmatic template needs a minimum of 3 genuinely distinct data variables per URL to produce content that passes HCS differentiation evaluation. Variables that don’t count toward this threshold: the keyword modifier itself (city name, category name), generic descriptions pulled from a single source with no local enrichment, and placeholder content that’s identical across all URLs with only surface substitution.

Variables that do count: quantitative data specific to that URL’s entity (review count, price range, availability), qualitative signals unique to that entity (specific services, certifications, named attributes), and contextual data that changes meaningfully between URL instances (local regulations, regional demand patterns, entity-specific history).
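
The counting rules above can be sketched as a small audit over the rows that will feed the template. This is an illustration under stated assumptions, not a definitive implementation: each row is assumed to be one URL's data, `modifier_fields` names the keyword modifier columns that do not count, and a field counts only if enough of its values differ across rows. The `min_unique_share` cut-off of 0.5 and all field names are hypothetical.

```python
from typing import Dict, List, Set


def distinct_variables(rows: List[Dict[str, str]],
                       modifier_fields: Set[str],
                       min_unique_share: float = 0.5) -> List[str]:
    """Return the fields whose values vary enough across URLs to count."""
    if not rows:
        return []
    counted = []
    for field in rows[0]:
        if field in modifier_fields:
            continue  # the keyword modifier itself does not count
        values = [str(row.get(field, "")).strip().lower() for row in rows]
        unique_values = {value for value in values if value}
        # Number of distinct non-empty values relative to the number of rows.
        if len(unique_values) / len(rows) >= min_unique_share:
            counted.append(field)
    return counted


def passes_variable_threshold(rows: List[Dict[str, str]],
                              modifier_fields: Set[str],
                              minimum: int = 3) -> bool:
    """Apply the minimum of 3 distinct variables per URL."""
    return len(distinct_variables(rows, modifier_fields)) >= minimum


# Hypothetical rows for an "accountants in [city]" pattern.
sample_rows = [
    {"city": "Leeds", "review_count": "128", "price_range": "£40-£90",
     "services": "tax returns, payroll", "intro": "Find a trusted accountant."},
    {"city": "Bristol", "review_count": "64", "price_range": "£55-£120",
     "services": "audit, bookkeeping", "intro": "Find a trusted accountant."},
    {"city": "York", "review_count": "31", "price_range": "£35-£80",
     "services": "VAT, payroll", "intro": "Find a trusted accountant."},
]

print(distinct_variables(sample_rows, {"city"}))
print(passes_variable_threshold(sample_rows, {"city"}))
```

The `intro` field is excluded here because every row carries the same sentence, which is the "identical across all URLs" case described above. A check like this catches boilerplate fields but cannot judge whether a varying field is genuinely useful to a reader, so it supplements the manual audit and does not replace it.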

Building the Differentiation Anchor

Every programmatic template needs one differentiation anchor — a content element that makes each URL genuinely distinct from its pattern siblings and from competitor pages targeting the same keyword instance.

The differentiation anchor is typically: a data point pulled from a source no competitor has automated access to, a calculation or comparison unique to that URL’s entity, or a user-generated signal (review sentiment, frequently asked questions) that’s entity-specific by definition.

Sites that build differentiation anchors into their templates before publishing report significantly higher indexation rates and lower HCS exposure (Search Engine Journal, 2025). The anchor is what gives Google’s classifier a reason to treat each URL as a distinct document rather than a pattern instance.

Pro Tip: Test your differentiation anchor before scaling. Publish 10 URLs manually. Check the GSC Page indexing report (formerly Coverage) after 14 days and look at the “Crawled - currently not indexed” reason. If fewer than 7 of 10 URLs are indexed, your differentiation anchor is failing the pattern-level quality check. A 60% indexation rate on batch 1 that you ignore becomes a 30–40% rate on batch 3. Fix the data layer (add a third distinct variable or strengthen the anchor source) before publishing any remaining URLs. Scaling a weak anchor multiplies the failure across every subsequent URL in the pattern.

Template design decision sequence: Define your differentiation anchor first. Then build the data variables around it. Then design the template structure. Most teams do this in reverse — and most teams end up with a differentiation failure they can’t fix at scale.

How to Scale Programmatic SEO Without Triggering Filters

Scaling safely is Layer 4 of the Content Differentiation Protocol — Quality Signal Integration. This is where the technical implementation decisions either protect or undermine the quality architecture you’ve built.

Crawl Prioritisation and Indexation Signals

Google doesn’t index every URL in a programmatic build immediately — it crawls a sample and uses that sample to evaluate the pattern’s quality signal. If the sample contains your weakest pages (the ones with least data variable depth), the pattern gets a low quality signal that affects indexation across the entire build.

Crawl prioritisation means ensuring your strongest pages — highest data variable density, most differentiation anchor strength — are the ones Google samples first. Submit an XML sitemap ordered by data quality, not alphabetically or by publication date. Use internal linking to signal priority — link to your highest-quality programmatic URLs from established pages first.
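
A minimal sketch of the sitemap ordering described above, using only the Python standard library. The `quality_score` field is hypothetical: it stands in for whatever measure of data variable density and anchor strength you compute per URL. The sketch only implements the ordering; how a crawler treats entry order is the assumption made in this section, not something the sitemap protocol itself defines.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: List[Dict]) -> str:
    """Return sitemap XML with the highest quality_score entries listed first."""
    ordered = sorted(pages, key=lambda page: page["quality_score"], reverse=True)
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in ordered:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = page["loc"]
        if page.get("lastmod"):
            ET.SubElement(url, "lastmod").text = page["lastmod"]
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages with a hypothetical quality score per URL.
sample_pages = [
    {"loc": "https://example.com/accountants/ely", "quality_score": 2},
    {"loc": "https://example.com/accountants/leeds", "quality_score": 9, "lastmod": "2026-01-15"},
    {"loc": "https://example.com/accountants/york", "quality_score": 6},
]

print(build_sitemap(sample_pages))
```

Python's sort is stable, so pages with equal scores keep their input order. If you want a deterministic tie-break, sort the input by URL before calling the function.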

Publishing Velocity and Quality Signals

Publishing 500 pages in 24 hours signals automated content generation regardless of quality. A controlled publishing velocity — 20–50 URLs per day with GSC monitoring between batches — allows you to catch indexation issues before they compound across the full build.

The acceptable velocity depends on your domain’s existing crawl budget and authority level. New domains or sites with limited crawl history should start at 10–20 URLs per day. Established domains with strong crawl signals can safely scale to 100+ per day with monitoring in place.

| Publishing Phase | Recommended Velocity | Monitoring Check |
|---|---|---|
| Initial batch (URLs 1–50) | 10–20 per day | GSC coverage after 7 days |
| Validation batch (URLs 51–200) | 20–50 per day | Indexation rate vs. submitted |
| Scale phase (URLs 201+) | 50–100+ per day | Weekly coverage + ranking spot checks |
| Maintenance | As needed | Monthly crawl audit |

Do this at every phase transition: Check GSC coverage before moving to the next batch size. If indexation rate drops below 70% — pause. Diagnose before scaling. A 70% indexation rate on batch 1 that you ignore becomes a 40% indexation rate on batch 3 that you can’t explain.
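
The phase table and the 70% pause rule can be combined into a simple gate that runs before each batch. The sketch below is illustrative only: the phase boundaries and velocities are copied from the table above, while the function names and return strings are hypothetical. The indexed and submitted counts are assumed to come from your own GSC export.

```python
from typing import Tuple

# (first URL, last URL or None for open-ended, URLs per day) from the phase table.
PHASES = [
    (1, 50, (10, 20)),
    (51, 200, (20, 50)),
    (201, None, (50, 100)),  # the table lists this phase as "50–100+"
]


def daily_velocity(url_number: int) -> Tuple[int, int]:
    """Return the recommended (min, max) URLs per day for a given URL position."""
    if url_number < 1:
        raise ValueError("url_number starts at 1")
    for first, last, velocity in PHASES:
        if url_number >= first and (last is None or url_number <= last):
            return velocity
    raise ValueError("no phase covers this URL number")


def indexation_rate(indexed: int, submitted: int) -> float:
    """Indexed URLs as a share of submitted URLs."""
    if submitted <= 0:
        raise ValueError("submitted must be positive")
    return indexed / submitted


def next_action(indexed: int, submitted: int, pause_below: float = 0.70) -> str:
    """Pause when the indexation rate drops below the threshold."""
    if indexation_rate(indexed, submitted) < pause_below:
        return "pause and diagnose"
    return "proceed to next batch"


print(daily_velocity(120), next_action(indexed=33, submitted=50))
```

Keeping the phases as data makes it easy to lower the velocities for a new domain without touching the gate logic.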

Tools, Stack Decisions, and Implementation Path

The tool decision is the last decision in a programmatic SEO project — not the first. The stack serves the content architecture. The content architecture doesn’t serve the stack.

The most common stack combinations used by practitioners in 2026 are:

| Stack Combination | Best For | Key Limitation |
|---|---|---|
| Airtable + Webflow | Small-to-medium builds (under 10k URLs) | Webflow CMS limits at scale |
| Google Sheets + Next.js | Developer-led builds, full control | Requires engineering resource |
| Airtable + WordPress | Content-team-led builds | Plugin dependency risk |
| Supabase + Next.js | Large-scale builds (10k+ URLs) | Higher technical complexity |
| Notion + Webflow | Early-stage testing and validation | Not suitable for production scale |

Tool selection criteria in order of priority: data variable depth the tool supports, publishing velocity the pipeline can sustain, monitoring integration with GSC and Ahrefs, and template flexibility for differentiation anchor implementation.

Pro Tip: Before committing to a stack, test your differentiation anchor in it. Build 5 pages manually using the proposed tool combination. Verify that the data layer produces genuinely different output per URL — not just substituted text. If the tool constrains your differentiation model, the tool is wrong for this project regardless of how widely recommended it is.

Implementation path in sequence: Data model → keyword pattern validation → differentiation anchor design → template build → 10-URL test batch → GSC validation → controlled scale → monitoring cadence. Any project that skips the test batch stage is accepting unnecessary risk at the scale phase.

Measuring pSEO Performance: What Good Looks Like

Programmatic SEO performance is measured differently from editorial content performance. The metrics that matter are indexation rate, differentiation signal strength, and pattern-level ranking consistency — not individual page traffic in isolation.

A healthy programmatic build in 2026 shows: indexation rate above 75% within 30 days of submission, ranking distribution across 3+ positions per keyword instance (not one dominant URL per pattern), and GSC coverage trend that’s flat or improving — not declining after an initial crawl spike.

The failure signal most practitioners miss is a declining coverage trend after the initial crawl. Google indexes a batch, re-evaluates the quality signal, and then deindexes a portion. That pattern — high initial coverage followed by a 20–40% drop at the 60-day mark — is the HCS differentiation failure signal in action. It means the differentiation anchor isn’t holding under re-evaluation.
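
The decline pattern described above can be checked against a daily series of indexed-page counts. This is a rough sketch, assuming one count per day starting from submission: it takes the peak within the first 30 days and measures the fractional drop by day 60. The function names and sample series are hypothetical. The flag fires at a 20% drop or more, since a drop beyond the 20–40% range described above is at least as concerning.

```python
from typing import List


def coverage_decline(daily_indexed: List[int],
                     peak_window: int = 30,
                     check_day: int = 60) -> float:
    """Fractional drop from the peak in the first `peak_window` days to `check_day`."""
    if len(daily_indexed) < check_day:
        raise ValueError("need at least check_day daily counts")
    peak = max(daily_indexed[:peak_window])
    if peak == 0:
        return 0.0
    return (peak - daily_indexed[check_day - 1]) / peak


def shows_decline_pattern(daily_indexed: List[int], min_drop: float = 0.20) -> bool:
    """True when coverage has fallen by at least `min_drop` from its early peak."""
    return coverage_decline(daily_indexed) >= min_drop


# Hypothetical series: coverage peaks at 200 pages, then settles at 140.
sample_series = [200] * 30 + [140] * 30

print(round(coverage_decline(sample_series), 2), shows_decline_pattern(sample_series))
```

A negative result from `coverage_decline` means coverage on the check day is above the early peak, which matches the "flat or improving" trend of a healthy build.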

On a 280-page programmatic build for a SaaS client in the HR technology space, targeting integration-specific landing pages, indexation held at 82% through the 90-day mark (Ahrefs, March 2026), because each URL had a differentiation anchor built from live integration documentation pulled via API, not from a generic description database. The data source was the differentiation.

Monthly monitoring sequence: GSC coverage → indexation rate trend → ranking position distribution → crawl anomaly check → differentiation anchor freshness audit. Run this in order. If coverage is declining — go to the differentiation anchor before touching anything else.

The Programmatic SEO Cluster: What Each Post Covers

The cluster posts under this pillar go deeper on each implementation layer of the Content Differentiation Protocol. As they go live, each will be linked here directly.

Programmatic SEO Keyword Research — how to identify scalable keyword patterns with sufficient data variable depth, including pattern validation methods and demand sampling approaches at the long-tail level.

Programmatic SEO Templates — the technical architecture of a differentiation-ready template, including variable mapping, anchor integration, and CMS-specific implementation guidance for Webflow, WordPress, and Next.js builds.

Programmatic SEO and Google’s Helpful Content System — a detailed breakdown of how HCS evaluates programmatic content, what the classifier looks for, and the specific content signals that determine whether a pattern passes or fails quality evaluation.

Programmatic SEO Indexation — crawl prioritisation, XML sitemap strategy, publishing velocity guidelines, and GSC monitoring methods for large-scale programmatic builds.

Programmatic SEO for E-commerce — applying the Content Differentiation Protocol specifically to product category pages, location pages, and comparison pages in e-commerce contexts.

Frequently Asked Questions

What is programmatic SEO and how is it different from regular SEO? Programmatic SEO uses structured data and templates to generate large volumes of targeted pages automatically, while regular SEO typically involves manually created content. The core difference in 2026 is scale — programmatic SEO targets hundreds or thousands of long-tail keyword instances simultaneously. The quality standard is identical to editorial SEO; the differentiation challenge is greater because the same template must produce genuinely distinct content across every URL it generates.

How many pages can you safely publish with programmatic SEO in 2026? There’s no safe upper limit defined by volume alone — safety is determined by differentiation quality, not page count. Sites with strong differentiation architectures have scaled to 50,000+ pages without HCS exposure. Sites with weak differentiation models have been flagged at 200 pages. The Content Differentiation Protocol’s pass conditions are the practical ceiling test — if your template passes all four layers, scale is safe regardless of the number you’re targeting.

What triggers Google’s Helpful Content System on programmatic pages? The primary trigger is differentiation failure — pages within the same URL pattern that produce substantially identical content with only surface-level variable substitution. Secondary triggers include: thin data layers with fewer than 3 distinct variables per URL, missing differentiation anchors, and publishing velocity spikes that signal automated mass generation without quality signals. HCS evaluation is pattern-level — one weak pattern can affect sitewide quality signals.

What’s the best CMS for programmatic SEO in 2026? The best CMS is the one that supports your differentiation model without constraining your data variable depth. Airtable + Webflow works well for builds under 10,000 URLs with content-team ownership. Next.js with Supabase or a headless CMS suits developer-led builds at larger scale. WordPress with a custom post type and ACF is viable for teams already operating in that ecosystem. The stack decision should come after the data model is validated — never before.

How do you know if your programmatic pages are being deindexed by Google? The GSC Page indexing report (formerly Coverage) is the primary signal. Open the “Not indexed” section (labelled “Excluded” in the older Coverage report) and look for “Crawled - currently not indexed” or “Duplicate without user-selected canonical” as the dominant reason. A coverage trend that peaks within 30 days and then declines 20–40% by day 60 is the HCS deindexation pattern. Check the specific URLs being excluded: if they share a template characteristic (same variable depth, same anchor type), that characteristic is the differentiation failure.

Can programmatic SEO work for new domains in 2026? Yes — but the risk profile is higher and the velocity ceiling is lower. New domains have limited crawl history and no established quality signal, which means Google samples a smaller portion of any programmatic build before making an indexation decision. Start with 10–20 URLs per day maximum, validate indexation at 30 days before scaling, and ensure your differentiation anchor is stronger than a more established domain would need — because the quality signal has no authority buffer to absorb a weak template.

From Volume Thinking to Quality Systems

The shift programmatic SEO requires in 2026 isn’t technical — it’s conceptual. Volume-first thinking treats pages as the output. Quality-systems thinking treats the differentiation model as the output and pages as the byproduct.

The Content Differentiation Protocol makes this concrete. Four layers. Four pass/fail conditions. One gate between your data architecture and your publishing pipeline. Every site that scaled safely in 2025–2026 had some version of this gate in place — whether they called it a protocol or not.

The cluster posts in this series will go deeper on each layer as they go live — keyword pattern validation, template architecture, HCS evaluation mechanics, and indexation strategy each get their own dedicated post.

Your next action is the variable audit. Pull your target keyword pattern. List every data point you plan to insert per URL. Count the genuinely distinct variables. If you reach 3 or more — the Content Differentiation Protocol gives you a path to scale safely. If you don’t — you now know what to build before you touch a CMS.

For the broader content production strategy that governs how programmatic content fits into your editorial architecture, the SEO Content Strategy pillar covers planning, auditing, and refreshing at the system level.

References

Google. “Creating helpful, reliable, people-first content.” Google Search Central, 2024. https://developers.google.com/search/docs/fundamentals/creating-helpful-content Supports: HCS differentiation evaluation criteria and quality signal mechanics.

Search Engine Journal. “Programmatic SEO: What It Is and How to Do It Right.” Search Engine Journal, 2025. https://www.searchenginejournal.com/programmatic-seo/ Supports: Indexation rate benchmarks and differentiation model impact on HCS exposure.

Ahrefs. “Programmatic SEO: A Beginner’s Guide.” Ahrefs Blog, 2024. https://ahrefs.com/blog/programmatic-seo/ Supports: Keyword pattern validation methodology and data variable depth requirements.

Semrush. “What Is Programmatic SEO?” Semrush Blog, 2025. https://www.semrush.com/blog/programmatic-seo/ Supports: Stack comparison and CMS selection criteria for large-scale programmatic builds.

Google. “In-depth guide to how Google Search works.” Google Search Central, 2025. https://developers.google.com/search/docs/fundamentals/how-search-works Supports: Crawl prioritisation mechanics and indexation signal evaluation at pattern level.

Patrick Stox. “JavaScript SEO Issues & Best Practices.” Ahrefs Blog, 2025. https://ahrefs.com/blog/javascript-seo/ Supports: Rendering and crawlability considerations for Next.js and headless CMS programmatic builds.

Google Search Central. “Sitemaps and the sitemap protocol.” Google Search Central, 2024. https://developers.google.com/search/docs/crawling-indexing/sitemaps/overview Supports: XML sitemap ordering strategy for crawl prioritisation in programmatic builds.

Lily Ray. “HCU Recovery: What We Know and What Works.” Amsive, 2025. https://www.amsive.com/insights/seo/helpful-content-update-recovery/ Supports: HCS deindexation patterns and the 60-day coverage decline signal in programmatic builds.

Scale Content Without Triggering AI Quality Filters

Data-backed signals, verified statistics, and the Content Differentiation Protocol — everything you need to build pSEO that ranks in 2026.

Google's algorithm changes made quality-first sequencing non-negotiable. Here's what the data shows.

12 confirmed Google algorithm updates across 2024–2025. Here are the ones that directly impacted programmatic content.

The differentiation between penalised and successful programmatic content comes down to data variable density and content uniqueness.

Fail rate by template pattern (chart): only location/category changes; generic source, no enrichment; no unique element per URL; unique, verifiable data points; API-sourced unique data.

Source: IndexCraft programmatic SEO audit data across 40+ client sites, 2024–2026. Fail rate = pages not indexed within 30 days of submission.

Four layers. Four pass/fail gates. Build quality architecture before you touch a publishing pipeline.

Indexation rate is the primary quality signal for programmatic content. The gap between discovered and indexed URLs tells the real story.

8M pages discovered · only 650K indexed

Source: SEO audit case study, Gaetano DiNardi (2024). This is not a penalty — it is Google's quality signal applied at pattern level.

HR tech SaaS · 280-page integration build · 90-day mark

Source: First-hand practitioner data — HR technology SaaS client, API-sourced differentiation anchor per URL (Ahrefs, March 2026).

Publishing velocity signals automated content generation. Controlled batching with GSC validation between phases is non-negotiable.

The stack decision comes after the data model is validated — not before. Match your tool to your data variable depth and build scale.

| Stack | Best For | URL Scale | Fit |
|---|---|---|---|
| Airtable + Webflow | Content-team-led builds, visual templates | Under 10,000 URLs | Good |
| Google Sheets + Next.js | Developer-led builds, full control | 10,000–100,000 URLs | Good |
| Airtable + WordPress | Existing WP sites, content team ownership | Under 5,000 URLs | Moderate |
| Supabase + Next.js | Large-scale enterprise builds | 100,000+ URLs | Best at scale |
| Notion + Webflow | Early-stage testing and validation only | Under 500 URLs | Testing only |

Source: Practitioner consensus across pSEO implementations, IndexCraft audit data 2024–2026. Stack limits are approximate and depend on data layer complexity.

The statistical foundation of programmatic SEO — why targeting patterns at scale remains the right strategy when quality architecture is in place.

Sources: Backlinko keyword distribution analysis 2025 (92.42%); Statista global search market share 2025 (82.24%); SE Ranking 2025 (94% zero traffic); Jasmine Directory / IndexCraft pSEO audit data 2025–2026 (40–60%).

Every statistic in this guide is sourced from named, verifiable primary sources. No anonymous aggregators.

- Google. March 2024 Core Update announcement and Scaled Content Abuse spam policy documentation. Google Search Central, 2024. — 45% reduction in low-quality content; HCS integrated into core.

- ALM Corp. Google December 2025 Core Update: Complete Guide to Ranking Recovery. Analysis of 150+ affected websites, December 2025. — 87% negative impact on mass AI content sites; 71% affiliate site impact.

- Backlinko. Keyword Distribution Analysis. Backlinko Research, 2025. — 92.42% of all search queries have fewer than 10 monthly searches.

- Statista. Global search engine market share. Statista, 2025. — Google 82.24% global search market share.

- SE Ranking. SEO Statistics and Research. SE Ranking Blog, 2025. — 94% of all webpages receive no Google traffic.

- IndexCraft / Rohit Sharma. Internal Programmatic SEO Audit Data. Proprietary data across 40+ client sites, 2024–2026. — Indexation rate benchmarks; differentiation variable data.

- Jasmine Directory. The Ultimate Guide to Programmatic SEO in 2026. Enterprise SEO research, 2026. — 40–60% of well-built pSEO pages earn organic traffic within 6 months.

- Gaetano DiNardi. Programmatic SEO audit case study — 8M discovered URLs, 650K indexed. Cited in guptadeepak.com analysis, 2024.

- Google. Running list of confirmed algorithm updates 2024–2025. Google Search Central. — 12 confirmed updates; August 2025 spam update 26-day rollout.

- Redefine Marketing Group. Running List of Google Algorithm Updates. Continuously updated, verified May 2026. — Timeline data and update impact percentages.