Ever launched a website only to discover Google indexed your staging pages, admin panels, and embarrassing test content? That nightmare scenario happens more often than you’d think—and it’s usually because of a misconfigured robots.txt file.

Robots.txt SEO is one of those invisible powerhouses that either protects your site’s crawl budget or accidentally tanks your rankings. According to a 2024 Search Engine Journal study, 34% of websites have critical robots.txt errors that block important pages from search engines. That’s like locking your storefront while wondering why customers aren’t coming in.

This guide breaks down exactly what to block, what to allow, and how to avoid the catastrophic mistakes that cost sites thousands in lost traffic. Let’s turn your robots.txt from a liability into an asset.

What Is a Robots.txt File and Why It Matters

A robots.txt file is a plain text document that tells search engine crawlers which pages they can and cannot access on your site. It lives in your website’s root directory and acts as a bouncer at a nightclub—deciding who gets in and who stays outside.

The file uses crawl directives to communicate with bots. Google, Bing, and other search engines check this file before crawling your site, respecting the rules you’ve set (though they’re not legally required to—it’s more like polite convention).

Why does this matter? Because search engines allocate a limited crawl budget to each site. If Googlebot wastes time crawling duplicate pages, admin areas, or irrelevant content, it might miss your valuable pages entirely. Your technical SEO fundamentals should always include proper robots.txt configuration.

How Does Robots.txt Work?

When a search engine bot arrives at your site, it first looks for yoursite.com/robots.txt. If it finds the file, it reads the instructions before proceeding. If it doesn’t find the file, the bot assumes everything is fair game and crawls freely.

The file contains user-agent specifications (which bot the rule applies to) followed by Allow or Disallow rules. Each directive tells the bot whether it can access specific URLs or directories.
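To make that lookup concrete, here is a minimal Python sketch using the standard library’s urllib.robotparser. The rules and URLs are made-up examples on the article’s placeholder domain. This parser uses plain prefix matching where the first matching rule wins, so treat it as an approximation of crawler behavior, not a replica of Googlebot.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules; yoursite.com is the article's placeholder domain.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /staging/
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Parse the rules, then ask whether this bot may fetch this URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in (
        "https://yoursite.com/blog/robots-guide/",
        "https://yoursite.com/wp-admin/options.php",
    ):
        verdict = "crawl" if is_allowed(ROBOTS_TXT, "Googlebot", url) else "skip"
        print(url, "->", verdict)
```

An empty rule set allows everything, which mirrors what bots do when no file exists.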

Here’s the twist: robots.txt is a suggestion, not a command. Well-behaved bots like Googlebot follow the rules religiously. Bad bots—like scrapers and spammers—completely ignore them. That’s why robots.txt should never be used for security; it’s purely a crawl management tool as explained in Google’s official documentation.

Pro Tip: Never put sensitive information in robots.txt. The file is publicly accessible to anyone, including hackers and competitors who want to see exactly what you’re trying to hide.

Understanding User-Agent Directives

The user-agent line specifies which bot your rule applies to. Different search engines use different crawlers, and you can target them individually or all at once.

User-agent: * means the rule applies to all bots. User-agent: Googlebot targets only Google’s crawler. User-agent: Bingbot speaks specifically to Bing’s bot.

Why target specific bots? Maybe you want Google to crawl everything but block aggressive crawlers that hammer your server. Or perhaps you’re running tests and only want certain engines to see particular content. Understanding user-agent targeting gives you surgical control over who accesses what.
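Here is a small sketch of per-bot targeting, checked with Python’s urllib.robotparser. "AggressiveBot" is a hypothetical crawler name and the paths are invented for illustration. Each bot is answered by the group that names it, and unnamed bots fall back to the * group.

```python
from urllib.robotparser import RobotFileParser

# Three groups: one per named bot, plus a catch-all. Names are illustrative.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow:

User-agent: AggressiveBot
Disallow: /

User-agent: *
Disallow: /private/
"""


def can_crawl(user_agent: str, path: str) -> bool:
    """Return True if the named bot may fetch the path under ROBOTS_TXT."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, path)


if __name__ == "__main__":
    for bot in ("Googlebot", "AggressiveBot", "SomeOtherBot"):
        print(bot, can_crawl(bot, "/private/report.html"), can_crawl(bot, "/blog/"))
```

An empty Disallow line means "nothing is blocked", which is why the Googlebot group grants full access.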

What Should You Block in Robots.txt?

Admin and Login Pages

Block your WordPress admin panel, login pages, and any backend functionality. These pages waste crawl budget and create security risks if publicly indexed.

User-agent: *

Disallow: /wp-admin/

Disallow: /wp-login.php

According to Moz’s 2024 technical SEO research, 41% of WordPress sites leave admin areas crawlable, creating unnecessary strain on servers and exposing sensitive URLs.

Duplicate Content and URL Parameters

E-commerce sites with filter parameters, session IDs, or tracking codes create infinite duplicate content. Block these parameter-based URLs to prevent crawl budget waste.

Disallow: /*?*

Disallow: /*?sid=

Disallow: /print/

Your technical SEO strategy should address URL parameters through both robots.txt and canonical tags for comprehensive duplicate content management.

Thank You and Confirmation Pages

Post-purchase pages, form confirmation pages, and checkout steps serve no SEO value. Block them to focus crawl budget on money-making content.

Disallow: /checkout/

Disallow: /thank-you/

Disallow: /cart/

These pages don’t rank anyway—they’re one-time use for visitors who’ve already converted. No reason to waste Googlebot’s time.

Search Results and Internal Search Pages

Internal search result pages create infinite crawlable URLs with thin content. Block your site search directories aggressively.

Disallow: /search/

Disallow: /?s=

Disallow: /*?q=

This prevents indexation nightmares where Google crawls thousands of worthless search result pages instead of your actual content.

Staging and Development Sites

If you run staging environments on subdirectories or test areas, block them completely. Nothing tanks credibility faster than searchers finding your broken beta version.

Disallow: /staging/

Disallow: /dev/

Disallow: /test/

Better yet, use password protection on staging sites so robots.txt isn’t your only defense.

PDF Files and Documents (Selectively)

Block PDFs that duplicate your web content or contain outdated information. But allow unique, valuable PDFs that serve as resources.

Disallow: /*.pdf$

This is judgment call territory. The pattern above blocks every URL ending in .pdf, so swap it for specific files or directories if some PDFs deserve to rank. Others just create confusion when they outrank your main pages.

What Should You Allow in Robots.txt?

JavaScript and CSS Files

Never block your CSS and JavaScript. Google needs these files to render your pages properly and understand user experience signals.

Historically, some SEOs blocked /wp-includes/ on WordPress sites, accidentally blocking critical rendering resources. Google explicitly warns against this in their JavaScript SEO guide.

Allow: /wp-includes/js/

Allow: /wp-includes/css/

Your technical SEO implementation must ensure crawlers can fully render your pages—and that requires access to styling and scripts.

Images (Usually)

Allow images unless you specifically don’t want them indexed. Google Image Search drives substantial traffic for visual content and e-commerce.

Allow: /wp-content/uploads/

Blocking images made sense in 2010 when image bandwidth mattered. In 2025, image search is a traffic goldmine for the right businesses.

Public-Facing Content

Anything you want to rank should obviously be allowed. This includes blog posts, product pages, service pages, and landing pages.

You don’t need explicit Allow directives for most content—if it’s not disallowed, it’s allowed by default. But strategic Allow statements can override previous Disallow rules for specific subdirectories.

Robots.txt Examples for WordPress

Basic WordPress Robots.txt

User-agent: *

Disallow: /wp-admin/

Disallow: /wp-login.php

Allow: /wp-admin/admin-ajax.php

Sitemap: https://yoursite.com/sitemap.xml

This configuration blocks admin access while allowing AJAX calls that power WordPress functionality. The sitemap reference helps crawlers find your XML sitemap instantly.

E-commerce WordPress Robots.txt

User-agent: *

Disallow: /cart/

Disallow: /checkout/

Disallow: /my-account/

Disallow: /*?*

Allow: /wp-content/uploads/

Sitemap: https://yoursite.com/sitemap.xml

WooCommerce stores need to block cart and checkout pages while allowing product images. Parameter blocking prevents filter combinations from creating duplicate content chaos.

Media/Blog WordPress Robots.txt

User-agent: *

Disallow: /wp-admin/

Disallow: /trackback/

Disallow: /xmlrpc.php

Allow: /wp-content/uploads/

User-agent: Mediapartners-Google

Allow: /

Sitemap: https://yoursite.com/sitemap.xml

Media sites should allow all images while blocking trackback spam URLs. The Mediapartners-Google agent allows AdSense crawling for ad optimization.

How to Write Robots.txt File Correctly

Start with User-Agent Declaration

Every robots.txt file needs a user-agent line before directives. Without it, crawlers don’t know who you’re talking to.

User-agent: *

Multiple user-agent blocks let you set different rules for different bots. Google might get full access while aggressive crawlers get restricted.

Use Wildcards Strategically

The asterisk * wildcard matches any sequence of characters. It’s powerful but dangerous—use it carefully to avoid accidentally blocking important content.

Disallow: /*?

This blocks all URLs with query parameters. Great for parameter pollution, catastrophic if you use parameters for legitimate page variations.
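Before deploying a wildcard rule, it helps to see which paths it actually catches. The sketch below translates a robots.txt pattern into a regular expression, assuming the common convention that * matches any run of characters and a trailing $ pins the end of the URL. The function names and sample paths are illustrative only.

```python
import re


def robots_pattern_to_regex(pattern: str) -> "re.Pattern[str]":
    """Translate a robots.txt path pattern into a regex anchored at the start."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape the literal pieces and join them with '.*' where '*' appeared.
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def pattern_matches(pattern: str, path: str) -> bool:
    """Return True if the rule pattern applies to the URL path."""
    return robots_pattern_to_regex(pattern).match(path) is not None


if __name__ == "__main__":
    for path in ("/shop?color=red", "/shop/shoes", "/docs/guide.pdf"):
        print(path, pattern_matches("/*?", path), pattern_matches("/*.pdf$", path))
```

Running your real URL list through a check like this shows quickly whether a parameter rule also catches pages you care about.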

Always Include Your Sitemap

Adding your XML sitemap URL helps search engines discover your content structure immediately.

Sitemap: https://yoursite.com/sitemap.xml

Multiple sitemaps? List them all. More information for crawlers means better understanding of your site architecture. This connects directly to your technical SEO fundamentals around discoverability.
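One quick way to confirm your Sitemap lines are readable is to parse the file and list what a crawler would find. This sketch uses site_maps() from Python’s urllib.robotparser (available since Python 3.8). The second sitemap URL is a hypothetical example.

```python
from urllib.robotparser import RobotFileParser

# news-sitemap.xml is an invented second sitemap for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/

Sitemap: https://yoursite.com/sitemap.xml
Sitemap: https://yoursite.com/news-sitemap.xml
"""


def list_sitemaps(robots_txt: str) -> list:
    """Return every Sitemap URL declared in the robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # site_maps() returns None when no Sitemap line is present.
    return parser.site_maps() or []


if __name__ == "__main__":
    for sitemap in list_sitemaps(ROBOTS_TXT):
        print(sitemap)
```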

Test Before Going Live

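Google retired the standalone robots.txt tester from Search Console. Its replacement, the robots.txt report, shows the file Google last fetched for your site along with any errors or warnings. Check it after every deployment, and validate draft rules with a parser or crawler before they go live.

1. Go to Google Search Console

2. Select your property

3. Open Settings, then the robots.txt report

4. Review the fetched file for errors and warnings

5. Check specific URLs with the URL Inspection tool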

A single typo can block your entire site. Test obsessively.

Common Robots.txt Mistakes That Kill Rankings

Blocking Important Pages

The #1 disaster: accidentally blocking pages you want indexed. This usually happens with overly broad wildcards or inherited rules you didn’t understand.

A 2024 Ahrefs study found that 23% of sites accidentally block some valuable content through robots.txt errors. That’s nearly one in four sites sabotaging their own SEO.

Check Search Console’s Page indexing report (formerly Coverage) regularly. Blocked pages are listed there with the reason they were excluded.

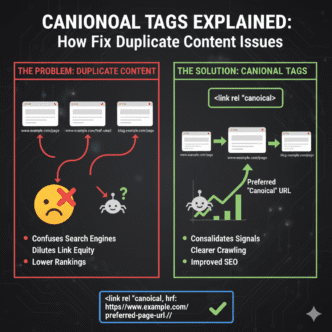

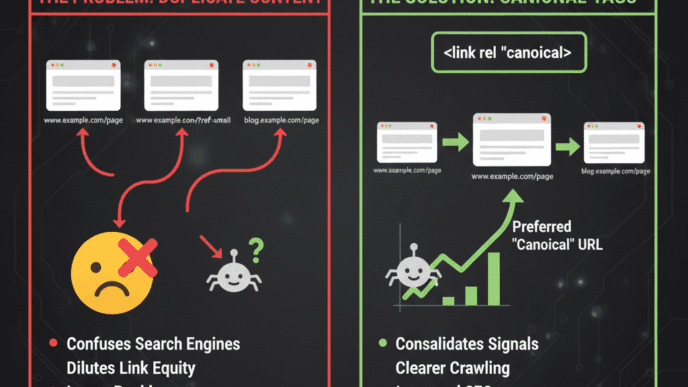

Confusing Robots.txt with Noindex

Robots.txt blocks crawling; noindex meta tags block indexing. They’re not interchangeable, and mixing them up causes major problems.

If you block a page in robots.txt, Google can’t crawl it to see the noindex tag—meaning the URL might still appear in search results with no description. Use noindex tags for pages you want crawled but not indexed, explained thoroughly in your technical SEO guide.

Using Robots.txt for Security

Robots.txt is public. Anyone can read it. Putting sensitive directories in your robots.txt file literally broadcasts “here’s where the good stuff is hidden.”

Use actual password protection, server-level restrictions, or proper authentication for sensitive content. Robots.txt is for crawl management, not security.

Pro Tip: Attackers actively scan robots.txt files looking for hidden directories. If you disallow /admin-secret-area/, you’ve just told hackers exactly where to probe.

Forgetting About Subdirectories

Rules match by prefix, so the trailing slash matters. /blog/ blocks the blog directory and everything under it; /blog blocks every URL path starting with /blog (including /blogpost/ or /blogs/).

Disallow: /blog/ # Blocks /blog/ and everything under it

Disallow: /blog # Blocks /blog, /blogs, /blogpost, everything

This subtle difference has nuked entire website sections when SEOs got sloppy with syntax.
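The trailing-slash behavior comes down to prefix matching, which a few lines of Python can illustrate. This is a deliberately simplified sketch (no wildcards, no Allow rules), and the sample paths are invented.

```python
def is_blocked(disallow_path: str, url_path: str) -> bool:
    """A Disallow rule without wildcards blocks any path that starts with it."""
    return url_path.startswith(disallow_path)


if __name__ == "__main__":
    paths = ["/blog", "/blog/post-1", "/blogpost/", "/blogs/"]
    for rule in ("/blog/", "/blog"):
        blocked = [p for p in paths if is_blocked(rule, p)]
        print(f"Disallow: {rule:<7} blocks {blocked}")
```

With the slash, only URLs inside the directory match. Without it, anything that merely begins with the same characters is caught too.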

Not Updating After Site Changes

Your robots.txt file should evolve with your site. Launch a new product category? Check if it’s crawlable. Migrate to HTTPS? Update your sitemap URLs.

According to Search Engine Land’s 2024 survey, 56% of sites never update their robots.txt after initial setup—even after major redesigns or platform migrations.

Advanced Robots.txt Techniques

Crawl-Delay Directive

Some aggressive bots hammer servers with rapid requests. The Crawl-delay directive tells them to slow down between page requests.

User-agent: *

Crawl-delay: 10

Google ignores Crawl-delay and sets its own crawl rate based on how your server responds, but Bing and other engines respect it. Use it sparingly, since too high a delay means slow indexing.
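If you run your own crawler or audit script, honoring the directive might look like the sketch below. It reads the delay with crawl_delay() from Python’s urllib.robotparser and falls back to a default when none is set. The helper name and the fallback value are assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Crawl-delay: 10
Disallow: /search/
"""


def delay_for(robots_txt: str, user_agent: str, default: float = 0.0) -> float:
    """Return the seconds a polite bot should wait between requests."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    delay = parser.crawl_delay(user_agent)  # None when no Crawl-delay applies
    return float(delay) if delay is not None else default


if __name__ == "__main__":
    # A real crawler would call time.sleep(wait) between page requests.
    wait = delay_for(ROBOTS_TXT, "Bingbot")
    print(f"Bingbot should wait {wait} seconds between requests")
```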

Blocking Specific Bots

Some bots provide no value and just burn resources. You can block them individually while allowing legitimate crawlers.

User-agent: BadBot

Disallow: /

User-agent: Googlebot

Allow: /

This nuclear option blocks “BadBot” entirely while ensuring Google has full access. It only helps against bots that actually honor robots.txt; scrapers that ignore the file need server-level blocking.

Dynamic Robots.txt Generation

Large sites with complex needs often generate robots.txt files dynamically through server-side scripts. This allows conditional rules based on user agents, geolocation, or current crawl patterns.

Platforms like Shopify generate a default robots.txt automatically, and many enterprise CMSs build the file from templates or configuration instead of serving a static file.
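As a rough illustration of dynamic generation, the sketch below builds the file from a deployment setting, not from user agents or geolocation. The function name, the environment values, and the specific rules are all assumptions. A real setup would serve the returned text at /robots.txt from your web framework.

```python
def build_robots_txt(environment: str, sitemap_url: str) -> str:
    """Return robots.txt text for the given deployment environment."""
    if environment != "production":
        # Keep every well-behaved crawler out of staging and dev copies.
        return "User-agent: *\nDisallow: /\n"
    lines = [
        "User-agent: *",
        "Disallow: /wp-admin/",
        "Allow: /wp-admin/admin-ajax.php",
        "",
        f"Sitemap: {sitemap_url}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(build_robots_txt("staging", "https://yoursite.com/sitemap.xml"))
    print(build_robots_txt("production", "https://yoursite.com/sitemap.xml"))
```

Generating the file this way means a staging copy can never ship with production rules by accident, though password protection is still the stronger safeguard.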

Robots.txt vs Meta Robots Tags

When to Use Robots.txt

Use robots.txt to prevent crawling entirely. Perfect for pages that waste crawl budget or contain no unique value—admin areas, search results, filter variations.

Robots.txt works at the site level, providing broad directives that apply to entire directories or URL patterns. It’s efficient for blocking large sections with simple rules.

When to Use Meta Robots Tags

Use meta robots tags when you want crawlers to access pages but not index them. Good for pages that need to pass PageRank through links but shouldn’t appear in search results.

<meta name="robots" content="noindex, follow">

Meta tags work at the page level, giving precise control over individual URLs. Essential for nuanced SEO strategies that robots.txt can’t handle.
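To audit which directives a page actually carries, you can parse its HTML and read the robots meta tag. This sketch uses Python’s built-in html.parser. The class and function names are illustrative, and it ignores X-Robots-Tag headers and bot-specific meta names such as googlebot.

```python
from html.parser import HTMLParser


class MetaRobotsParser(HTMLParser):
    """Collect the directives from every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() == "robots":
            content = attr.get("content") or ""
            self.directives.update(
                part.strip().lower() for part in content.split(",") if part.strip()
            )


def robots_directives(html: str) -> set:
    """Return the set of meta robots directives found in the HTML."""
    parser = MetaRobotsParser()
    parser.feed(html)
    return parser.directives


def is_indexable(html: str) -> bool:
    """A page is indexable unless it declares noindex."""
    return "noindex" not in robots_directives(html)


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
    print(sorted(robots_directives(page)), is_indexable(page))
```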

Can You Use Both?

Yes, but be strategic. Blocking with robots.txt prevents crawlers from seeing your noindex tag, which creates the “Indexed, though blocked by robots.txt” problem where Google shows the URL anyway.

If you need both crawl control and index control, use noindex tags and remove robots.txt blocks. Let crawlers access the page, read the noindex tag, and move on. Your technical SEO fundamentals should cover this distinction clearly.

How to Check If Your Robots.txt Is Working

Google Search Console Testing

Search Console’s robots.txt report, which replaced the older robots.txt tester, shows the file Googlebot last fetched and flags any rules it could not parse.

Test your most important URLs individually with the URL Inspection tool. If your homepage is blocked, you’ll see it immediately. If your product pages accidentally match a wildcard, the inspection result shows that crawling is blocked by robots.txt.

Manual Testing

Visit yoursite.com/robots.txt in a browser. If you see your rules, the file is accessible. If you get a 404 error, the file isn’t where it should be.

Check that the file is plain text UTF-8 encoded without BOM. Microsoft Word documents saved as .txt files cause parsing errors—use a proper text editor.
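The encoding check can be scripted. The sketch below inspects the raw bytes of a robots.txt file for a byte order mark and for invalid UTF-8. The function name and the problem messages are made up for illustration.

```python
import codecs
from typing import List


def check_robots_bytes(raw: bytes) -> List[str]:
    """Return a list of encoding problems found in raw robots.txt bytes."""
    problems = []
    if raw.startswith(codecs.BOM_UTF8):
        problems.append("starts with a UTF-8 byte order mark")
    try:
        raw.decode("utf-8")
    except UnicodeDecodeError:
        problems.append("not valid UTF-8")
    return problems


if __name__ == "__main__":
    clean = b"User-agent: *\nDisallow: /wp-admin/\n"
    print(check_robots_bytes(clean))
    print(check_robots_bytes(codecs.BOM_UTF8 + clean))
```

To check a live file, read it in binary mode (for example, open("robots.txt", "rb").read()) so the bytes reach the function untouched.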

Coverage Report Monitoring

Search Console’s Page indexing report (formerly Coverage) lists pages blocked by robots.txt among the pages that aren’t indexed, with the reason “Blocked by robots.txt.”

Review this regularly. If important pages appear here, you’ve got a problem. If only junk appears, your configuration is working perfectly.

Robots.txt and AI Crawler Management

The New Bot Landscape

2024 brought an explosion of AI training bots from OpenAI, Anthropic, Google’s Gemini, and dozens of others. These crawlers consume massive bandwidth scraping content for language model training.

Some respect robots.txt; others ignore it entirely. The landscape changes weekly as new AI companies launch crawlers without warning.

Blocking AI Bots Selectively

You can block specific AI crawlers while allowing traditional search bots:

User-agent: GPTBot

Disallow: /

User-agent: CCBot

Disallow: /

User-agent: Googlebot

Allow: /

This strategy blocks OpenAI’s GPTBot and Common Crawl while keeping Google access. Note that new AI bots appear constantly—staying current requires monitoring.
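Because the list of AI crawlers keeps changing, it can help to generate these groups from a list you maintain and verify the result. The sketch below does that with Python’s urllib.robotparser. Only GPTBot and CCBot come from the example above, and any tokens you add should be taken from each vendor’s own documentation.

```python
from urllib.robotparser import RobotFileParser

# Maintained by hand; extend as new crawlers publish their user-agent tokens.
AI_TRAINING_BOTS = ["GPTBot", "CCBot"]


def build_block_rules(agents) -> str:
    """Emit one 'Disallow: /' group per user-agent token."""
    groups = [f"User-agent: {agent}\nDisallow: /" for agent in agents]
    return "\n\n".join(groups) + "\n"


def may_crawl(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check the generated rules the way a compliant bot would."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    rules = build_block_rules(AI_TRAINING_BOTS)
    print(rules)
    for bot in AI_TRAINING_BOTS + ["Googlebot"]:
        print(bot, may_crawl(rules, bot, "https://yoursite.com/blog/"))
```

Bots with no matching group are allowed by default, so Googlebot keeps access without an explicit Allow line.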

The SEO Implications

Blocking AI crawlers might protect your content from training data scraping, but it could also exclude you from AI-powered search features. Google’s AI Overviews, Bing’s Copilot, and other AI search tools need content access to function.

This is evolving territory. Most SEOs currently allow AI crawlers from major search engines (Google, Bing) while blocking third-party AI trainers. The strategy will mature as AI search becomes more important.

Real-World Robots.txt Case Study

A mid-sized SaaS company came to me with mysterious traffic drops. Organic visits fell 40% over three months despite no algorithm updates or penalties.

The culprit? A developer had “cleaned up” their robots.txt file, adding a wildcard rule to block URL parameters:

Disallow: /*?*

This seemed smart—it blocked filter combinations and tracking parameters. But their entire knowledge base used ?article=123 parameters for article IDs. The new rule blocked 847 help articles from crawling.

We revised the robots.txt to block specific parameters while allowing article IDs:

Allow: /*?article=

Disallow: /*?*

Within six weeks, blocked articles reindexed and traffic recovered to previous levels. A single added Allow line restored the 40% of organic traffic they had lost.

Lesson: Test every robots.txt change against your actual URL structure. What seems logically sound can be practically catastrophic if you don’t understand how your site generates URLs.
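A change like this can be checked against sample URLs before it ships. The sketch below assumes the precedence that Google documents and RFC 9309 describes: the longest matching pattern wins, and Allow wins a tie. The URL paths are invented stand-ins for the knowledge base and tracking URLs in the story.

```python
import re


def _to_regex(pattern: str) -> "re.Pattern[str]":
    """Translate '*' and a trailing '$' into a start-anchored regex."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_allowed(rules, path: str) -> bool:
    """rules is a list of ("allow" | "disallow", pattern) pairs."""
    best = None  # (pattern length, is_allow); tuples compare left to right
    for kind, pattern in rules:
        if pattern and _to_regex(pattern).match(path):
            candidate = (len(pattern), kind == "allow")
            if best is None or candidate > best:
                best = candidate
    # No matching rule means the path is crawlable.
    return True if best is None else best[1]


BEFORE = [("disallow", "/*?*")]
AFTER = [("allow", "/*?article="), ("disallow", "/*?*")]

if __name__ == "__main__":
    for path in ("/help?article=123", "/pricing?utm_source=ad", "/pricing"):
        print(path, "before:", is_allowed(BEFORE, path), "after:", is_allowed(AFTER, path))
```

The longer Allow pattern outranks the broad Disallow for article URLs, while other parameter URLs stay blocked.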

Robots.txt Best Practices Checklist

Here’s your implementation checklist for bulletproof robots.txt SEO:

- Place robots.txt in your root directory (yoursite.com/robots.txt)

- Start with user-agent declarations before any directives

- Block admin areas, login pages, and backend functionality

- Block duplicate content, URL parameters, and search result pages

- Allow CSS, JavaScript, and rendering resources

- Allow images unless specifically undesirable

- Include XML sitemap URL for faster discovery

- Use wildcards carefully—test extensively before deployment

- Never use robots.txt for security or sensitive content

- Test in Google Search Console before going live

- Monitor Coverage reports for blocked important pages

- Update robots.txt when site structure changes

- Consider AI crawler management strategy

- Document why each rule exists for future reference

Tools for Managing and Testing Robots.txt

Google Search Console

The essential free tool for checking how Google reads your robots.txt. The robots.txt report shows the file Googlebot last fetched and flags syntax errors and warnings.

Access it under Settings > robots.txt. The older robots.txt Tester that lived under Legacy Tools & Reports has been retired. Check the report after every deployment.

Screaming Frog SEO Spider

This desktop crawler simulates search engine behavior, showing which pages your robots.txt blocks during site audits. The free version handles up to 500 URLs; paid version is unlimited.

Run Screaming Frog against your staging site before launch to catch robots.txt conflicts with your sitemap or internal linking structure. It integrates beautifully with your broader technical SEO workflows.

Robots.txt Generators

WordPress plugins like Yoast SEO and Rank Math automatically generate and manage robots.txt files. They provide user-friendly interfaces for common rules without requiring manual syntax writing.

For simple sites, these work great. For complex sites with nuanced needs, you’ll eventually need to customize beyond what generators offer.

Comparison: Robots.txt Management Tools

| Tool | Best For | Price | Key Feature |
|---|---|---|---|
| Google Search Console | Testing & validation | Free | Official Googlebot testing |
| Screaming Frog | Site audits | Free/£149/year | Full crawl simulation |
| Yoast SEO | WordPress automation | Free/$99/year | Visual rule builder |
| Netpeak Spider | Enterprise audits | $20/month | Bulk URL testing |

Robots.txt Template for Different Site Types

Small Business Website

User-agent: *

Disallow: /wp-admin/

Disallow: /wp-login.php

Allow: /wp-admin/admin-ajax.php

Sitemap: https://yoursite.com/sitemap.xml

Basic protection for WordPress installations without complex requirements.

E-commerce Store

User-agent: *

Disallow: /cart/

Disallow: /checkout/

Disallow: /my-account/

Disallow: /*?*

Allow: /*?product=

Allow: /wp-content/uploads/

Sitemap: https://yoursite.com/sitemap.xml

Blocks transactional pages while allowing product parameters and image crawling.

News/Media Website

User-agent: *

Disallow: /wp-admin/

Disallow: /author-admin/

Disallow: /search/

Allow: /wp-content/uploads/

User-agent: Mediapartners-Google

Allow: /

Sitemap: https://yoursite.com/sitemap.xml

Prioritizes image access for visual content while allowing Google AdSense optimization.

Monitoring Robots.txt Performance

Coverage Report Analysis

Check your Search Console Coverage report monthly. Look for unexpected pages in the “Blocked by robots.txt” section.

If blog posts or product pages appear here, investigate immediately. If only admin pages and search results appear, your configuration works as intended.

Crawl Stats Review

Search Console’s Crawl Stats show daily crawl requests, bytes downloaded, and response times. Effective robots.txt should reduce crawl requests on blocked sections while maintaining healthy crawling of important content.

If crawl requests spike on blocked directories, either your robots.txt isn’t working or bots are ignoring it. Time to investigate.

Log File Analysis

Server logs reveal which bots accessed which pages regardless of robots.txt instructions. Compare log data against your robots.txt rules to identify non-compliant crawlers.

Log analysis is advanced but powerful—it’s the only way to see what bad bots are actually doing versus what they should be doing per your robots.txt directives.
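A first pass at this comparison can be scripted. The sketch below counts requests to disallowed paths per user agent, assuming logs in the common "combined" format. The sample log lines, bot names, and blocked prefixes are invented for illustration.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer, and user-agent fields
# of a combined-format access log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def disallowed_hits(log_lines, disallowed_prefixes) -> Counter:
    """Count requests per user agent that hit a disallowed path prefix."""
    prefixes = tuple(disallowed_prefixes)
    hits = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if match and match.group("path").startswith(prefixes):
            hits[match.group("agent")] += 1
    return hits


SAMPLE_LOG = [
    '203.0.113.5 - - [10/Mar/2025:10:00:00 +0000] "GET /wp-admin/ HTTP/1.1" 200 512 "-" "BadBot/1.0"',
    '203.0.113.5 - - [10/Mar/2025:10:00:01 +0000] "GET /search/?q=shoes HTTP/1.1" 200 2048 "-" "BadBot/1.0"',
    '198.51.100.7 - - [10/Mar/2025:10:00:02 +0000] "GET /blog/post HTTP/1.1" 200 4096 "-" "Googlebot/2.1"',
]

if __name__ == "__main__":
    for agent, count in disallowed_hits(SAMPLE_LOG, ["/wp-admin/", "/search/"]).most_common():
        print(f"{agent}: {count} requests to disallowed paths")
```

Agents that show up here are candidates for server-level blocking, since they are already ignoring the file.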

FAQ: Robots.txt SEO

Where should the robots.txt file be located on my website?

The robots.txt file must be in your root directory at yoursite.com/robots.txt. It cannot live in a subdirectory or be renamed, and it only applies to the host it is served from, so each subdomain (for example, blog.yoursite.com) needs its own file. Search engines look for it specifically at the root level, and if it’s not there, they assume all content is crawlable. For HTTPS sites, the file should be accessible via the HTTPS version of your domain.

Can robots.txt completely prevent pages from appearing in search results?

No, robots.txt only prevents crawling, not indexing. If other sites link to a blocked page, Google may still index it (without description) based on those external signals. To prevent indexation, use noindex meta tags or X-Robots-Tag HTTP headers instead. Robots.txt is for crawl management, not index management—a critical distinction many SEOs miss.

How long does it take for robots.txt changes to take effect?

Google typically recognizes robots.txt changes within hours as Googlebot checks the file regularly. However, previously blocked pages might take days or weeks to get recrawled and indexed. You can speed this up by requesting indexing through Search Console’s URL Inspection tool. For critical changes, test thoroughly before deployment since mistakes can immediately block important content.

What happens if I don’t have a robots.txt file?

Without a robots.txt file, search engines assume everything is crawlable and proceed without restrictions. This isn’t necessarily bad—many sites operate fine without one. However, you lose control over crawl budget allocation and may waste resources on crawlers accessing low-value pages. Most sites benefit from at least a basic robots.txt file blocking admin areas.

Should I block bad bots or scrapers in robots.txt?

You can try, but malicious bots typically ignore robots.txt entirely. Blocking bad bots in robots.txt provides minimal protection while potentially advertising sensitive directories to attackers. Use server-level IP blocking, rate limiting, or Web Application Firewalls for actual bot protection. Robots.txt is only effective against polite, well-behaved crawlers.

Can I use robots.txt to control crawl speed?

The Crawl-delay directive works with some bots (Bing, Yandex) but not Google. Google sets its crawl rate automatically based on how your server responds, and the old crawl rate limiter setting in Search Console has been retired. If server load is an issue, temporarily returning 503 or 429 status codes signals Googlebot to slow down. Don’t rely on Crawl-delay, which has inconsistent support across search engines.

Final Thoughts: Master Your Crawl Destiny

Robots.txt SEO is boring, technical, and easy to mess up—which is exactly why it matters. While competitors obsess over backlinks and content, a solid robots.txt file quietly ensures crawlers focus on your best pages instead of wasting time on junk.

Start simple. Block admin areas, duplicate content, and thank-you pages. Allow CSS, JavaScript, and images. Include your sitemap URL. Test obsessively in Search Console before deployment.

As your site grows, refine your approach. Add wildcard patterns for URL parameters. Block aggressive bots selectively. Monitor Coverage reports for unintended blocks. Update rules when site structure changes.

The sites that rank consistently don’t just have great content; they have technical foundations that make that content discoverable. Your robots.txt file is part of that foundation, working invisibly to make the most of your crawl budget.

Review your robots.txt today. Test it. Fix any issues. Your future organic traffic will thank you—even if no one else notices the work you’ve done. That’s the nature of great technical SEO fundamentals: invisible when done right, catastrophic when done wrong.