When Jeremy Howard proposed the llms.txt standard in September 2024, it promised to revolutionize how AI systems access website content. Fast forward to November 2025, and the verdict is in: llms.txt has failed to gain meaningful adoption, with zero major AI platforms supporting it and mounting evidence suggesting it may never become relevant.

But here’s the twist: while llms.txt languishes in obscurity, a competing standard—Anthropic’s Model Context Protocol (MCP)—has achieved explosive growth and official backing from industry giants. This report examines what went wrong with llms.txt, why MCP is winning, and what SEO professionals should actually focus on for AI visibility in 2025.

The Promise vs. The Reality

What llms.txt Was Supposed to Do

The concept seemed logical: create a simple markdown file at your domain root (yoursite.com/llms.txt) that tells AI systems which content matters most. Jeremy Howard’s aim was to make it easier for LLMs to parse large volumes of website information from a single location, as parsing unstructured HTML is slow and error-prone for models.

Think of it as a curated menu for AI crawlers—instead of letting them wander your entire site, you’d highlight your most important pages in a clean, markdown format.

The llms.txt file would include:

- Brief site description

- Links to key content areas

- Structured navigation for AI systems

- Optional full-text markdown versions
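The layout listed above can be sketched in a few lines of Python. This is a minimal sketch, assuming the structure in Howard's proposal (an H1 title, a blockquote summary, then H2 sections of markdown links). The `Link` class, the `render_llms_txt` function, and the example URLs are hypothetical names introduced here for illustration only.

```python
from dataclasses import dataclass


@dataclass
class Link:
    title: str
    url: str
    note: str = ""


def render_llms_txt(name: str, summary: str, sections: dict) -> str:
    """Render an llms.txt body: H1 title, blockquote summary, H2 link lists."""
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for link in links:
            suffix = f": {link.note}" if link.note else ""
            lines.append(f"- [{link.title}]({link.url}){suffix}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# All names and URLs below are placeholders, not a real site.
example = render_llms_txt(
    "Example Site",
    "A hypothetical site used only to illustrate the file layout.",
    {
        "Docs": [Link("Getting started", "https://yoursite.com/docs/start.md", "setup guide")],
        "Optional": [Link("Changelog", "https://yoursite.com/changelog.md")],
    },
)
print(example)
```

The output is the entire file, which is why the format is cheap to produce and, as the rest of this report argues, easy for platforms to ignore.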

The Harsh Reality: Nobody’s Reading It

Google’s Official Position (June 2025):

Google’s John Mueller stated: “No AI system currently uses llms.txt”, and went further to explain “It’s super-obvious if you look at your server logs. The consumer LLMs/chatbots will fetch your pages—for training and grounding, but none of them fetch the llms.txt file”.

Mueller didn’t stop there. He compared llms.txt to the keywords meta tag, which once let site owners declare what their pages were about and which search engines abandoned years ago because it was unreliable and easy to manipulate.

Google’s Gary Illyes (July 2025):

At the Google Search Central Deep Dive event, Gary Illyes made Google’s position even clearer: “Google doesn’t support LLMs.txt and isn’t planning to”, emphasizing that normal SEO practices are sufficient for ranking in AI Overviews.

The Adoption Numbers: A Statistical Disaster

Top 1,000 Websites Globally

A comprehensive scan of the top 1,000 most visited websites globally found a 0.3% adoption rate as of June 2025—just 3 out of 1,000 websites.

More damning: Zero major consumer platforms like Google, Facebook, Amazon, or mainstream news sites have adopted it.

Total Internet Adoption

According to NerdyData, only 951 domains (a tiny fraction of the web) had published an llms.txt file as of July 2025.

While community-maintained directories claim higher numbers, the pattern is unmistakable: llms.txt has spread quickly among developer tools, AI companies, technical documentation, and SaaS platforms, while remaining almost entirely absent from the mainstream web.

Translation: Adoption is concentrated among technical early adopters, and there is no evidence yet of mainstream businesses seeing real results from it.

Real-World Testing: The Evidence Speaks

Search Engine Land’s 8-Month Experiment

As one of the industry’s leading SEO publications, Search Engine Land conducted a rigorous test of llms.txt from March to October 2025.

The Results:

Search Engine Land implemented llms.txt to see whether it offers any meaningful advantages in AI visibility and traffic, but did not find a correlation between implementing llms.txt and improved performance in AI results.

Their server logs revealed the smoking gun: From mid-August to late October 2025, the llms.txt page received zero visits from Google-Extended bot (Google’s AI crawler), GPTbot (OpenAI’s crawler), PerplexityBot, or ClaudeBot.

While traditional crawlers like Googlebot visited the file occasionally, they didn’t treat the file with any special importance.
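You can run the same check against your own access logs. The sketch below assumes the common Combined Log Format and matches crawler names as substrings of the User-Agent header. The `AI_BOTS` list and the sample log lines are illustrative assumptions, not data from the Search Engine Land test. Google-Extended is left out because Google documents it as a robots.txt control token rather than a distinct user-agent string, so it would not show up in logs under that name.

```python
import re
from collections import Counter

# Substrings to look for in the User-Agent header; an assumed, non-exhaustive list.
AI_BOTS = ("GPTBot", "PerplexityBot", "ClaudeBot")

# Combined Log Format: ip - user [time] "METHOD path proto" status size "referer" "agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+)[^"]*" '
    r'\d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def count_llms_txt_hits(lines):
    """Count requests for /llms.txt per AI crawler, plus an 'other' bucket."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match or match.group("path").split("?")[0] != "/llms.txt":
            continue
        agent = match.group("agent")
        bot = next((name for name in AI_BOTS if name in agent), "other")
        hits[bot] += 1
    return hits


# Made-up sample lines: Googlebot fetches llms.txt, GPTBot fetches a blog post.
sample = [
    '203.0.113.5 - - [01/Sep/2025:10:00:00 +0000] "GET /llms.txt HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.9 - - [01/Sep/2025:10:05:00 +0000] "GET /blog/post HTTP/1.1" 200 9000 "-" '
    '"Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)"',
]
hits = count_llms_txt_hits(sample)
print(dict(hits))
```

In practice you would pass an open log file instead of `sample`. An empty count for every name in `AI_BOTS` over several weeks would match the pattern reported above.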

20,000 Domain Study

A hosting provider managing over 20,000 domains provided perhaps the most comprehensive real-world data. They reported that no mainstream AI agents or bots are accessing LLMs.txt files, except for niche bots like BuiltWith.

Why llms.txt Failed: The Fundamental Flaws

1. The Trust Problem

Mueller’s comparison to the keywords meta tag cuts to the heart of the issue. His argument: “Why trust it when you can verify the site directly?”

AI platforms have learned from search engine history—when you let site owners self-report what’s important, they lie. The keywords meta tag became so abused with spam that Google abandoned it entirely.

2. Technical Redundancy

Mueller pointed out: “If bots already download full web pages and structured data, why should they rely on a separate file?”

AI crawlers are sophisticated enough to parse HTML, extract meaning, and understand site structure. A separate markdown file adds complexity without solving a real problem.

3. Cloaking Risk

llms.txt could be abused by showing AI bots one version of content and everyone else another, which is essentially a backdoor for cloaking.

This security concern alone makes major platforms hesitant to adopt any standard that creates separate content paths.

4. User Experience Issues

If AI systems cite llms.txt files instead of actual web pages, users might land on bare text files rather than properly formatted articles.

5. SEO Tool Confusion

Ironically, some SEO tools have made things worse. SEO tool provider Semrush began flagging missing llms.txt files as site issues, despite the protocol’s lack of functional impact.

This created false urgency around an unproven standard, frustrating marketers who questioned the value.

The Winner: Model Context Protocol (MCP)

While llms.txt withers, a different standard has achieved what Howard’s proposal could not: actual adoption.

What Is MCP?

The Model Context Protocol (MCP) is an open standard for connecting AI assistants to the systems where data lives, including content repositories, business tools, and development environments.

Unlike llms.txt’s static file approach, MCP creates secure, two-way connections between AI systems and external data sources, enabling dynamic interaction rather than static content snapshots.
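To make the contrast concrete, here is a rough sketch of what travels over an MCP connection. MCP messages use the JSON-RPC 2.0 envelope, and `tools/list` and `tools/call` are method names from the specification. The `jsonrpc_request` helper, the `search_articles` tool, and its arguments are hypothetical, and the sketch skips the initialization handshake and the transport layer entirely.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # JSON-RPC requests carry an id so responses can be matched up


def jsonrpc_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope, the message format MCP uses."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# Ask a server which tools it exposes, then invoke one of them.
list_tools = jsonrpc_request("tools/list")
call_tool = jsonrpc_request(
    "tools/call",
    # "search_articles" and its arguments are hypothetical, for illustration only.
    {"name": "search_articles", "arguments": {"query": "llms.txt adoption"}},
)

print(json.dumps(call_tool, indent=2))
```

The point of the comparison: an llms.txt file is fetched once and read, while an MCP client sends requests like these and gets live results back from the server.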

Adoption That Actually Happened

Major Platform Support:

Official adopters include: Claude Desktop, OpenAI, Google DeepMind, Cline, and Cursor AI, with SDKs available in Python, TypeScript, C#, and Java.

The watershed moment came in March 2025 when OpenAI CEO Sam Altman announced that OpenAI will add support for Anthropic’s Model Context Protocol across its products, including the desktop app for ChatGPT.

Ecosystem Growth:

The MCP Registry has grown to close to two thousand entries since its announcement in September 2025, representing 407% growth from the initial batch of servers.

The number of active MCP servers went from just a few experimental ones to thousands within the same timeframe.

Why MCP Succeeded Where llms.txt Failed

1. Solves Real Problems

MCP transforms LLMs from passive readers into active assistants that can interact with tools and data sources in real-time.

2. Industry Backing from Day One

Early adopters like Block and Apollo integrated MCP into their systems, while development tools companies including Zed, Replit, Codeium, and Sourcegraph started working with MCP to enhance their platforms.

3. Open Governance

The success of MCP in the past year is entirely thanks to the broad community that grew around the project – from transports, to security, SDKs, documentation, samples, extensions, and developer tooling.

4. Actual Use Cases

MCP enables AI agents to better retrieve relevant information to further understand the context around a coding task and produce more nuanced and functional code with fewer attempts.

Head-to-Head Comparison: llms.txt vs MCP

| Feature | llms.txt | Model Context Protocol (MCP) |
|---|---|---|
| Launch Date | September 2024 | November 2024 |
| Type | Static markdown file | Dynamic client-server protocol |
| Complexity | Low (just a text file) | High (requires APIs, SDKs) |
| Platform Support | Zero major platforms | OpenAI, Anthropic, Google DeepMind |
| Adoption Rate | 0.3% of top 1,000 sites | 2,000+ servers, thousands of implementations |
| Real-Time Data | No (static snapshot) | Yes (live connections) |
| Security Model | None (text file) | OAuth 2.1, least privilege, PKCE |
| Developer Tools | Basic generators | SDKs in Python, TypeScript, C#, Java |
| Use Case | Content discovery | Full data integration & tool execution |
| Industry Momentum | Dying/stagnant | Explosive growth |
| Google’s Position | Rejected, won’t support | Neutral/watching |
| Best For | Nothing (not adopted) | Enterprise AI integration, coding assistants |
| ROI for SEO | Zero | Indirect (better AI capabilities) |

Winner: MCP, and it’s not even close.

What SEO Professionals Should Do Instead

The Expert Consensus

Based on current evidence and platform behavior, the upside of llms.txt is negligible, the abuse risk is real albeit small, and the main cost is time you could spend on proven levers.

Focus on what actually helps: Improve your LLM visibility through strong SEO, structured data, and mentions from trusted, high-authority sources.

What Actually Drives AI Visibility in 2025

1. Traditional SEO Excellence

Rank well in traditional search: many AI tools pull directly from Google or Bing results.

Action Items:

- Optimize title tags and meta descriptions

- Build high-quality backlinks

- Improve site speed and Core Web Vitals

- Create comprehensive, authoritative content

2. Structured Data Implementation

Use structured data: schema markup helps search and AI systems better understand your content.

Priority Schema Types for AI:

- Article schema (with author, datePublished, dateModified)

- FAQPage schema (direct answers to questions)

- HowTo schema (step-by-step instructions)

- Organization schema (establish authority)

- Person schema (author expertise)
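As an illustration of the first schema type, the sketch below builds Article markup as JSON-LD using the properties named above. The `article_jsonld` helper and all of the values passed to it are hypothetical placeholders. Treat it as a starting point to validate with a structured-data testing tool, not a complete implementation.

```python
import json


def article_jsonld(headline, author_name, published, modified, publisher_name):
    """Return schema.org Article structured data as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": publisher_name},
        "datePublished": published,
        "dateModified": modified,
    }
    return json.dumps(data, indent=2)


markup = article_jsonld(
    "Is llms.txt worth implementing?",
    "Jane Doe",        # hypothetical author
    "2025-11-01",
    "2025-11-15",
    "Example Media",   # hypothetical publisher
)
# The JSON-LD goes inside a script tag in the page's HTML.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```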

3. Authoritative Citations

Get mentioned by trusted, high-authority sources: Wikipedia, major publications, and academic sites tend to show up often in LLM answers.

Strategies:

- Publish original research

- Get quoted in industry publications

- Contribute to authoritative sources

- Build genuine thought leadership

4. Technical SEO Foundation

Strengthen your technical SEO: Make sure your site is crawlable, uses robots.txt properly, and loads cleanly. If LLMs can’t access it, they won’t use it.

Critical Elements:

- Clean, crawlable site architecture

- Proper robots.txt configuration

- XML sitemaps

- Fast loading times

- Mobile optimization
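One quick way to confirm that your robots.txt is not accidentally locking out AI crawlers is to test it with Python's standard-library parser. The rules in `ROBOTS_TXT`, the crawler list, and the URLs are assumptions made up for this sketch. Swap in your own file and the user agents you care about.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: GPTBot is blocked from /private/, everything else is open.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: *
Allow: /
"""

CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot", "Googlebot")


def crawl_access(robots_txt: str, url: str) -> dict:
    """Report whether each crawler may fetch the URL under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in CRAWLERS}


public = crawl_access(ROBOTS_TXT, "https://yoursite.com/blog/post")
private = crawl_access(ROBOTS_TXT, "https://yoursite.com/private/report")
print(public)
print(private)
```

Note that this only checks what your rules say. Whether a given crawler honors them is up to the crawler.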

5. Content Quality & Originality

AI systems increasingly favor content with genuine expertise and unique insights.

What Works:

- First-hand experience and case studies

- Original data and research

- Expert analysis and commentary

- Multimedia content (images, videos, infographics)

- Clear, quotable answers to common questions

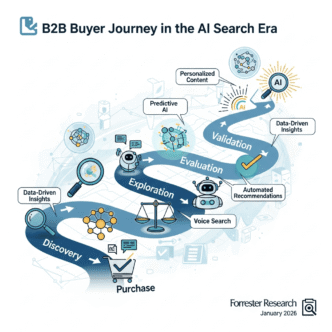

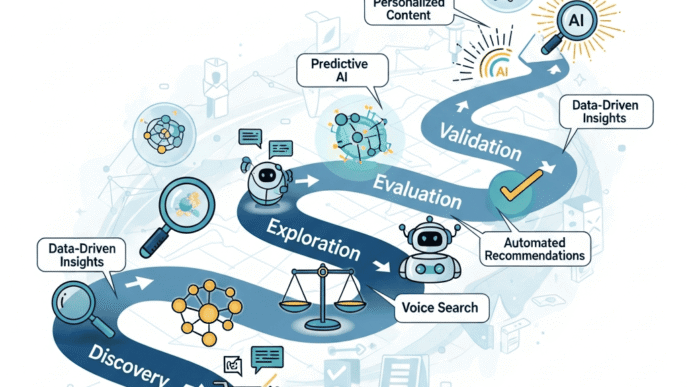

GEO: The Real Future of AI Optimization

Forget llms.txt—the industry is moving toward Generative Engine Optimization (GEO), which focuses on optimizing content specifically for AI-generated responses.

GEO Best Practices:

1. Quotable Content

- Write clear, concise statements

- Use specific statistics and data points

- Create memorable frameworks and concepts

2. Citation-Worthy Structure

- Lead with direct answers

- Support claims with sources

- Use proper attribution

3. Context-Rich Content

- Explain not just “what” but “why”

- Provide background and context

- Address related questions

4. Multimedia Integration

- Original images and graphics

- Data visualizations

- Video explanations

- Infographics

5. E-E-A-T Signals

- Demonstrate Experience (first-hand knowledge)

- Show Expertise (credentials, depth)

- Build Authoritativeness (citations, mentions)

- Establish Trust (transparency, accuracy)

Should You Still Create llms.txt?

The Case FOR (Weak Arguments)

1. Minimal Time Investment: It takes only 5-10 minutes to create a basic file.

2. Future-Proofing? Maybe platforms will eventually adopt it… but all evidence suggests they won’t.

3. PR Signal: It shows you’re “AI-conscious” for marketing purposes.

The Case AGAINST (Strong Arguments)

1. Zero Platforms Using It: No major LLM provider currently supports llms.txt. Not OpenAI. Not Anthropic. Not Google.

2. Indexation Issues: Other sites might link to the file, so it could end up indexed and shown to users as a bare text page.

Google’s recommendation if you do create it: Using noindex for it could make sense, for exactly that reason.

3. Opportunity Cost: Every minute spent on llms.txt is a minute not spent on strategies that actually work.

4. Industry Momentum Against It: By late 2025 or early 2026, it may become clear this experiment failed.
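If you publish the file anyway, the noindex suggestion under item 2 has to be applied as an HTTP response header, because a text file has no HTML head to hold a robots meta tag. The sketch below shows only the header logic. The `response_headers` function is a hypothetical helper, and how you wire it into your server or CDN configuration will differ by stack.

```python
def response_headers(path: str) -> dict:
    """Pick response headers for a path; llms.txt is served but kept out of the index."""
    headers = {"Content-Type": "text/html; charset=utf-8"}
    if path == "/llms.txt":
        headers["Content-Type"] = "text/plain; charset=utf-8"
        # X-Robots-Tag is the HTTP-header form of the noindex directive.
        headers["X-Robots-Tag"] = "noindex"
    return headers


print(response_headers("/llms.txt"))
print(response_headers("/blog/post"))
```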

Our Recommendation: Skip It

For established sites: Don’t waste time on llms.txt. Focus on proven SEO and content strategies.

For new sites: Definitely don’t create it. You need strategies with proven ROI.

For enterprise sites: If you have engineering resources, explore MCP for actual AI integration needs—but only if you have specific use cases that require real-time data connections to AI systems.

The Broader Lesson: AI Hype vs. AI Reality

The llms.txt saga offers valuable lessons for SEO professionals navigating the AI era:

1. Wait for Platform Confirmation

Don’t implement new “standards” until major platforms officially support them. The SEO industry has a history of chasing shiny objects that never materialize.

2. Focus on Fundamentals

No major AI search system has confirmed LLMs.txt support. Expect zero lift in rankings, citations, or traffic until a platform publicly documents usage.

Meanwhile, traditional SEO best practices—quality content, structured data, authoritative backlinks—continue to work.

3. Beware Tool-Driven Hype

Tool incentives created a loop in which audits check for the file’s presence rather than its importance. Some audits flag a missing llms.txt as a “risk,” which reinforces perceived necessity without platform confirmation.

Always verify whether a “best practice” is actually proven or just marketing from tool vendors.

4. Measure Everything

Track AI answer share of voice: pick 50-100 target queries, log weekly presence, citation rates, and landing pages in Bing Copilot, Perplexity, and Google’s AI experiences.

If you can’t measure it, you can’t manage it.
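The tracking routine described above fits in a small script once you log each weekly check as a row. This is a minimal sketch under our own assumptions: the `Observation` fields, the week labels, and the sample rows are invented for illustration, and how you collect the observations (manually or with a tool) is left open.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Observation:
    week: str        # ISO week label, e.g. "2025-W45"
    platform: str    # e.g. "Perplexity"
    query: str
    mentioned: bool  # brand appears in the AI answer
    cited: bool      # answer links to one of your pages


def weekly_share_of_voice(observations):
    """Return {(week, platform): (presence_rate, citation_rate)}."""
    buckets = defaultdict(list)
    for obs in observations:
        buckets[(obs.week, obs.platform)].append(obs)
    return {
        key: (
            sum(o.mentioned for o in group) / len(group),
            sum(o.cited for o in group) / len(group),
        )
        for key, group in buckets.items()
    }


# Invented sample rows; a real log would cover your 50-100 target queries.
log = [
    Observation("2025-W45", "Perplexity", "what is llms.txt", True, True),
    Observation("2025-W45", "Perplexity", "llms.txt vs mcp", True, False),
    Observation("2025-W45", "Perplexity", "geo best practices", False, False),
    Observation("2025-W45", "Bing Copilot", "what is llms.txt", False, False),
]
report = weekly_share_of_voice(log)
for (week, platform), (presence, citations) in sorted(report.items()):
    print(f"{week} {platform}: presence {presence:.0%}, citations {citations:.0%}")
```

Comparing these rates week over week is what tells you whether a change, llms.txt or otherwise, moved anything.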

What’s Next for AI & SEO?

Short-Term (2025-2026)

- AI Overviews expansion: Google continues rolling out AI-generated answers

- Citation transparency: Pressure on AI platforms to show sources

- Content authenticity: Increased value on genuine human expertise

- GEO maturation: Industry develops proven AI optimization strategies

Medium-Term (2026-2027)

- MCP ecosystem growth: More enterprise adoption for AI integrations

- New optimization signals: AI platforms reveal what influences their outputs

- Attribution models: Better understanding of AI traffic vs. traditional search

- Quality over quantity: AI rewards depth and expertise, punishes thin content

Long-Term (2027+)

- Agent-based search: AI assistants become primary interface for information

- Direct partnerships: Publishers negotiate directly with AI platforms

- New monetization: Business models evolve beyond traditional ads

- Content verification: Systems to prove human authorship and expertise

Final Verdict: The Brutal Truth

llms.txt is dead—or more accurately, it was never truly alive.

Roughly 14 months after its September 2024 launch:

- ❌ Zero major AI platforms use it

- ❌ 0.3% adoption among top sites

- ❌ Google explicitly rejected it

- ❌ Real-world tests show zero benefit

- ❌ Industry moved to MCP for actual AI integration

Meanwhile, MCP has won the battle for AI integration standardization:

- ✅ Official support from OpenAI, Anthropic, Google DeepMind

- ✅ 2,000+ servers in the registry

- ✅ Thousands of active implementations

- ✅ Real use cases driving real value

- ✅ Strong community and governance

For SEO professionals, the message is clear: ignore llms.txt entirely and focus on:

- Traditional SEO excellence

- Structured data implementation

- Building genuine authority and citations

- Creating expert, experience-driven content

- Measuring AI visibility across platforms

The AI optimization game isn’t won with .txt files—it’s won by being genuinely excellent at what you do, demonstrating real expertise, and earning citations from authoritative sources.

Save your time. Build your authority. Let llms.txt die quietly.

Key Takeaways

✅ DO THIS:

- Implement comprehensive structured data (Schema.org)

- Build topical authority through pillar-cluster content

- Get cited by high-authority sources

- Create quotable, data-rich content

- Track AI visibility across platforms (Perplexity, ChatGPT, Claude)

- Focus on E-E-A-T signals

- Optimize for traditional search (AI pulls from search results)

❌ DON’T WASTE TIME ON:

- Creating llms.txt files (nobody uses them)

- Following unproven “AI optimization” tactics

- Implementing standards without platform support

- Trusting SEO tool warnings about llms.txt

- Chasing every new AI trend without validation

🎯 WATCH CLOSELY:

- MCP adoption (if building API-driven products)

- Official AI platform guidelines when released

- GEO best practices as they emerge

- AI search market share growth