Ever searched for “can you get medicine for someone pharmacy” and gotten results about giving medicine to someone instead of picking up their prescription? Before October 2019, Google struggled with these nuanced queries too.

Then came the BERT algorithm, and suddenly Google understood context far better than before.

If you’re a blogger scratching your head wondering why your perfectly keyword-optimized content stopped ranking, or why that conversational piece you wrote on a whim is suddenly crushing it, BERT is probably the reason.

Here’s everything you need to know about Google BERT, why it matters more than you think, and how to optimize without losing your mind (or your natural writing voice).

What Is the BERT Algorithm? (The Non-Technical Explanation)

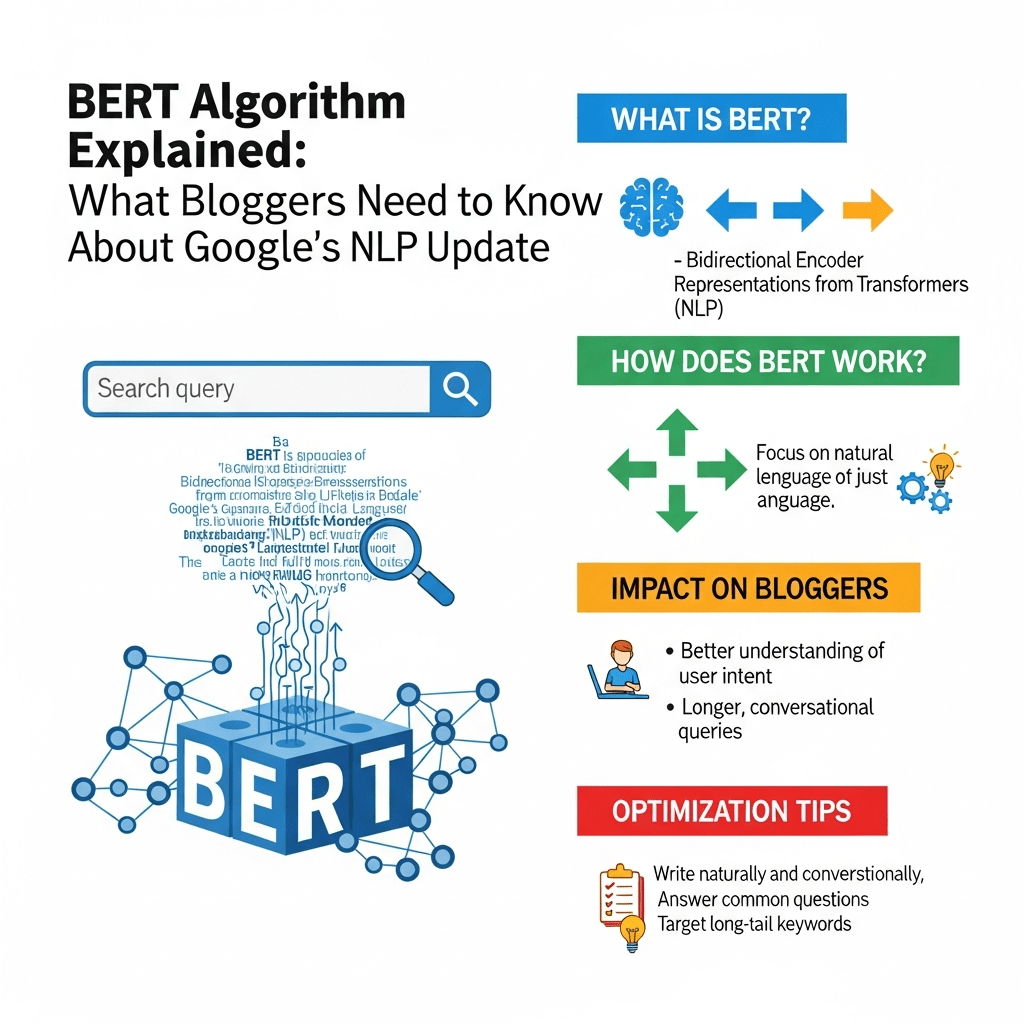

BERT stands for Bidirectional Encoder Representations from Transformers. But let’s be real—that technical jargon doesn’t help anyone.

Here’s what you actually need to know: BERT is Google’s neural network-based technique for natural language processing. It helps search engines understand the full context of a word by looking at all the surrounding words at once, both before and after it.

Think of it like this: Old Google read sentences one word at a time, left to right, like a robot learning English. BERT reads the entire sentence at once, understanding how every word relates to every other word, just like a human does.

The Famous Example Everyone Uses:

Query: “2019 brazil traveler to usa need a visa”

Pre-BERT: Google focused on “Brazil” + “USA” + “visa” and showed results about Americans traveling to Brazil.

Post-BERT: Google understands “traveler to USA” means the direction matters. It shows results about Brazilians traveling to America.

That tiny word “to” completely changed the meaning. Pre-BERT Google missed it. BERT caught it.
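To see why one small word can get lost, here is a minimal Python sketch of keyword-style matching. It is a toy illustration, not how Google's systems actually work; the reversed query and the `bag_of_words` helper are hypothetical, made up for this example.

```python
from collections import Counter

def bag_of_words(query: str) -> Counter:
    """Treat a query as an unordered pile of words, ignoring word order."""
    return Counter(query.lower().split())

to_usa = "2019 brazil traveler to usa need a visa"
to_brazil = "2019 usa traveler to brazil need a visa"  # hypothetical reversed query

# Same words, so a pure keyword matcher cannot tell the two queries apart.
same_keywords = bag_of_words(to_usa) == bag_of_words(to_brazil)

# The word order differs, and that order carries the direction of travel.
same_order = to_usa.split() == to_brazil.split()

print(same_keywords, same_order)  # True False
```

Both queries contain exactly the same words, so anything that only counts keywords treats them as identical. The direction of travel lives entirely in the word order around “to”.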

Pro Tip: At launch, Google said BERT affected about 1 in 10 English-language searches in the U.S. and called it its biggest leap forward in search in five years. If you saw ranking changes in late 2019 or early 2020, BERT may have been involved.

For bloggers, this means one critical thing: Context is king. Not keywords. Not word count. Not backlinks. Context and natural language that actually answers questions.

How Does Google BERT Actually Work? (Without the Math)

Let’s break down how BERT changed SEO forever without drowning in technical details.

The Bidirectional Magic

The “bidirectional” part is what makes BERT special. Traditional language models read text in one direction:

Old way (left to right): “The bank…” [the model has to guess here, before it reaches “of the river”]

- Prediction: “bank” could mean a financial institution or a riverbank, and the model has to decide before it ever reads “river”

BERT way (both directions at once): “The bank of the river”

- Understanding: Reads “bank” and “river” together and knows right away it’s a riverbank, not a Wells Fargo

This bidirectional reading lets BERT NLP understand that:

- “A parking fine” ≠ “parking is fine” (one’s a penalty, the other means it’s okay)

- “Reservation” means a table booking in a restaurant query and tribal land in a query about Native American nations

- “Right” could mean correct, direction, or entitlement—BERT uses context to decide
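If you like seeing ideas in code, here is a toy Python sketch of the “bank” example. It is nothing like BERT's real internals; the clue-word lists and the `guess_sense` function are hypothetical, invented only to show why seeing the words on both sides helps.

```python
# Hypothetical clue words for two senses of "bank"; a real model learns these from data.
SENSE_CLUES = {
    "riverbank": {"river", "water", "fishing", "shore"},
    "financial institution": {"money", "loan", "account", "deposit"},
}

def guess_sense(context_words):
    """Pick the sense whose clue words overlap most with the context, or None if no clue is present."""
    scores = {sense: len(clues & set(context_words)) for sense, clues in SENSE_CLUES.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else None

words = "the bank of the river".split()
position = words.index("bank")

left_only = words[:position]                          # what a left-to-right reader has seen so far
both_sides = words[:position] + words[position + 1:]  # words before and after "bank"

print(guess_sense(left_only))   # None
print(guess_sense(both_sides))  # riverbank
```

With only the words to the left, there is nothing to go on. Once the words on the right are included, “river” settles the question.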

The Transformer Architecture (Simplified)

BERT uses something called “transformers” (no, not the robots). A transformer is a neural network design built around attention: a mechanism that weighs how important each word is to every other word in a sentence.

Example: “The animal didn’t cross the street because it was too tired.”

BERT’s attention mechanism asks:

- What does “it” refer to?

- Weighs “animal” vs. “street”

- Considers “tired” (animals get tired, streets don’t)

- Conclusion: “it” = animal

Pre-BERT systems struggled with this. BERT handles it naturally by processing all of these relationships at once.
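For the curious, the weighing step can be sketched in a few lines of Python. This is a heavily simplified illustration of scaled dot-product attention, assuming hand-made three-number vectors that I invented for the example; real models learn much larger vectors from data.

```python
import math

# Hand-made, hypothetical "embeddings": [is_animate, is_place, can_get_tired].
keys = {
    "animal": [1.0, 0.0, 1.0],
    "street": [0.0, 1.0, 0.0],
}
# Hypothetical query for "it" in "...because it was too tired":
# it is looking for something animate that can get tired.
query_it = [1.0, 0.0, 1.0]

def attention_weights(query, keys):
    """Scaled dot-product scores turned into weights that sum to 1 (softmax)."""
    scale = math.sqrt(len(query))
    scores = {
        word: sum(q * k for q, k in zip(query, vec)) / scale
        for word, vec in keys.items()
    }
    total = sum(math.exp(s) for s in scores.values())
    return {word: math.exp(s) / total for word, s in scores.items()}

weights = attention_weights(query_it, keys)
print(max(weights, key=weights.get))  # animal
```

The word with the highest weight is the one “it” pays the most attention to. Here that is “animal”, because its made-up vector lines up with what “it” is looking for.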

Pre-training + Fine-tuning (Why BERT Is So Smart)

BERT was pre-trained on massive text datasets (English Wikipedia plus 11,000+ books) to understand language patterns before being fine-tuned for search.

Pre-training phase:

- Read roughly 3.3 billion words of text

- Learn grammar, syntax, relationships

- Understand contextual word meanings

- Build comprehensive language model

Fine-tuning for search:

- Applied to query understanding

- Adapted for search result relevance

- Optimized for user satisfaction

- Integrated with ranking systems

This two-phase approach is why Google BERT understands language nuance so well—it learned how humans actually communicate before learning how to apply that to search.
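One of the main pre-training exercises in the published BERT research is a fill-in-the-blank game: hide roughly 15% of the words and ask the model to guess them from the words on both sides. Here is a minimal Python sketch of how such practice examples could be prepared. The `mask_words` function is my own simplification; the real procedure works on sub-word pieces and has extra rules this sketch leaves out.

```python
import random

def mask_words(sentence, mask_rate=0.15, seed=0):
    """Hide a random share of words and remember the hidden ones as training targets."""
    rng = random.Random(seed)  # fixed seed so the example is repeatable
    words = sentence.split()
    n_masked = max(1, round(len(words) * mask_rate))
    positions = sorted(rng.sample(range(len(words)), n_masked))
    targets = {i: words[i] for i in positions}
    masked = ["[MASK]" if i in targets else w for i, w in enumerate(words)]
    return masked, targets

masked, targets = mask_words("the animal did not cross the street because it was too tired")
print(" ".join(masked))  # the sentence with two words hidden
print(targets)           # position -> hidden word, i.e. the "answer key"
```

The model never sees the answer key while guessing. It only gets graded against it afterwards, which is how it picks up grammar and word relationships without anyone labeling the text by hand.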

For practical BERT optimization, this means: Write like a human explaining something to another human. BERT was trained on natural language, so natural language is what it rewards.

BERT vs RankBrain: Understanding the Difference

People constantly confuse these two AI systems. Let’s clear this up once and for all.

What RankBrain Does

RankBrain (launched 2015):

- Primary job: Interprets query intent and measures user satisfaction

- How it works: Machine learning based on user behavior patterns

- Strength: Handling ambiguous or never-seen-before queries

- Focus: Which results satisfy users best?

What BERT Does

BERT (launched 2019):

- Primary job: Understands the contextual meaning of words in queries and content

- How it works: Deep learning neural networks analyzing word relationships

- Strength: Understanding nuanced, conversational, and complex language

- Focus: What does this query/content actually mean?

How They Work Together

Think of them as teammates, not competitors:

User searches: “can you get a prescription filled for someone else”

- BERT processes language: Understands “for someone else” is the critical context distinguishing this from getting your own prescription

- RankBrain matches intent: Recognizes this is informational (needs pharmacy policy info, not prescription services)

- Together they rank: Pages that both understand the language nuance AND satisfy the informational intent

The Key Difference for Bloggers

RankBrain asks: “Does this content satisfy users who searched this?” BERT asks: “Does this content actually understand what the search means?”

Practical implication: RankBrain rewards engagement signals (dwell time, CTR). BERT rewards accurate, contextually-appropriate content that demonstrates genuine understanding of the query’s nuance.

You can’t optimize for one without the other. For the complete picture on how these systems integrate, check out understanding AI and machine learning in modern SEO.

BERT Update Impact: What Actually Changed for Bloggers

When BERT rolled out in October 2019, it fundamentally changed which content ranks. Here’s what happened.

The Winners: Conversational, Natural Content

Content that suddenly ranked better:

- Long-tail conversational queries: “What should I do if my cat won’t stop meowing at night” (natural question phrasing)

- Nuanced distinction content: Articles explaining subtle differences (“affect vs effect explained”)

- Context-dependent topics: Content where small words matter (“traveling to” vs “traveling from”)

- Question-answering format: Direct, natural answers to specific questions

- Natural language: Content written conversationally, not keyword-stuffed

Real example from Search Engine Journal:

A health blog saw their article “how to know if you need to see a doctor for a cough” jump from position 15 to position 3 after BERT. Why? It naturally used conversational language patterns that matched how people actually phrase health questions.

The Losers: Keyword-Stuffed, Unnatural Content

Content that dropped:

- Keyword-focused listicles: Pages targeting exact-match phrases without natural context

- Thin content: Surface-level articles that mentioned keywords but didn’t genuinely explain topics

- Awkwardly written pages: Content written “for SEO” with unnatural phrasing

- Preposition-ignorant content: Pages that didn’t account for contextual nuances

- Jargon-heavy technical writing: Content that didn’t explain things naturally

Real impact data:

According to SEMrush analysis of BERT’s initial rollout:

- ~30% of long-tail queries saw ranking changes

- Voice search results saw particularly dramatic shifts (60%+ of voice queries affected)

- Question-based queries experienced the most improvement in result quality

- Featured snippets changed for ~40% of queries with conversational phrasing

The Surprising Truth: Most Sites Saw Little Change

Here’s what most SEO “experts” won’t tell you: If you were already writing naturally for humans, the BERT update’s impact on you was minimal.

BERT helped:

- Searchers get better results (primary goal achieved)

- Well-written, genuinely helpful content rank better

- Conversational content compete with keyword-stuffed pages

BERT hurt:

- Low-quality content that manipulated rankings through exact-match keywords

- Content that didn’t actually understand query nuance

- Pages written “for Google” rather than humans

Pro Tip: If BERT devastated your rankings, it’s a sign your content wasn’t genuinely helpful—it was gaming the system. The fix isn’t to “optimize for BERT”—it’s to create actually valuable content.

Optimizing Content for BERT Algorithm: The Right Way

Here’s the good news: You can’t really “optimize” for BERT in the traditional SEO sense. And that’s actually great news for bloggers who care about quality.

Strategy #1: Write Conversationally (Like You’re Talking to a Friend)

BERT rewards natural communication. Write how you’d explain something over coffee, not how a textbook sounds.

❌ Bad (Pre-BERT style): “SEO optimization strategies for websites involve keyword research, on-page optimization, and link building. Effective SEO optimization requires understanding search engine algorithms. SEO optimization best practices include content optimization and technical SEO optimization.”

✅ Good (BERT-friendly): “Want to improve your SEO? Start by understanding what your audience actually searches for. Then create content that genuinely answers their questions. Add some technical polish, build relationships for natural links, and watch your rankings improve.”

See the difference? The second version uses natural contractions, asks questions, speaks directly to the reader, and varies sentence structure. That’s what BERT understands and rewards.

Strategy #2: Focus on Context and Nuance

Small words matter to BERT. Prepositions, articles, and conjunctions provide crucial context.

Examples where context changes everything:

- “How to fix a leaky faucet” vs “How much to fix a leaky faucet” (DIY tutorial vs. cost information)

- “Best phone for seniors” vs “Best phone from seniors” (senior-friendly vs. hand-me-downs)

- “Working from home tips” vs “Working at home tips” (remote work vs. home office setup)

Your content must acknowledge these nuances. Don’t just stuff in keywords—genuinely address the specific question being asked.

Strategy #3: Answer Complete Questions Naturally

Structure your content to answer the actual questions people ask, using natural question phrasing in headers.

Question-Based Header Examples:

Instead of: “Password Reset Process” Use: “How Do I Reset My Password If I Forgot It?”

Instead of: “Plant Watering Frequency” Use: “How Often Should You Water Indoor Plants?”

Instead of: “Dog Training Timeline” Use: “How Long Does It Take to Potty Train a Puppy?”

Why this works for BERT: These headers match conversational voice queries and demonstrate you understand the actual question being asked—not just the topic keywords.
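If you have a lot of posts to audit, a small script can flag headers that aren't phrased as questions. Here is a rough Python sketch; the list of question starters and the `is_question_header` function are my own assumptions, so treat it as a first-pass filter and not a rule Google applies.

```python
# Hypothetical list of words that usually open a question; extend it for your niche.
QUESTION_STARTERS = (
    "how", "what", "when", "where", "why", "who", "which",
    "can", "do", "does", "is", "are", "should",
)

def is_question_header(header: str) -> bool:
    """True if the header starts with a question word and ends with a question mark."""
    text = header.strip()
    if not text:
        return False
    first_word = text.split()[0].lower()
    return first_word in QUESTION_STARTERS and text.endswith("?")

headers = [
    "Password Reset Process",
    "How Do I Reset My Password If I Forgot It?",
    "Plant Watering Frequency",
    "How Often Should You Water Indoor Plants?",
]
for header in headers:
    print(is_question_header(header), header)
```

Headers that come back `False` aren't automatically bad. They are simply candidates for a second look.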

Strategy #4: Use Natural Language Variations

BERT understands synonyms and related concepts. You don’t need to repeat exact keywords; just cover the topic thoroughly.

Example approach for “how to lose weight”:

Instead of repeating “lose weight” 50 times, naturally vary your language:

- Shed pounds

- Drop those extra kilograms

- Trim down

- Reduce body fat

- Reach your goal weight

- Get in shape

BERT understands these all relate to the same concept. Natural variation actually helps—it signals comprehensive coverage rather than keyword manipulation.
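A quick way to catch yourself repeating a phrase too often is to count it. The Python sketch below measures how many times an exact phrase shows up per 100 words. The `phrase_rate` function and both sample texts are hypothetical, and there is no official “safe” number; it's only a self-check to compare drafts.

```python
import re

def phrase_rate(text: str, phrase: str) -> float:
    """Occurrences of an exact phrase per 100 words of text (case-insensitive)."""
    lowered = text.lower()
    words = re.findall(r"[a-z0-9']+", lowered)
    if not words:
        return 0.0
    hits = len(re.findall(r"\b" + re.escape(phrase.lower()) + r"\b", lowered))
    return 100.0 * hits / len(words)

stuffed = ("Lose weight fast with our lose weight tips. "
           "To lose weight you need a lose weight plan.")
varied = ("Want to lose weight? Start with small habits. "
          "Most people shed pounds faster once they trim down portions.")

print(round(phrase_rate(stuffed, "lose weight"), 1))
print(round(phrase_rate(varied, "lose weight"), 1))
```

If one draft scores several times higher than another for the same phrase, that's usually a sign to swap in some of the variations listed above.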

Strategy #5: Provide Contextual Depth

BERT rewards content that demonstrates genuine understanding of a topic’s nuances, not just surface-level keyword coverage.

Shallow approach: “Yoga is good for flexibility. Do yoga to improve flexibility. Yoga flexibility benefits include better flexibility.”

Contextual depth approach: “Yoga improves flexibility through progressive stretching and joint mobilization. Different styles offer varying benefits: Yin yoga focuses on deep connective tissue flexibility, while Vinyasa builds dynamic range of motion. Expect to see noticeable improvements within 4-6 weeks of consistent practice.”

The second example shows understanding of the topic’s nuances, which is exactly what writing for BERT means.

Strategy #6: Don’t Overthink It (Seriously)

The best BERT optimization advice? Stop trying to optimize for BERT.

BERT rewards:

- Natural writing

- Genuine expertise

- Contextual understanding

- Conversational tone

- Complete answers

You achieve all of this by simply being a good blogger who cares about helping readers. If you’re obsessing over “optimizing for BERT,” you’re missing the point.

Write great content that genuinely helps people, and BERT will reward you automatically.

For building comprehensive content that satisfies both BERT and user needs, explore creating topic authority in the age of AI search.

Common BERT Mistakes Bloggers Make (And How to Fix Them)

Even bloggers who know about BERT still mess this up. Here are the most common mistakes.

Mistake #1: Trying to “Hack” BERT

The attempt: Bloggers try to stuff conversational keywords or manufacture natural-sounding content that’s actually robotic.

Example of fake natural: “You might be wondering, how can I improve my SEO? Well, let me tell you, improving SEO requires understanding algorithms. Speaking of algorithms, BERT algorithm is important. What is BERT algorithm, you ask?”

Why it fails: BERT detects unnatural patterns. This forced conversational style screams “trying too hard” and lacks genuine helpfulness.

The fix: Actually write to help someone. Forget BERT exists. Explain your topic like you’re helping a friend solve a problem.

Mistake #2: Ignoring the Complete Question

The attempt: Target keywords without understanding query nuance.

Example:

Query: “Can you bring food through airport security?”

Content focuses on: General airport rules about luggage

Why it fails: BERT understands “through security” is the critical context. Content about general luggage rules doesn’t address the specific security checkpoint question.

The fix: Analyze the complete query. What’s the user really asking? Address that specific concern, not just the topic keywords.

Mistake #3: Writing for Keywords Instead of Context

The problematic approach: “Best laptops best laptops for students include budget best laptops and premium best laptops. Best laptops for college students need good battery life.”

Why it’s terrible: Even if you make it grammatically correct, focusing on keyword repetition over contextual information fails BERT’s understanding test.

The right approach: “College students need laptops that balance performance, portability, and price. Look for models with 8+ hour battery life for all-day classes, lightweight designs for easy carrying, and enough processing power for research, papers, and occasional streaming.”

See how the second version provides context, reasons, and specific criteria? That’s what BERT rewards.

Mistake #4: Oversimplifying to the Point of Uselessness

The misconception: “BERT likes simple language, so I’ll make everything super basic.”

Bad implementation: “Dogs are pets. Dogs need food. Feed your dog. Dogs like walks. Walk your dog.”

Why it fails: Simple ≠ simplistic. BERT wants clear communication with appropriate depth, not elementary-school-level writing.

The balance: “Dogs thrive on routine. Feed your dog twice daily at consistent times—this regulates digestion and prevents begging. Most dogs need 30-60 minutes of daily exercise, adjusted for age, breed, and health status.”

Clear, conversational, yet substantial. That’s the sweet spot.

Mistake #5: Forgetting About Long-Tail Conversational Queries

The oversight: Targeting only short, obvious keywords while ignoring specific long-tail questions.

What they miss:

- “How long after eating should I wait to walk my dog”

- “Can I give my dog peanut butter every day”

- “What should I do if my dog ate chocolate but seems fine”

Why this matters: BERT’s impact on search results was most dramatic for these longer, more specific, conversational queries. They’re often easier to rank for and convert better because intent is crystal clear.

The opportunity: Create content specifically answering these detailed questions using natural question phrasing.

Mistake #6: Prioritizing Length Over Quality

The flawed logic: “More words = more context = BERT loves it”

The reality: A rambling 3000-word article that takes forever to answer the question frustrates users, and the negative engagement signals that follow get picked up by systems like RankBrain.

Better approach: Answer the question directly and quickly, then provide optional depth for those who want more detail. Use clear sections so users can easily find relevant information.

Pro Tip: Use Google’s “People Also Ask” boxes to identify related contextual questions your content should address. These are goldmines for understanding what BERT considers contextually related to your topic.

BERT and Featured Snippets: The Connection You Need to Understand

BERT dramatically changed featured snippet selection. Here’s how to leverage this.

Why BERT Loves Snippets (And Snippets Love BERT)

Featured snippets require clear, direct answers, which is exactly what BERT helps Google identify.

The connection:

- BERT understands which content directly answers the question

- Featured snippets reward clear, well-structured answers

- Together, they favor content that gets straight to the point

Impact data: After BERT’s rollout, Google reported that snippet quality improved significantly for conversational and long-tail queries. Snippets became more accurate and relevant.

How to Structure Content for BERT-Powered Snippets

The Direct Answer Formula:

- Use the question as your H2 header (natural language phrasing)

- Answer in the first 40-60 words of that section (paragraph format)

- Provide supporting details below the direct answer

- Use formatting (lists, tables, steps) when appropriate

Example Structure:

H2: How Long Does It Take for SEO to Work?

Most websites see significant SEO results within 4-6 months of consistent optimization. New sites or highly competitive niches may take 6-12 months, while established sites in less competitive spaces might see improvements in 2-3 months. The timeline depends on your starting point, competition level, and optimization quality.

[Additional details follow…]

Why this works: BERT identifies this as a direct answer to the question. The natural language structure makes it snippet-worthy.
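To check whether an opening answer lands in the 40-60 word range from the formula above, a tiny Python helper is enough. This is a sketch with function names I made up; the range comes from this article's guideline, not from any Google specification.

```python
def answer_word_count(paragraph: str) -> int:
    """Count whitespace-separated words in the opening answer paragraph."""
    return len(paragraph.split())

def fits_direct_answer_range(paragraph: str, low: int = 40, high: int = 60) -> bool:
    """Check the paragraph against the 40-60 word guideline."""
    return low <= answer_word_count(paragraph) <= high

answer = (
    "Most websites see significant SEO results within 4-6 months of consistent "
    "optimization. New sites or highly competitive niches may take 6-12 months, "
    "while established sites in less competitive spaces might see improvements "
    "in 2-3 months. The timeline depends on your starting point, competition "
    "level, and optimization quality."
)
print(answer_word_count(answer), fits_direct_answer_range(answer))
```

Run it on the first paragraph under each question header. Anything far outside the range is worth tightening or expanding.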

Question Types That Benefit Most from BERT

Certain query types saw the biggest snippet improvements post-BERT:

High-impact question types:

- How-to with specific conditions: “How to remove red wine stains from carpet”

- Time-based questions: “When should I plant tomatoes in zone 7”

- Comparison questions: “What’s the difference between jam and jelly”

- Troubleshooting questions: “Why won’t my iPhone charge past 80%”

- Permission/possibility questions: “Can you bring a lighter on a plane”

Optimization approach: Create dedicated sections for each specific question variant, using natural question phrasing in headers.

The Voice Search Connection

BERT’s impact is especially pronounced for voice search because voice queries are naturally conversational, which is BERT’s sweet spot.

Voice query characteristics:

- Longer (7-15 words vs. 2-4 words typed)

- Question-based (“Hey Google, what’s the best…”)

- Conversational (“How do I…” not “iPhone charging fix”)

- Context-dependent (“near me,” “open now,” “for my situation”)

Optimization strategy:

- Write content that sounds natural when read aloud

- Answer specific questions directly

- Use conversational language throughout

- Structure for featured snippets (voice assistants pull from these)

According to a 2024 report from BrightEdge, 58% of voice search results come from featured snippets—and BERT is the engine making that snippet selection more accurate.

For more on voice optimization in the BERT era, see conversational AI and modern search strategies.

Real Examples: Content That Wins With BERT vs. Content That Loses

Let’s look at content examples to see how Google’s BERT algorithm plays out in real-world searches.

Example 1: Health Query

Query: “can you take ibuprofen with tylenol”

Content that loses (Pre-BERT winner):

- Title: “Ibuprofen and Tylenol Information”

- Includes both keywords but dances around the direct answer

- Talks about each medication separately

- Buried answer: “Consult your doctor”

- Written like a medical disclaimer, not a helpful guide

Content that wins (BERT-optimized):

- Title: “Can You Take Ibuprofen and Tylenol Together?”

- First paragraph: “Yes, you can safely take ibuprofen and Tylenol (acetaminophen) together. They work through different mechanisms and don’t negatively interact. Many doctors recommend alternating them for pain management.”

- Follows with specific dosing, timing, and safety information

- Written conversationally but accurately

- Includes medical sourcing and warnings

Why BERT prefers the winner: Direct answer to the specific question, natural language, contextual understanding that “with” means “at the same time” not just “information about both.”

Example 2: Travel Query

Query: “do you need a passport to go to puerto rico from the united states”

Content that loses:

- Focuses on “Puerto Rico passport requirements” generically

- Mentions passport information for international travelers

- Doesn’t directly address the domestic travel nuance

- Keyword-stuffed with “Puerto Rico” and “passport”

Content that wins:

- Directly states: “No, U.S. citizens don’t need a passport to visit Puerto Rico because it’s a U.S. territory. Treat it like traveling to any U.S. state—a driver’s license or state ID is sufficient for the flight.”

- Explains the common confusion

- Addresses related concerns (what non-citizens need)

- Natural, conversational explanation

BERT’s advantage: Understands “from the United States” is critical context. The answer for U.S. citizens vs. international visitors differs completely.

Example 3: Technical Query

Query: “how to add column in excel between two columns”

Content that loses:

- Title: “Excel Column Management Tutorial”

- Generic instructions about adding columns

- Doesn’t specifically address “between” existing columns

- Assumes reader knows where to click

Content that wins:

- Title: “How to Insert a Column Between Existing Columns in Excel”

- “To add a column between two existing columns: Right-click the column header where you want the new column to appear, then select ‘Insert’ from the menu. Excel will insert a new column to the left of your selected column.”

- Includes screenshots showing exactly this scenario

- Addresses common confusion about left vs. right insertion

- Provides keyboard shortcut alternative

BERT’s recognition: “Between two columns” is specific—not just “add a column.” The winning content understands this nuance and addresses it explicitly.

Example 4: Shopping Query

Query: “best laptop for video editing under $1000”

Content that loses:

- Generic laptop roundup with various prices

- Focuses on “best laptops” broadly

- Video editing mentioned as one of many uses

- Includes models above $1000

- No clear winner for the specific criteria

Content that wins:

- Title matches query exactly

- Immediately clarifies: “For video editing under $1000, prioritize RAM (16GB minimum), dedicated GPU, and fast storage. Here are the top 5 models that meet these criteria…”

- Every recommendation is under $1000

- Explains why each choice works for video editing specifically

- Includes performance benchmarks for video rendering

BERT’s understanding: “For video editing” and “under $1000” are constraints that completely change which laptops are appropriate. Generic lists don’t satisfy this specific need.

The Pattern: Specificity + Context + Natural Language

Winning content consistently:

- Addresses the complete query, including contextual nuances

- Provides direct answers before elaborating

- Uses natural, conversational language

- Demonstrates genuine understanding of what’s being asked

- Anticipates related questions and addresses them

Losing content typically:

- Targets broad keywords without addressing specific context

- Buries answers or never provides them directly

- Uses stilted, keyword-focused writing

- Ignores critical contextual words (prepositions, qualifiers)

- Treats similar queries as interchangeable

This is optimizing content for BERT in action: not through tricks or hacks, but through genuinely helpful, contextually appropriate content.

BERT for International and Multilingual Content

Here’s something most English-focused bloggers miss: BERT rolled out globally, reaching search in 70+ languages.

Why This Matters Even for English Bloggers

If you have any international audience or compete with international content, BERT’s multilingual capabilities affect you.

BERT’s multilingual understanding:

- Launched initially for English (October 2019)

- Expanded to 70+ languages (December 2019)

- Understands context across all supported languages

- Handles code-switching (mixing languages) remarkably well

Practical implications:

- International competition increased: High-quality content in other languages now competes more effectively

- Translation quality matters more: Machine-translated content that doesn’t maintain contextual accuracy performs poorly

- Cultural context recognition: BERT understands regional language variations and cultural nuances

Language-Specific Optimization Tips

For multilingual sites:

- Don’t rely on automatic translation: BERT detects unnatural language patterns regardless of language

- Hire native speakers: Contextual nuance requires native-level fluency

- Consider regional variations: British English vs. American English, Latin American Spanish vs. Spain Spanish

- Maintain natural phrasing: Each language has its own conversational style—respect that

For English content targeting international audiences:

- Be aware of regional differences: “Trainers” (UK) vs. “sneakers” (US)

- Avoid idioms that don’t translate: BERT understands them, but international readers might not

- Use clear, accessible language: Especially important when readers aren’t native speakers

- Consider search behavior differences: Question phrasing varies by culture

Pro Tip: Use Google Search Console’s query reports filtered by country to see what your international audience actually searches for. The phrasing often differs from what you’d expect.

Measuring BERT’s Impact on Your Content

You can’t see a “BERT score” in analytics, but you can measure the signals that indicate BERT is working for (or against) you.

Metrics That Indicate BERT Success

1. Long-Tail Query Growth

Check Google Search Console for:

- Increase in 5+ word queries driving traffic

- Growth in question-based queries (“how to,” “what is,” “can you”)

- More conversational query patterns

BERT success signal: Rising traffic from specific, conversational long-tail queries means BERT is matching your content to nuanced searches.
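If you export your queries from Search Console, you can put a number on this. The Python sketch below works on a few hypothetical rows (the queries, click counts, and helper names are all invented for illustration) and reports what share of clicks comes from long, question-style queries.

```python
QUESTION_WORDS = {"how", "what", "why", "when", "where", "who", "which",
                  "can", "should", "does", "do", "is"}

# Hypothetical rows standing in for a Search Console query export.
sample_rows = [
    {"query": "bert seo", "clicks": 40},
    {"query": "how to write content for bert", "clicks": 25},
    {"query": "can you optimize a blog post for bert", "clicks": 15},
    {"query": "google bert update", "clicks": 20},
]

def is_long_tail(query, min_words=5):
    """A query counts as long-tail here if it has at least `min_words` words."""
    return len(query.split()) >= min_words

def is_question(query):
    """A query counts as a question if it opens with a common question word."""
    words = query.lower().split()
    return bool(words) and words[0] in QUESTION_WORDS

def click_share(rows, predicate):
    """Share of all clicks that come from queries matching `predicate`."""
    total = sum(row["clicks"] for row in rows)
    matching = sum(row["clicks"] for row in rows if predicate(row["query"]))
    return matching / total if total else 0.0

print(f"long-tail: {click_share(sample_rows, is_long_tail):.0%}")
print(f"questions: {click_share(sample_rows, is_question):.0%}")
```

Run the same calculation on exports from two different date ranges and compare the shares. A rising percentage is the growth signal described above.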

2. Featured Snippet Wins

Track:

- Number of queries where you hold featured snippets

- Particularly for question-based queries

- Snippet impressions and clicks

Why this matters: BERT dramatically improved snippet selection quality. Winning snippets indicates BERT recognizes your content as directly answering questions.

3. Improved Position for Specific Queries

Look for:

- Rankings improving for very specific queries (with prepositions, qualifiers)

- Better performance on “near me” and location-specific searches

- Rising positions for queries with implied context

The connection: These are exactly the query types BERT handles best.

4. Voice Search Traffic Indicators

Monitor:

- Mobile organic traffic (voice is primarily mobile)

- Average query length increasing

- Question-based query traffic growing

- Featured snippet traffic (voice pulls from snippets)

BERT + Voice: Since BERT powers much of voice search understanding, voice traffic growth suggests BERT is working for you.

Warning Signs BERT Isn’t Working For You

Red flags:

- Short-tail keywords rank, long-tail keywords don’t: Suggests your content isn’t contextually appropriate for specific queries

- High impressions, low CTR: Your snippet doesn’t accurately represent content (BERT mismatch)

- Traffic from irrelevant queries: Content matches keywords but not actual intent

- Featured snippet loss: If you lost snippets after October 2019, BERT likely identified better matches
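The “high impressions, low CTR” red flag is easy to check with a short script. Here is a Python sketch using hypothetical rows; the `low_ctr_queries` function and its thresholds (1,000 impressions, 1% CTR) are illustrative assumptions, not official benchmarks, so tune them to your own site.

```python
# Hypothetical rows standing in for a Search Console export.
ctr_rows = [
    {"query": "bert seo tips", "impressions": 5000, "clicks": 30},
    {"query": "how does bert read a query", "impressions": 800, "clicks": 64},
    {"query": "bert", "impressions": 90, "clicks": 0},
]

def low_ctr_queries(rows, min_impressions=1000, max_ctr=0.01):
    """Return (query, ctr) pairs for queries that are shown often but clicked rarely."""
    flagged = []
    for row in rows:
        ctr = row["clicks"] / row["impressions"] if row["impressions"] else 0.0
        if row["impressions"] >= min_impressions and ctr <= max_ctr:
            flagged.append((row["query"], round(ctr, 4)))
    return flagged

print(low_ctr_queries(ctr_rows))
```

Each flagged query is a page to reread with the diagnostic questions in mind: does the title and opening actually answer what that searcher asked?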

Diagnostic questions:

- Does your content directly answer the specific question being asked?

- Is your writing natural and conversational?

- Do you acknowledge contextual nuances (prepositions, qualifiers)?

- Would a voice assistant confidently read your answer?

If you’re answering “no” to these, BERT isn’t working optimally for your content.

Tools to Help Evaluate BERT Performance

Free tools:

- Google Search Console: Query reports, search appearance, performance by query type

- Google’s “People Also Ask”: Shows contextually related questions

- AnswerThePublic: Identifies natural question phrasings

- AlsoAsked: Maps question relationships (how BERT sees connections)

Paid tools:

- Semrush: Intent analysis, question-based keyword tracking

- Ahrefs: Content gap analysis, featured snippet opportunities

- Clearscope / Surfer SEO: Content optimization for natural language and comprehensiveness

For comprehensive tracking strategies, see measuring AI-powered search performance.

The Future: BERT’s Evolution and What’s Next

BERT isn’t static. Understanding where it’s headed helps you future-proof your content.

From BERT to MUM: The Natural Progression

Google’s MUM (Multitask Unified Model), announced in 2021, builds on BERT’s foundation:

What MUM adds:

- Multimodal understanding: Analyzes text, images, and video together

- Cross-language capabilities: Transfers knowledge across 75+ languages

- Complex query handling: Answers multi-step questions requiring synthesis

- Knowledge transfer: Applies expertise from one domain to related topics

Example of MUM’s power:

Query: “I’ve hiked Mt. Fuji. How should I train differently for Mt. Kilimanjaro?”

BERT understands: The language, the comparison, the training focus

MUM does more: Analyzes hiking requirements of both mountains, understands altitude differences, suggests training adjustments based on specific mountain characteristics, pulls from resources across languages

For bloggers: The trend is clear—create comprehensive content ecosystems (text + visuals + video) that thoroughly cover topics. Single-format, surface-level content will increasingly struggle.

Continued Refinement of Natural Language Understanding

Ongoing improvements:

- Better understanding of implied context: What’s not said but understood

- Emotional tone recognition: Understanding sentiment and urgency

- Regional and cultural nuance: More sophisticated handling of dialects and cultural references

- Real-time learning: Faster adaptation to language evolution and new terminology

What this means for you:

- Natural writing becomes even more important

- Authenticity and expertise signals matter more

- Content must demonstrate genuine understanding, not just keyword coverage

- The gap between high-quality and low-quality content will widen

The Convergence: AI Search Assistants

As chatbots and AI search assistants (ChatGPT search, Perplexity, Google’s AI Overviews, formerly SGE) become mainstream, the principles behind BERT apply even more strongly:

These systems prioritize:

- Clear, direct answers

- Natural language

- Contextual appropriateness

- Comprehensive coverage

- Genuine expertise

Blogger strategy: Content optimized for BERT is naturally well-positioned for AI search assistants. You’re already doing the right things.

Preparing for What’s Next

Future-proof content principles:

- Write conversationally: Will always serve you well as NLP improves

- Demonstrate expertise: AI can’t fake genuine knowledge

- Cover topics comprehensively: Depth beats breadth

- Use multimedia: Text + images + video creates resilience

- Answer specific questions: The more specific, the more valuable

- Build topic authority: Comprehensive coverage of your niche

The constant: Regardless of algorithm updates, genuinely helpful content that serves readers will win. BERT accelerated this trend; future updates will continue it.

Final Thoughts: BERT Made Search More Human

Here’s the beautiful irony of BERT: the more “advanced” and “AI-powered” Google becomes, the more it rewards simple, human, helpful content.

BERT didn’t make SEO harder—it made gaming SEO harder while making good content creation easier.

The BERT mindset shift:

❌ Old thinking: “How do I optimize this for search engines?”

✅ New thinking: “How do I best help someone asking this question?”

❌ Old approach: Keyword research → keyword placement → optimization tricks

✅ New approach: Question research → comprehensive answer → natural writing

❌ Old measure: Rankings, traffic, keyword density

✅ New measure: User satisfaction, engagement, answer quality

The bottom line: Stop trying to trick algorithms. Start helping humans. BERT rewards the latter automatically.

For bloggers, this is fantastic news. You don’t need to become an SEO expert. You need to become a great communicator who genuinely understands your audience’s questions and provides clear, helpful answers in natural language.

Do that consistently, and Google BERT becomes your ally, not your obstacle.

Want to build a comprehensive content strategy that works with BERT and beyond? Check out creating AI-proof content that ranks and understanding natural language processing in modern SEO.

Frequently Asked Questions (FAQs)

Q: What is BERT in simple terms? BERT is Google’s AI system that helps search engines understand the full context of words in a sentence by looking at all surrounding words simultaneously—both before and after. It’s like giving Google the ability to read like a human instead of a robot, understanding nuance, context, and conversational language.

Q: How do I optimize my content for BERT? You don’t “optimize” for BERT in the traditional sense. Instead, write naturally and conversationally, answer complete questions (including contextual nuances), use natural language variations, and focus on genuinely helping readers. BERT rewards content that sounds human because it was trained on natural human language.

Q: Did BERT replace RankBrain? No. BERT and RankBrain work together. BERT understands the contextual meaning of words in queries and content, while RankBrain interprets query intent and measures user satisfaction. Think of them as complementary systems, not replacements.

Q: When did BERT roll out and did it affect my rankings? BERT launched in English in October 2019 and expanded to 70+ languages by December 2019. It affected about 1 in 10 searches initially—particularly long-tail, conversational, and question-based queries. If you saw ranking changes in late 2019 or early 2020, BERT was likely involved.

Q: Does BERT affect every search query? Nearly every one. Google said in 2020 that BERT is used in almost every English-language query, though its impact varies. It has the most significant effect on longer, more conversational queries with contextual nuance; short, unambiguous queries see less change.

Q: Is BERT the same as Google’s AI Overview/SGE? No. BERT is the underlying natural language understanding technology. AI Overviews (formerly SGE) use multiple AI systems including BERT, MUM, and others to generate comprehensive answers. BERT is one component of the larger AI search ecosystem.

Q: Does keyword research still matter after BERT? Yes, but how you use keywords changed. BERT understands synonyms and context, so you don’t need exact-match keyword repetition. Research helps you understand what people search for and the intent behind queries—but natural language integration matters more than keyword density.

Q: How can I tell if BERT is helping or hurting my content? Check Google Search Console for: (1) Growing traffic from long-tail, conversational queries, (2) Featured snippet wins for question-based searches, (3) Improved rankings for specific, context-dependent queries. These signal BERT is working for you. Declining long-tail traffic suggests your content isn’t contextually appropriate.

Q: Should I rewrite all my old content for BERT? Only if it’s unnatural, keyword-stuffed, or doesn’t genuinely answer questions. If your content is already conversational and helpful, BERT likely already works for it. Prioritize updating pages that: (1) Lost rankings post-2019, (2) Have high impressions but low clicks, (3) Target long-tail keywords but don’t answer complete questions.
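The Search Console checks in the last two answers can be roughed out in a few lines of Python. This is a minimal sketch, not an official workflow: it assumes you have exported performance data into rows with hypothetical `query`, `page`, `clicks`, and `impressions` fields, and the question-word list and thresholds (4 words, 1,000 impressions, 1% CTR) are illustrative assumptions, not numbers Google publishes.

```python
# Illustrative sketch: field names, word list, and thresholds are assumptions,
# not part of any Google Search Console API.
QUESTION_WORDS = {"how", "what", "why", "when", "where", "who", "which",
                  "can", "do", "does", "is", "are", "should"}


def classify_query(query, long_tail_words=4):
    """Label a query as 'question', 'long-tail', or 'short'."""
    words = query.lower().split()
    if words and words[0] in QUESTION_WORDS:
        return "question"
    if len(words) >= long_tail_words:
        return "long-tail"
    return "short"


def conversational_click_share(rows):
    """Fraction of clicks that come from question or long-tail queries."""
    total = sum(row["clicks"] for row in rows)
    if total == 0:
        return 0.0
    conversational = sum(row["clicks"] for row in rows
                         if classify_query(row["query"]) != "short")
    return conversational / total


def low_ctr_pages(rows, min_impressions=1000, max_ctr=0.01):
    """Pages with many impressions but few clicks, lowest CTR first."""
    flagged = []
    for row in rows:
        impressions = row["impressions"]
        if impressions == 0 or impressions < min_impressions:
            continue
        ctr = row["clicks"] / impressions
        if ctr <= max_ctr:
            flagged.append((row["page"], ctr))
    return sorted(flagged, key=lambda item: item[1])


# Made-up sample rows standing in for an export.
query_rows = [
    {"query": "can you bring food through airport security", "clicks": 40},
    {"query": "bert seo", "clicks": 10},
    {"query": "parking on a hill with no curb", "clicks": 50},
]
page_rows = [
    {"page": "/visa-guide", "clicks": 5, "impressions": 2000},
    {"page": "/bert-basics", "clicks": 300, "impressions": 3000},
]

print("Conversational click share:", conversational_click_share(query_rows))
print("High-impression, low-CTR pages:", low_ctr_pages(page_rows))
```

Run it on two exports from different date ranges and compare the share: a rising number is a rough hint that conversational queries are finding your content, while the flagged pages are candidates for a rewrite.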

Author: Laura G. | AI & SEO Specialist

Making complex search algorithms understandable for content creators who just want to write great stuff and have it rank.

🧠 BERT Algorithm Explained

Understanding Google's Natural Language Processing Revolution

🎯 What is BERT?

Bidirectional Encoder Representations from Transformers - Google's neural network-based technique for natural language processing that understands the full context of words by looking at all surrounding words simultaneously, both before and after.

Before BERT:

- Left-to-right processing

- Missed contextual nuances

- Struggled with prepositions

- Poor at conversational queries

With BERT:

- Bidirectional understanding

- Grasps subtle context

- Understands word relationships

- Excellent with natural language

🔄 Bidirectional

BERT reads text in both directions simultaneously, understanding how every word relates to every other word—not just what comes before or after sequentially.

🎯 Context Master

Understands that "bank" means different things in "river bank" vs "bank account" by analyzing surrounding words and relationships.

💬 Natural Language

Trained on billions of sentences to understand how humans actually communicate, including idioms, prepositions, and conversational patterns.

🌍 Multilingual

Works across 70+ languages, understanding contextual nuances in multiple languages simultaneously with the same underlying principles.
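To make the "Bidirectional" idea concrete, here is a toy Python sketch of which words are available as context for a given word under left-to-right reading versus bidirectional reading. It is only an illustration of the concept: the `visible_context` helper is hypothetical, it splits on whitespace, and it does not reproduce how BERT actually works internally (the real model uses self-attention over subword tokens).

```python
def visible_context(tokens, index, bidirectional):
    """Return the other tokens that can inform the token at `index`.

    Left-to-right: only the tokens that come before it.
    Bidirectional: every other token in the sentence.
    """
    if bidirectional:
        return tokens[:index] + tokens[index + 1:]
    return tokens[:index]


tokens = "2019 brazil traveler to usa need a visa".split()
position = tokens.index("to")

print("left-to-right:", visible_context(tokens, position, bidirectional=False))
print("bidirectional:", visible_context(tokens, position, bidirectional=True))
```

In the left-to-right case, "to" is interpreted without ever seeing "usa", which is the kind of gap the visa example describes.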

[Figure: The BERT Processing Flow]

[Chart: how significantly BERT improved understanding for each query type, in percent]

English Launch (October 2019)

BERT initially rolled out for English queries, affecting about 1 in 10 searches. The focus was on longer, more conversational queries where context was critical.

Global Expansion (December 2019)

BERT expanded to 70+ languages worldwide, showing that it can handle contextual nuances across different language structures.

Featured Snippets Integration

BERT was applied to featured snippets, improving answer quality and snippet accuracy for question-based queries.

MUM Introduction (2021)

Google introduced MUM (Multitask Unified Model), which it describes as 1,000 times more powerful than BERT and which builds on the same transformer architecture.

Real-World BERT Examples

Query: “2019 brazil traveler to usa need a visa”

Pre-BERT: Showed visa requirements for Americans traveling TO Brazil. Focused on "Brazil" + "USA" + "visa" without understanding the "to USA" direction.

Post-BERT: Correctly showed visa requirements for Brazilians traveling TO the USA. Understood "to" as critical directional context.

Query: “can you get medicine for someone pharmacy”

Pre-BERT: Results about giving medicine TO someone or general pharmacy information. Missed the "for someone" context.

Post-BERT: Pharmacy policies about picking up prescriptions FOR another person. Understood that "for someone" means acting on their behalf.

Query: parking on a hill with no curb

Pre-BERT: General parking tips, mixed with parking fine information (interpreting "no curb" as "not illegal").

Post-BERT: Specific instructions for parking on hills without curbs (wheel positioning, safety measures). Understood that the curb is physically absent.

BERT vs RankBrain: Key Differences

| Aspect | RankBrain (2015) | BERT (2019) |
|---|---|---|
| Primary Function | Interprets query intent & measures satisfaction | Understands contextual meaning of words |
| Technology Type | Machine learning (pattern recognition) | Deep learning (neural networks) |
| Reading Method | Query understanding through patterns | Bidirectional simultaneous reading |
| Main Strength | Handling ambiguous/never-seen queries | Understanding nuanced, conversational language |
| What It Measures | User behavior & satisfaction signals | Contextual word relationships |
| Query Processing | Identifies what users want | Understands what query actually means |
| Training Data | User behavior patterns | Wikipedia + 11,000 books |
| Best For | New/ambiguous queries, intent matching | Complex language, prepositions, context |
| Works Together | ✅ They complement each other - BERT understands meaning, RankBrain measures satisfaction | |

💡 How They Work Together

Example Query: "can you bring food through airport security"

BERT's Role: Understands "through security" is the critical context - user wants to know about TSA checkpoint rules, not general airport food policies.

RankBrain's Role: Identifies this as informational intent, then measures which results best satisfy users asking this specific question.

Together: Content that both understands the nuanced question AND satisfies user needs ranks highest.

How to Optimize for BERT

💬 Write Naturally

Use conversational language like you're explaining to a friend. Avoid keyword stuffing and robotic phrasing. BERT rewards natural communication patterns.

🎯 Address Context

Small words matter. Pay attention to prepositions (to, from, for, at) and qualifiers. They completely change meaning, and BERT understands this.

❓ Answer Questions

Use natural question phrasing in headers. "How Do I...?" not just "Instructions." Provide direct answers in the first 40-60 words.

🔄 Use Variations

Natural synonyms and related terms, not keyword repetition. BERT understands semantic relationships, so comprehensive coverage beats density.

📖 Provide Depth

Demonstrate genuine understanding of nuances, not just surface-level keyword coverage. BERT detects contextual expertise.

🎤 Think Voice Search

Read content aloud. If it sounds unnatural, rewrite it. BERT powers voice search and rewards conversational flow.
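Two of the tips above (question-style headers and a direct answer within the first 40-60 words) are easy to spot-check with a short script. The sketch below is a rough heuristic under stated assumptions: the helper names, the starter-word list, and the idea of measuring the opening paragraph are all illustrative choices, not a test that Google applies.

```python
import re

# Assumed list of words that typically open a question-style heading.
QUESTION_STARTERS = {"how", "what", "why", "when", "where", "who", "which",
                     "can", "do", "does", "is", "are", "should"}


def is_question_heading(heading):
    """True if the heading ends with '?' or opens with a question word."""
    words = re.findall(r"[a-z']+", heading.lower())
    starts_like_question = bool(words) and words[0] in QUESTION_STARTERS
    return heading.strip().endswith("?") or starts_like_question


def opening_word_count(paragraph):
    """Number of whitespace-separated words in the opening paragraph."""
    return len(paragraph.split())


def answer_fits_window(paragraph, max_words=60):
    """True if the opening paragraph is short enough to read as a direct answer."""
    return 0 < opening_word_count(paragraph) <= max_words


for heading in ["How Do I Park on a Hill Without a Curb?", "Instructions"]:
    print(heading, "->", is_question_heading(heading))

opening = "Turn your wheels toward the side of the road and set the parking brake."
print(opening_word_count(opening), answer_fits_window(opening))
```

Treat the output as a prompt to reread the section, not a pass/fail grade: a 70-word opening that answers the question clearly still beats a 50-word one that dodges it.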

[Chart: content performance in BERT-powered search results, in percent]

🚫 Common BERT Mistakes to Avoid

1. Trying to "hack" BERT - Forced conversational style that's actually robotic

2. Ignoring complete questions - Targeting keywords without understanding query nuance

3. Keyword repetition - Focusing on density over contextual information

4. Oversimplifying - Making content so basic it loses substance

5. Forgetting long-tail - Missing specific conversational query opportunities

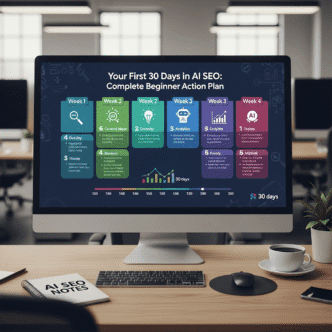

BERT Impact Statistics & Data

Source: aiseojournal.net - Your Authority on AI-Powered SEO

Data compiled from Google Official Research, SEMrush Analysis, Search Engine Journal, BrightEdge Reports & Industry Case Studies (2019-2024)
